You're Loading 66,000 Tokens of Plugins Before You Even Type. That's Why Your Limit Disappears.


Main Thesis

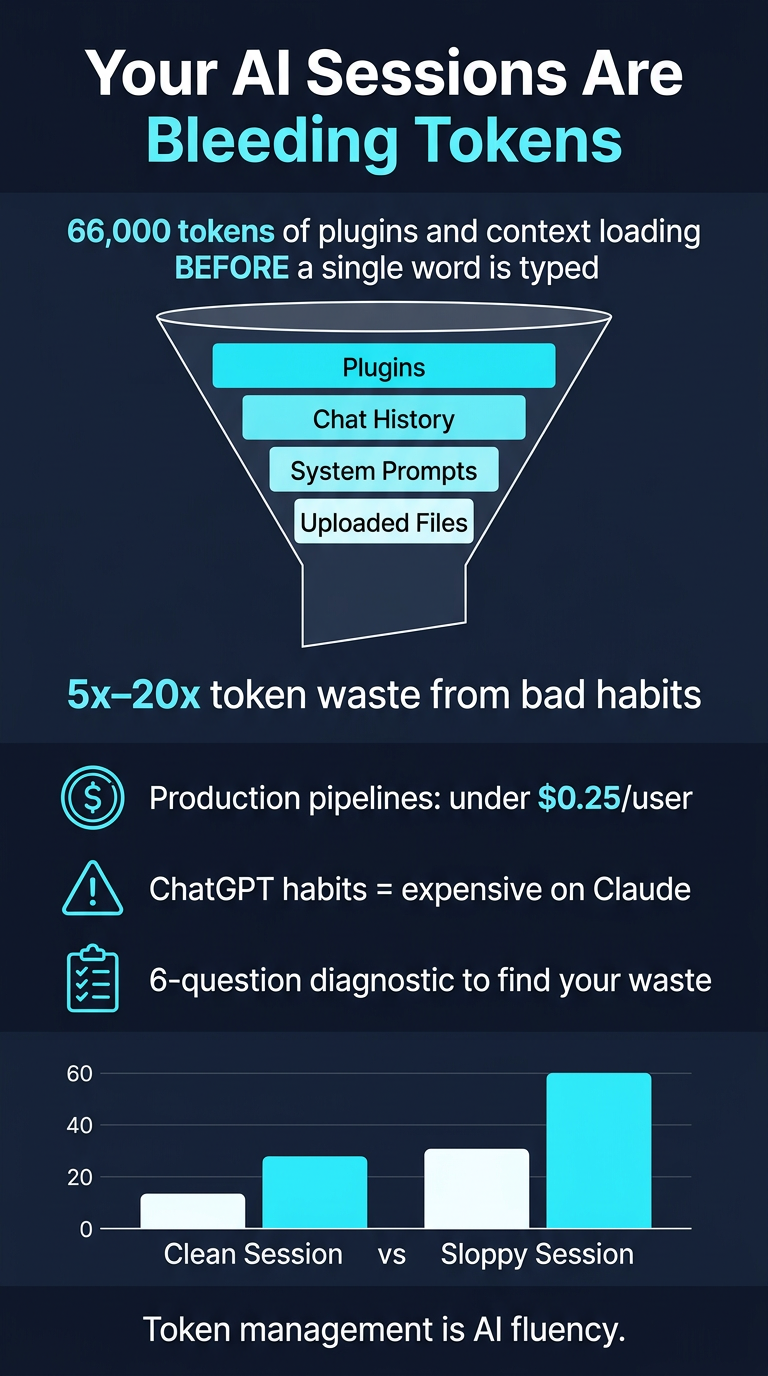

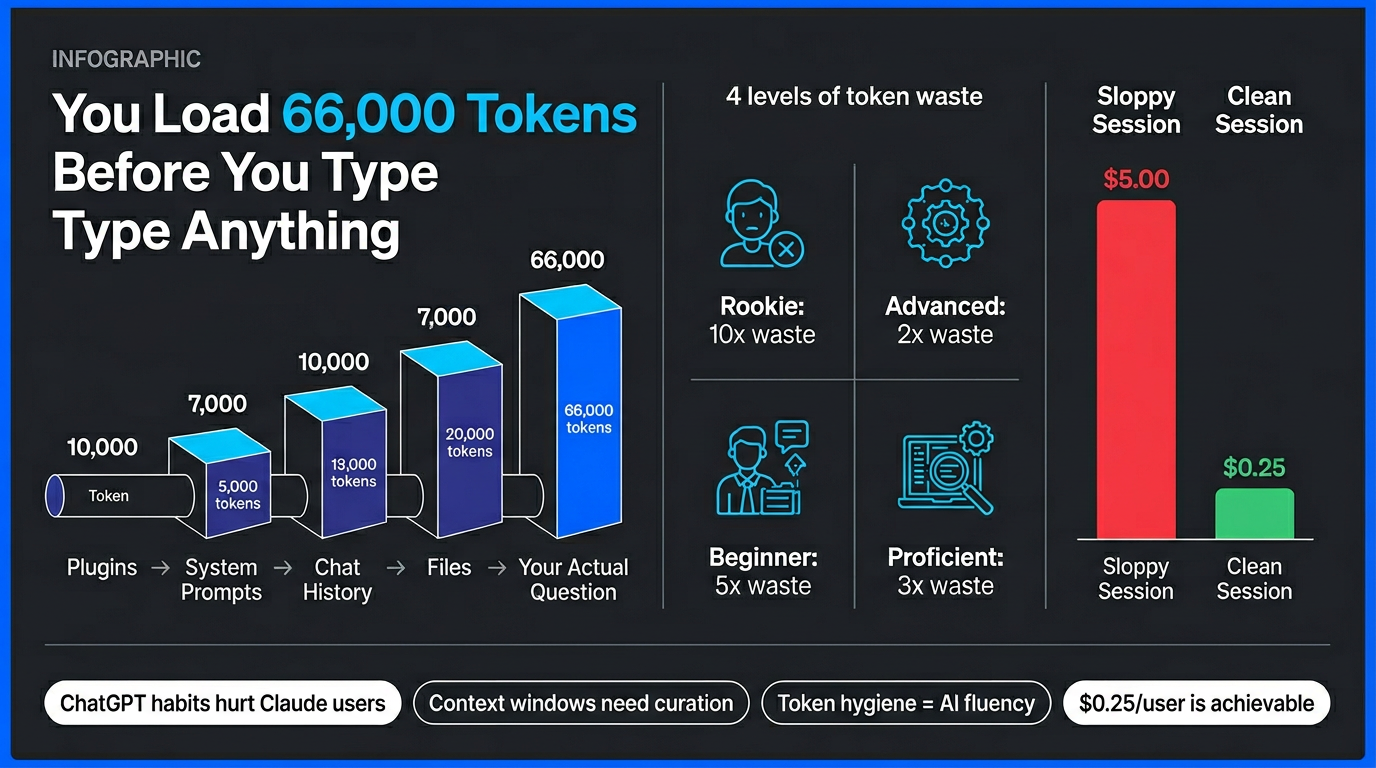

Most AI users burn 5x–20x more tokens (and money) than necessary because of bad habits, not because frontier AI is inherently expensive. A well-optimized production pipeline can serve a full personalized AI experience for less than $0.25 per user, yet most casual users spend far more just asking simple questions.

Key Findings

The Core Problem

- Users unknowingly load roughly 66,000 tokens of plugins, context, and other baggage before typing a single word

- This is the primary reason Claude usage limits disappear so fast

- The models aren't expensive — your habits are expensive

The ChatGPT Migration Problem

- Habits formed using ChatGPT are catastrophically expensive when applied to Claude

- There is reportedly a single key fix that changes everything (paywalled)

Four Levels of Token Waste

- Nate describes a hierarchy of waste, from rookie to "advanced" users, with real numbers attached

- Even experienced users often burn tokens unnecessarily

The Math Gap

- The cost gap between clean and sloppy sessions widens dramatically

- This has real implications for usage limits and pricing tiers
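
One way to see why the gap widens is a back-of-the-envelope model. The sketch below is not Nate's math. It assumes every turn resends the preloaded context plus the whole conversation so far, and it ignores prompt caching. The 66,000-token preload comes from the summary above; the 2,000-token "clean" preload and the 500 tokens added per turn are hypothetical numbers chosen only for illustration.

```python
def cumulative_input_tokens(preload_tokens: int, tokens_per_turn: int, turns: int) -> int:
    """Total input tokens sent over a session.

    Assumption: each turn resends the preloaded context (plugins, files,
    system prompt) plus all conversation history accumulated so far.
    """
    total = 0
    history = 0
    for _ in range(turns):
        history += tokens_per_turn          # this turn's message plus the prior reply, lumped together
        total += preload_tokens + history   # everything is sent again on every turn
    return total


SLOPPY_PRELOAD = 66_000  # figure quoted in the summary
CLEAN_PRELOAD = 2_000    # hypothetical lean setup
TOKENS_PER_TURN = 500    # hypothetical conversation growth per turn

for turns in (1, 10, 30):
    sloppy = cumulative_input_tokens(SLOPPY_PRELOAD, TOKENS_PER_TURN, turns)
    clean = cumulative_input_tokens(CLEAN_PRELOAD, TOKENS_PER_TURN, turns)
    print(f"{turns:>2} turns: sloppy={sloppy:,} clean={clean:,} gap={sloppy - clean:,}")
```

Under these assumptions the absolute gap grows by the preload difference (64,000 tokens) on every turn, so a long sloppy session costs far more than a long clean one even when the actual questions are identical.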

Practical Takeaways

- Run a 6-question self-diagnostic to determine if you are the source of the waste

- Be intentional about what context, files, and plugins you load into sessions

- Nate is building tools to address this: "The Stupid Button," KISS Commandments, and a Heavy File Ingestion skill in his OB1 repo

- Token management is framed as a core indicator of AI fluency — mastering it separates effective AI users from inefficient ones
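
Being intentional about what gets loaded can start with a rough audit. The sketch below is an illustration, not one of the tools named above. It assumes a crude rule of thumb of about 4 characters per token for English text (real tokenizers vary), and the names `estimate_tokens`, `audit_context`, and the sample items are hypothetical.

```python
CHARS_PER_TOKEN = 4  # assumption: rough average for English text, not an exact tokenizer


def estimate_tokens(text: str) -> int:
    """Crude token estimate based on character count."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def audit_context(items: dict[str, str], budget_tokens: int) -> list[tuple[str, int, float]]:
    """Return (name, estimated_tokens, share_of_budget) rows, largest first."""
    rows = [(name, estimate_tokens(text)) for name, text in items.items()]
    rows.sort(key=lambda row: row[1], reverse=True)
    return [(name, tokens, tokens / budget_tokens) for name, tokens in rows]


# Hypothetical session contents: each value stands in for the text actually loaded.
session = {
    "plugin_definitions": "x" * 200_000,
    "project_notes.md": "x" * 24_000,
    "question": "x" * 400,
}

for name, tokens, share in audit_context(session, budget_tokens=200_000):
    print(f"{name:<20} ~{tokens:>7,} tokens ({share:.1%} of budget)")
```

Sorting by size puts the heaviest item first, which is usually the first candidate to unload before starting a session.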

Bottom Line

The Claude usage limit crisis is partly real infrastructure strain, but partly self-inflicted by poor token hygiene. Learning to manage context windows deliberately is one of the highest-leverage skills an AI user can develop right now.