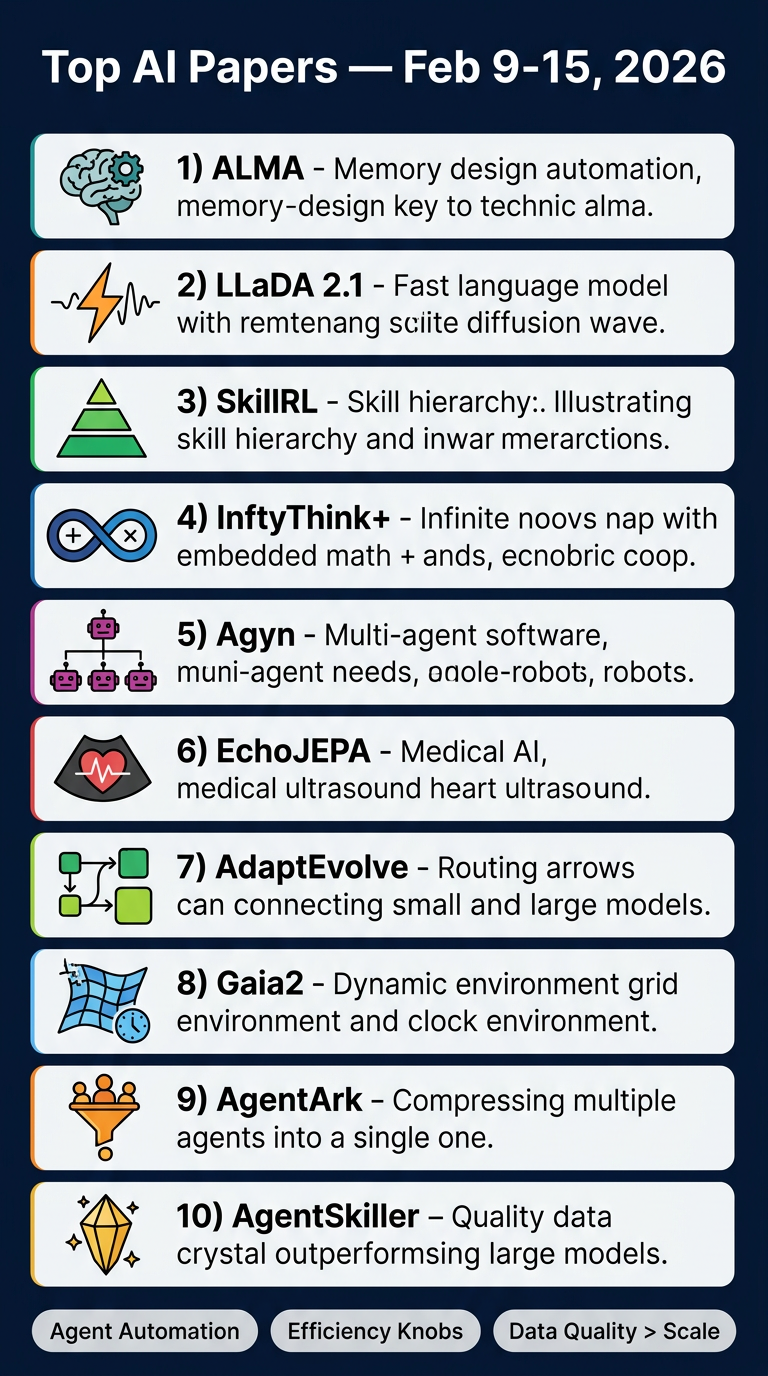

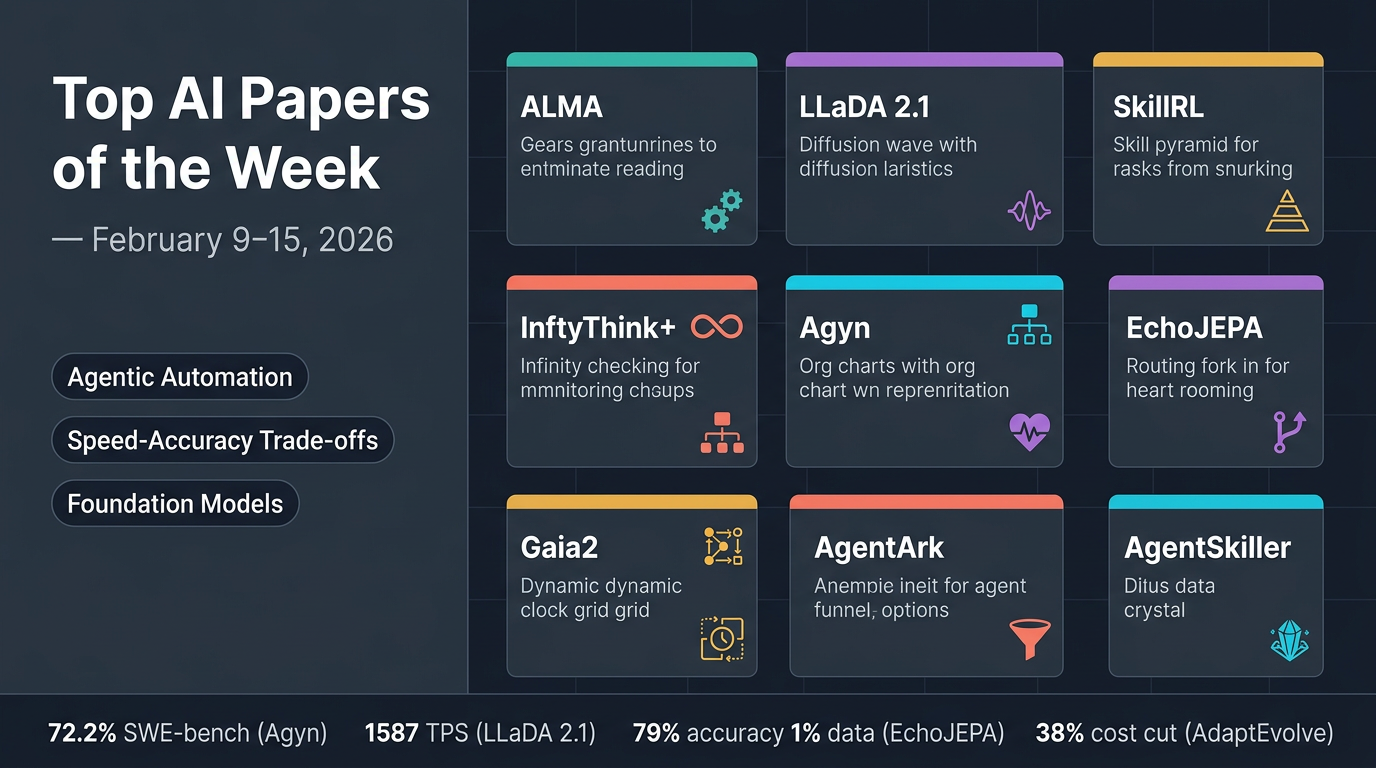

Top AI Papers of the Week (February 9–15, 2026)

From Elvis Saravia's AI Newsletter

This week's roundup covers ten significant AI research papers spanning agentic memory design, diffusion language models, reinforcement learning, medical AI, and multi-agent benchmarking.

1. ALMA — Automated Meta-Learning of Memory Designs for Agentic Systems

Main Thesis: Instead of hand-engineering memory modules for AI agents, ALMA uses a Meta Agent to automatically discover optimal memory architectures through open-ended exploration in code space.

Key Findings:

- Searches over database schemas, retrieval mechanisms, and update strategies as executable code

- Discovers domain-specific memory structures: affordance graphs (ALFWorld), task signature databases (TextWorld), strategy libraries (Baba Is AI), risk-interaction schemas (MiniHack)

- Achieves 12.3% average success with GPT-5-nano vs 8.6% for the best human-designed memory baseline; 53.9% with GPT-5-mini vs 48.6%

- Designs scale better with experience and transfer across foundation models

Practical Takeaway: Memory design for agents can be automated — no more hand-crafted modules needed. Paper
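To make "memory designs as executable code" concrete, here is a minimal Python sketch. It assumes a hypothetical `MemoryDesign` interface with `update` and `retrieve` hooks; the two candidate designs, the recall-based fitness function, and the pick-the-best loop are illustrative stand-ins. ALMA's Meta Agent writes and revises designs itself in an open-ended search rather than choosing from a fixed pool, and it scores them by agent task success.

```python
from __future__ import annotations

from abc import ABC, abstractmethod


class MemoryDesign(ABC):
    """Hypothetical interface: every candidate design is just code with two hooks."""

    @abstractmethod
    def update(self, observation: str, outcome: str) -> None: ...

    @abstractmethod
    def retrieve(self, query: str) -> str | None: ...


class RecencyMemory(MemoryDesign):
    """Remembers only the latest outcome and returns it for any query."""

    def __init__(self) -> None:
        self.last: str | None = None

    def update(self, observation: str, outcome: str) -> None:
        self.last = outcome

    def retrieve(self, query: str) -> str | None:
        return self.last


class KeyedMemory(MemoryDesign):
    """Indexes outcomes by observation, a crude 'task signature' table."""

    def __init__(self) -> None:
        self.table: dict[str, str] = {}

    def update(self, observation: str, outcome: str) -> None:
        self.table[observation] = outcome

    def retrieve(self, query: str) -> str | None:
        return self.table.get(query)


def evaluate(design: type[MemoryDesign], episodes: list[tuple[str, str]]) -> float:
    """Toy fitness: feed every episode into memory, then measure how often the
    design recalls the right outcome for each observation."""
    memory = design()
    for observation, outcome in episodes:
        memory.update(observation, outcome)
    hits = sum(memory.retrieve(obs) == out for obs, out in episodes)
    return hits / len(episodes)


def select_design(candidates: list[type[MemoryDesign]], episodes: list[tuple[str, str]]):
    """Stand-in for the Meta Agent: score each candidate and keep the best."""
    scores = {c.__name__: evaluate(c, episodes) for c in candidates}
    return max(scores, key=scores.get), scores


episodes = [("open door", "use key"), ("dark room", "light torch"), ("locked chest", "use key")]
best, scores = select_design([RecencyMemory, KeyedMemory], episodes)
print(best, scores)
```

Here the keyed table wins because each observation needs its own outcome, which a single-slot recency buffer cannot store.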

2. LLaDA 2.1 — Discrete Diffusion Language Model Upgrade

Main Thesis: Ant Group's LLaDA 2.1 breaks the speed-quality trade-off in diffusion LLMs via Token-to-Token (T2T) editing and the first large-scale RL framework for diffusion models.

Key Findings:

- T2T editing allows correction of already-generated tokens, not just unmasking

- Two modes: Speedy Mode (max throughput) and Quality Mode (benchmark accuracy)

- LLaDA 2.1-Flash (100B) reaches 892 tokens/sec on HumanEval+; LLaDA 2.1-Mini (16B) peaks at 1,587 tokens/sec

- Introduces EBPO (Evidence-Based Policy Optimization) for stable RL training across 33 benchmarks

Practical Takeaway: Diffusion LLMs can now rival autoregressive models on both speed and quality with a configurable trade-off knob. Paper
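The difference between plain unmasking and Token-to-Token editing can be illustrated with a toy decoding loop. This is not LLaDA 2.1's implementation: `toy_model`, the `MASK` sentinel, and the threshold values standing in for Speedy and Quality Mode are hypothetical. At each step, masked slots whose confidence clears `unmask_threshold` are filled (mask-to-token), and already-committed tokens the model now disagrees with are overwritten once confidence clears `edit_threshold` (token-to-token).

```python
MASK = None  # hypothetical sentinel for a not-yet-decoded position
TARGET = ["the", "cat", "sat", "on", "the", "mat"]


def toy_model(tokens):
    """Hypothetical denoiser: predicts TARGET everywhere, with one shared confidence
    that rises as more of the sequence is filled in (more context, more certainty)."""
    filled = sum(t is not MASK for t in tokens)
    conf = 0.3 + 0.7 * filled / len(tokens)
    return [(TARGET[i], conf) for i in range(len(tokens))]


def decode(tokens, unmask_threshold, edit_threshold, max_steps=20):
    """Iterative decoding with mask-to-token (M2T) and token-to-token (T2T) updates.
    Returns the final tokens and the number of steps taken."""
    tokens = list(tokens)
    for step in range(1, max_steps + 1):
        preds = toy_model(tokens)
        changed = False
        for i, (pred, conf) in enumerate(preds):
            if tokens[i] is MASK:
                if conf >= unmask_threshold:  # M2T: fill a masked slot
                    tokens[i] = pred
                    changed = True
            elif tokens[i] != pred and conf >= edit_threshold:  # T2T: revise a committed token
                tokens[i] = pred
                changed = True
        masked = [i for i, t in enumerate(tokens) if t is MASK]
        if masked and not changed:
            # Guarantee progress: commit the single most confident masked slot.
            best = max(masked, key=lambda i: preds[i][1])
            tokens[best] = preds[best][0]
        elif not masked and not changed:
            return tokens, step  # converged: nothing left to fill or fix
    return tokens, max_steps


draft = ["the", "dog", MASK, MASK, MASK, MASK]  # "dog" is an early mistake
print(decode(draft, unmask_threshold=0.5, edit_threshold=0.8))  # Speedy-style
print(decode(draft, unmask_threshold=0.9, edit_threshold=0.8))  # Quality-style
```

In this toy, the low unmask threshold finishes in 3 steps and the high one in 6; both runs end by replacing the early mistake "dog" with "cat" once enough context is filled in for the edit to clear its threshold.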

3. SkillRL — Recursive Skill-Augmented Reinforcement Learning

Main Thesis: SkillRL bridges raw experience and policy improvement by distilling trajectories into reusable high-level behavioral skills that co-evolve with the agent policy.

Key Findings:

- Hierarchical SkillBank extracts reusable patterns from raw trajectories, reducing token footprint

- Dual retrieval strategy combines general heuristics with task-specific skills

- Recursive co-evolution: better skills → better performance → better training data

- 89.9% success on ALFWorld, 72.7% on WebShop, 47.1% avg on search-augmented QA — outperforming baselines by over 15.3%

Practical Takeaway: Storing distilled skills rather than raw trajectories dramatically improves agent efficiency and scalability. Paper
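A two-level skill store with dual retrieval might look roughly like the sketch below. The `Skill` and `SkillBank` names, the word-overlap relevance score, and the `k` parameter are hypothetical simplifications; SkillRL distills its skills from trajectories, and a practical retriever would more likely rank by embedding similarity.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    description: str
    task_type: str | None = None  # None marks a general, task-agnostic heuristic


@dataclass
class SkillBank:
    skills: list[Skill] = field(default_factory=list)

    def add(self, skill: Skill) -> None:
        self.skills.append(skill)

    @staticmethod
    def _overlap(a: str, b: str) -> int:
        """Crude relevance score: number of shared lowercase words."""
        return len(set(a.lower().split()) & set(b.lower().split()))

    def retrieve(self, task_type: str, query: str, k: int = 2) -> list[Skill]:
        """Dual retrieval: every general heuristic plus the k most relevant
        skills stored for this task type."""
        general = [s for s in self.skills if s.task_type is None]
        specific = [s for s in self.skills if s.task_type == task_type]
        specific.sort(key=lambda s: self._overlap(s.description, query), reverse=True)
        return general + specific[:k]


bank = SkillBank()
bank.add(Skill("verify", "check the goal state before finishing"))
bank.add(Skill("find-then-use", "find the object then use it on the target", "alfworld"))
bank.add(Skill("heat", "heat the object in the microwave", "alfworld"))
bank.add(Skill("filter-price", "filter results by price before buying", "webshop"))

picked = bank.retrieve("alfworld", "heat the potato in the microwave", k=1)
print([s.name for s in picked])
```

Storing one-line skill descriptions instead of full trajectories is what keeps the retrieved context short.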

4. InftyThink+ — Infinite-Horizon Reasoning via RL

Main Thesis: InftyThink+ addresses three problems of long chain-of-thought reasoning (quadratic compute cost, context-length limits, and the lost-in-the-middle effect) by training models to autonomously segment, summarize, and resume their reasoning.

Key Findings:

- Decomposes reasoning into iterations connected by self-generated summaries

- Two-stage training: a supervised cold start that teaches the iterate-and-summarize format, then trajectory-level GRPO

- 21.4-point accuracy gain on AIME24 (29.5% → 50.9%), versus 38.8% for vanilla long-CoT RL

- Adding an efficiency reward cuts token usage by 50% at a modest cost in accuracy

- Generalizes to GPQA Diamond and AIME25

Practical Takeaway: Teaching models when and how to summarize mid-reasoning dramatically improves both accuracy and inference speed. Paper
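The quadratic-cost argument can be checked with back-of-the-envelope arithmetic. The sketch below counts attention score computations under a KV cache (the t-th token in a context attends to t positions), ignores constants and the prompt, and compares one monolithic chain of thought with an iterate-summarize-resume scheme. The token budgets (32,000 reasoning tokens, 4,000-token segments, 500-token summaries) are made-up illustrative values, not figures from the paper.

```python
def attention_cost(prefix: int, new_tokens: int) -> int:
    """Attention score computations to generate `new_tokens` after a cached
    `prefix`: the sum of t for t = prefix+1 .. prefix+new_tokens."""
    return new_tokens * prefix + new_tokens * (new_tokens + 1) // 2


def monolithic_cost(reasoning_tokens: int) -> int:
    """One long chain of thought: each token attends to the whole history."""
    return attention_cost(0, reasoning_tokens)


def segmented_cost(reasoning_tokens: int, segment: int, summary: int) -> int:
    """Iterate-summarize-resume: each iteration starts a fresh context holding
    only the previous summary, reasons for `segment` tokens, then writes a
    `summary`-token summary for the next iteration."""
    if reasoning_tokens % segment:
        raise ValueError("toy model assumes segment divides reasoning_tokens")
    cost = 0
    for i in range(reasoning_tokens // segment):
        prefix = 0 if i == 0 else summary  # the first iteration has no prior summary
        cost += attention_cost(prefix, segment + summary)
    return cost


long_cot = monolithic_cost(32_000)
iterative = segmented_cost(32_000, segment=4_000, summary=500)
print(long_cot, iterative, round(long_cot / iterative, 2))
```

With these assumed numbers the monolithic trace does about 5.3 times more attention work. The gap grows with reasoning length, since the monolithic cost is quadratic in total tokens while the segmented cost grows linearly with the number of iterations.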

5. Agyn — Multi-Agent Software Engineering System

Main Thesis: Agyn models software engineering as an organizational process with specialized agents in distinct roles, achieving strong results without SWE-bench-specific tuning.

Key Findings:

- Four agents: manager, researcher, engineer, reviewer — each with role-specific tools and models

- Reasoning-heavy roles use larger models; implementation roles use smaller, code-specialized models

- Dynamic workflow: manager decides iteration cycles based on intermediate outcomes

- 72.2% task resolution on SWE-bench 500, outperforming single-agent baselines by 7.4%

Practical Takeaway: Organizational design and agent infrastructure may matter as much as model quality for autonomous software engineering. Paper
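The role split and manager-driven iteration can be sketched as a small orchestration skeleton. Everything here is hypothetical: the role-to-model table uses placeholder model names, the `run_*` functions fake what would be tool-using LLM agents, and the manager's decision is reduced to a fixed rule (iterate until the reviewer approves or the cycle budget runs out), whereas in Agyn the manager is itself an agent.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical tiering: reasoning-heavy roles map to a larger model,
# implementation to a smaller code-specialized one. Names are placeholders.
ROLE_MODELS = {
    "manager": "large-reasoning-model",
    "researcher": "large-reasoning-model",
    "engineer": "small-code-model",
    "reviewer": "large-reasoning-model",
}


@dataclass
class Review:
    approved: bool
    feedback: str


# Stand-ins for LLM-backed agents with their own tools.
def run_researcher(issue: str) -> str:
    return f"plan for {issue}"


def run_engineer(plan: str, feedback: str) -> str:
    return plan + (" +fix" if feedback else "")  # each round of feedback adds a fix


def run_reviewer(patch: str) -> Review:
    ok = patch.count("+fix") >= 2  # toy rule: approve after two rounds of fixes
    return Review(ok, "" if ok else "tests still failing")


def manage(issue: str, max_cycles: int = 5):
    """Manager loop: cycle engineer -> reviewer until approval or the budget runs out.
    Returns (patch, cycles_used, approved, call_log)."""
    log: list[tuple[str, str]] = []

    def call(role, fn, *args):
        log.append((role, ROLE_MODELS[role]))  # record which model tier serves the call
        return fn(*args)

    plan = call("researcher", run_researcher, issue)
    feedback, patch = "", plan
    for cycle in range(1, max_cycles + 1):
        patch = call("engineer", run_engineer, plan, feedback)
        review = call("reviewer", run_reviewer, patch)
        if review.approved:
            return patch, cycle, True, log
        feedback, plan = review.feedback, patch  # next attempt builds on this one
    return patch, max_cycles, False, log


patch, cycles, approved, log = manage("issue-42")
print(cycles, approved, log[:3])
```

The call log makes the tiering visible: researcher and reviewer calls go to the larger model, engineer calls to the smaller one.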

6. EchoJEPA — Cardiac Foundation Model

Main Thesis: EchoJEPA is a JEPA-style foundation model trained on 18 million echocardiograms that learns clinically meaningful cardiac representations by predicting in latent space rather than pixel space.

Key Findings:

- Trained on 18M echocardiograms from 300K patients; learns to disregard speckle noise and acoustic artifacts

- ~20% improvement in left ventricular ejection fraction estimation; ~17% in right ventricular systolic pressure estimation

- 79% view classification accuracy using only 1% of the labeled data (best baseline: 42% with all labels)

- Only 2% degradation under acoustic perturbations vs 17% for competitors

- Zero-shot performance on pediatric patients exceeds fine-tuned baselines

Practical Takeaway: Latent-space predictive learning at scale produces robust, label-efficient cardiac AI that generalizes across patient populations. Paper
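The core JEPA idea, predicting a target region's embedding from a context region's embedding instead of reconstructing its pixels, fits in a few lines. This is a toy, not EchoJEPA: the encoder is a hand-built matrix that averages neighbouring pixel pairs rather than a learned network, the same encoder serves as context and target encoder (in practice the target encoder is an exponential-moving-average copy, as in `ema_update`), and the "speckle" is a made-up alternating pattern. It only shows why a latent-space target can be insensitive to noise that a pixel-reconstruction loss would have to model.

```python
Matrix = list[list[float]]


def matvec(w: Matrix, x: list[float]) -> list[float]:
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def mse(a: list[float], b: list[float]) -> float:
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) / len(a)


def jepa_loss(context_enc: Matrix, target_enc: Matrix, predictor: Matrix,
              context_patch: list[float], target_patch: list[float]) -> float:
    """Predict the target region's embedding from the context region's embedding.
    The loss lives in latent space; no pixels are reconstructed. In a real
    trainer the target embedding is treated as a constant (stop-gradient)."""
    z_pred = matvec(predictor, matvec(context_enc, context_patch))
    z_target = matvec(target_enc, target_patch)
    return mse(z_pred, z_target)


def ema_update(target_enc: Matrix, context_enc: Matrix, momentum: float = 0.99) -> Matrix:
    """Target-encoder weights follow an exponential moving average of the context encoder."""
    return [[momentum * t + (1 - momentum) * c for t, c in zip(t_row, c_row)]
            for t_row, c_row in zip(target_enc, context_enc)]


# Hand-built encoder that averages neighbouring pixel pairs (not learned).
enc = [[0.5, 0.5, 0.0, 0.0], [0.0, 0.0, 0.5, 0.5]]
predictor = [[3.0, 0.0], [0.0, 2.0]]  # maps context embedding [1, 2] to [3, 4]
context = [1.0, 1.0, 2.0, 2.0]
clean_target = [3.0, 3.0, 4.0, 4.0]
speckled_target = [3.3, 2.7, 4.3, 3.7]  # zero-mean alternating "speckle"

print("pixel-space error from speckle:", mse(clean_target, speckled_target))
print("latent loss, clean target:", jepa_loss(enc, enc, predictor, context, clean_target))
print("latent loss, speckled target:", jepa_loss(enc, enc, predictor, context, speckled_target))
```

The speckle adds a pixel-space error of about 0.09 but leaves the latent loss at (numerically) zero, because the averaging encoder cancels the alternating noise.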

7. AdaptEvolve — Confidence-Driven Model Routing for Agentic Systems

Main Thesis: AdaptEvolve reduces the cost of iterative LLM-based refinement loops by dynamically routing easy sub-problems to smaller models and hard decisions to frontier models based on intrinsic generation confidence.

Key Findings:

- Monitors real-time generation confidence scores — no external controller needed

- Cuts inference costs by ~38% while retaining ~97.5% of upper-bound accuracy

- Model-agnostic and requires no task-specific tuning

- Makes evolutionary agent workflows viable for production deployment

Practical Takeaway: Confidence-based routing is a practical, plug-in efficiency mechanism for any iterative agentic pipeline. Paper
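A minimal sketch of confidence-based routing follows. The confidence signal (the geometric-mean token probability, i.e. `exp` of the mean log-probability), the 0.8 threshold, and the two model stand-ins are assumptions for illustration; AdaptEvolve's exact confidence measure and routing rule may differ.

```python
import math
from typing import Callable

Generation = tuple[str, list[float]]  # (text, per-token log-probabilities)


def confidence(logprobs: list[float]) -> float:
    """Geometric-mean token probability, i.e. exp(mean log-probability)."""
    return math.exp(sum(logprobs) / len(logprobs))


def route(prompt: str,
          small: Callable[[str], Generation],
          large: Callable[[str], Generation],
          threshold: float = 0.8) -> tuple[str, str]:
    """Try the small model first and escalate only if its own confidence is low.
    Returns (answer, which_model)."""
    text, logprobs = small(prompt)
    if confidence(logprobs) >= threshold:
        return text, "small"
    text, _ = large(prompt)
    return text, "large"


# Hypothetical stand-ins for a small model and a frontier model.
def small_model(prompt: str) -> Generation:
    if "easy" in prompt:
        return "x = 2", [math.log(0.95)] * 4
    return "x = ?", [math.log(0.5), math.log(0.9), math.log(0.4)]


def large_model(prompt: str) -> Generation:
    return "x = 7", [math.log(0.9)] * 4


for task in ["easy refinement", "hard refinement"]:
    print(task, route(task, small_model, large_model))
```

In an evolutionary refinement loop, `route` would wrap each candidate-generation call, so the frontier model is only paid for on the steps where the small model is unsure.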

8. Gaia2 — Dynamic Agent Benchmark from Meta FAIR

Main Thesis: Gaia2 moves beyond static benchmarks with environments that change independently of agent actions, testing how agents handle time pressure, uncertainty, and multi-agent coordination.

Key Findings:

- GPT-5 leads at 42% pass@1 but struggles with time-constrained tasks

- Kimi-K2 leads open-source models at 21%

- Built on open-source Agents Research Environments (ARE) with action-level verifiers

- Marks a shift from static toward dynamic agentic evaluation

Practical Takeaway: Current frontier models still struggle significantly with dynamic, time-pressured environments — a major open research challenge. Paper
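The two ingredients named above, an environment that moves on its own clock and action-level verification, can be sketched as follows. ARE's actual interfaces are not reproduced here: `Action`, `Expected`, `fired_events`, `verify`, and the calendar scenario are hypothetical, and `verify` uses a simplified, strictly ordered matching rule.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Action:
    name: str
    args: dict
    time: float  # simulated seconds since the scenario started


@dataclass
class Expected:
    name: str
    args: dict = field(default_factory=dict)
    deadline: float | None = None  # latest acceptable time, if any


def fired_events(schedule: list[tuple[float, str]], now: float) -> list[str]:
    """The environment evolves on its own clock: events fire whether or not the agent acts."""
    return [message for t, message in schedule if t <= now]


def verify(actions: list[Action], expected: list[Expected]) -> tuple[bool, str]:
    """Action-level check: every expected action must appear, in order, with
    matching arguments and (if it has one) before its deadline."""
    i = 0
    for exp in expected:
        while i < len(actions) and (actions[i].name, actions[i].args) != (exp.name, exp.args):
            i += 1
        if i == len(actions):
            return False, f"missing {exp.name}"
        if exp.deadline is not None and actions[i].time > exp.deadline:
            return False, f"{exp.name} too late"
        i += 1
    return True, "ok"


schedule = [(30.0, "meeting moved to 15:00")]
expected = [Expected("read_inbox"),
            Expected("update_calendar", {"time": "15:00"}, deadline=60.0)]
quick_agent = [Action("read_inbox", {}, 31.0), Action("update_calendar", {"time": "15:00"}, 45.0)]
slow_agent = [Action("read_inbox", {}, 31.0), Action("update_calendar", {"time": "15:00"}, 90.0)]

print(fired_events(schedule, 10.0), fired_events(schedule, 31.0))
print(verify(quick_agent, expected), verify(slow_agent, expected))
```

Both agents take the same actions; only the one that reacts to the environment's event before the deadline passes.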

9. AgentArk — Distilling Multi-Agent Debate into a Single LLM

Main Thesis: AgentArk transfers the reasoning and self-correction abilities of multi-agent debate systems into a single model at training time, achieving near-multi-agent performance at a fraction of the cost.

Key Findings:

- Three distillation strategies: reasoning-enhanced SFT, trajectory-based augmentation, process-aware distillation with a process reward model

- Average 4.8% improvement over single-agent baselines across math and reasoning benchmarks

- Cross-family distillation (e.g., Qwen3-32B → LLaMA-3-8B) yields the largest gains

- Approaches full multi-agent performance at single-model inference cost

Practical Takeaway: You don't need to run multiple agents at inference time — their reasoning capabilities can be baked into a single smaller model. Paper
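Of the three strategies, PRM-guided distillation is the easiest to picture in code. The sketch below is one plausible reading of "process-aware distillation", not AgentArk's recipe: the `DebateTrace` fields, the hard filtering threshold `min_step_score`, and the mean-score weighting are all assumptions, and the paper may instead feed process-reward scores directly into the training loss.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class DebateTrace:
    question: str
    steps: list[str]          # consolidated reasoning steps from the multi-agent debate
    step_scores: list[float]  # hypothetical process-reward-model score per step, in [0, 1]
    answer: str
    correct: bool


def build_distillation_set(traces: list[DebateTrace], min_step_score: float = 0.5) -> list[dict]:
    """Turn debate traces into single-model SFT examples. A trace is kept only if
    it reached the right answer and no step scored below `min_step_score`; each
    kept example is weighted by its mean step score."""
    examples = []
    for t in traces:
        if not t.correct or min(t.step_scores) < min_step_score:
            continue  # drop wrong answers and right answers reached via a bad step
        target = "\n".join(t.steps) + f"\nAnswer: {t.answer}"
        examples.append({"prompt": t.question, "target": target, "weight": mean(t.step_scores)})
    return examples


traces = [
    DebateTrace("2+2*3?", ["Multiply first: 2*3=6", "Add: 2+6=8"], [0.9, 0.8], "8", True),
    DebateTrace("2+2*3?", ["Add first: 2+2=4", "Guess 8"], [0.9, 0.2], "8", True),
    DebateTrace("2+2*3?", ["Add first: 2+2=4", "Multiply: 4*3=12"], [0.3, 0.9], "12", False),
]
data = build_distillation_set(traces)
print(len(data), round(data[0]["weight"], 2))
print(data[0]["target"])
```

Only the first trace survives: the second reaches the right answer through a low-scoring step, and the third is wrong.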

10. AgentSkiller — Scaling Generalist Tool-Use Agents via Data Quality

Main Thesis: AgentSkiller demonstrates that semantically integrated, high-quality synthetic training data matters more than parameter count for building strong tool-use agents.

Key Findings:

- Produces 11K high-quality synthetic trajectories across diverse tool-use scenarios

- 14B model beats OpenAI's o3 on τ²-bench (79.1% vs 68.4%)

- 4B variant outperforms 70B and 235B models

- Semantic integration across domains is the key differentiator

Practical Takeaway: Invest in data quality and semantic diversity — smaller, well-trained models can outperform much larger ones on agentic tool-use tasks. Paper

Overall Themes This Week

- Automation of agent design — from memory (ALMA) to skills (SkillRL) to multi-agent reasoning (AgentArk)

- Efficiency with minimal quality loss — AdaptEvolve, LLaDA 2.1, and InftyThink+ all offer speed/cost-versus-accuracy knobs

- Data quality over scale — AgentSkiller challenges the parameter-scaling assumption

- Medical AI at foundation scale — EchoJEPA sets a new bar for label-efficient clinical models

- Dynamic benchmarking — Gaia2 pushes evaluation beyond static tasks toward real-world agentic challenges