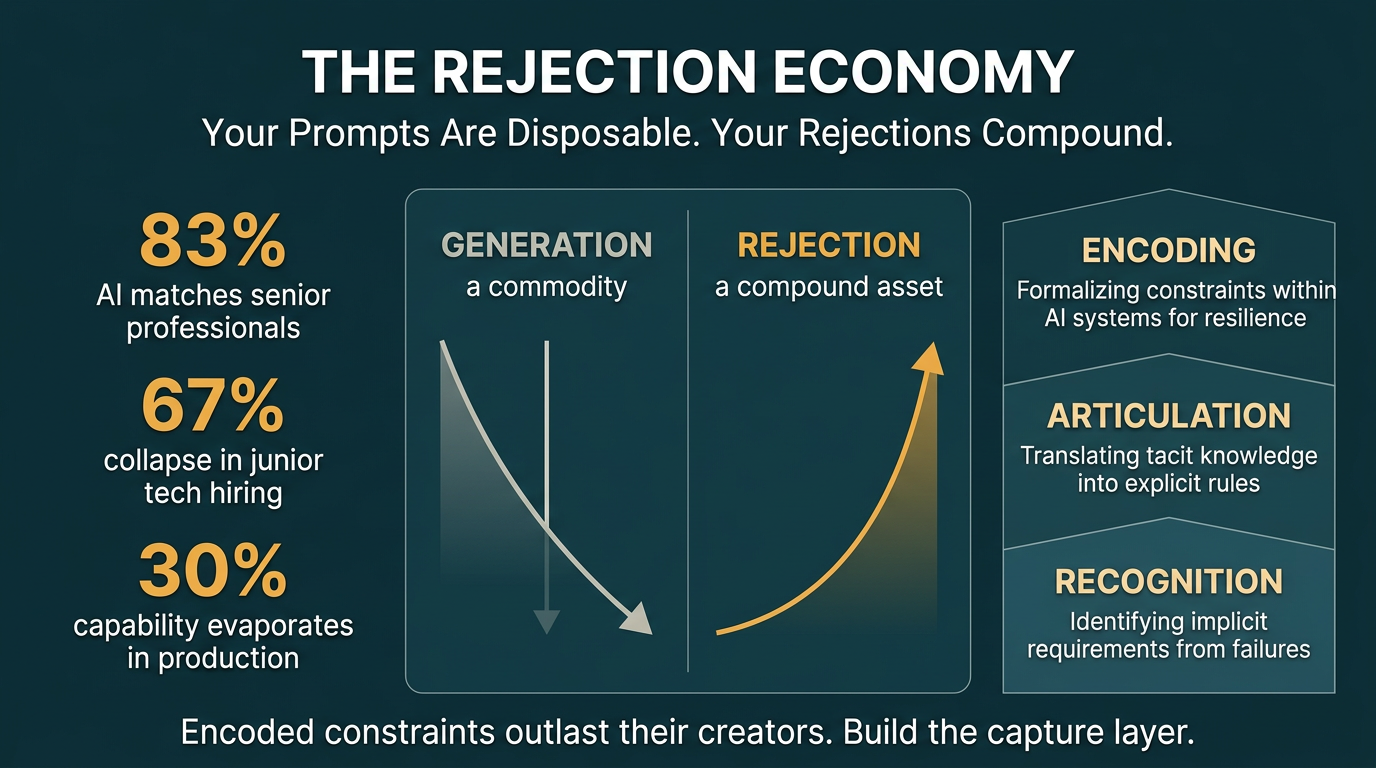

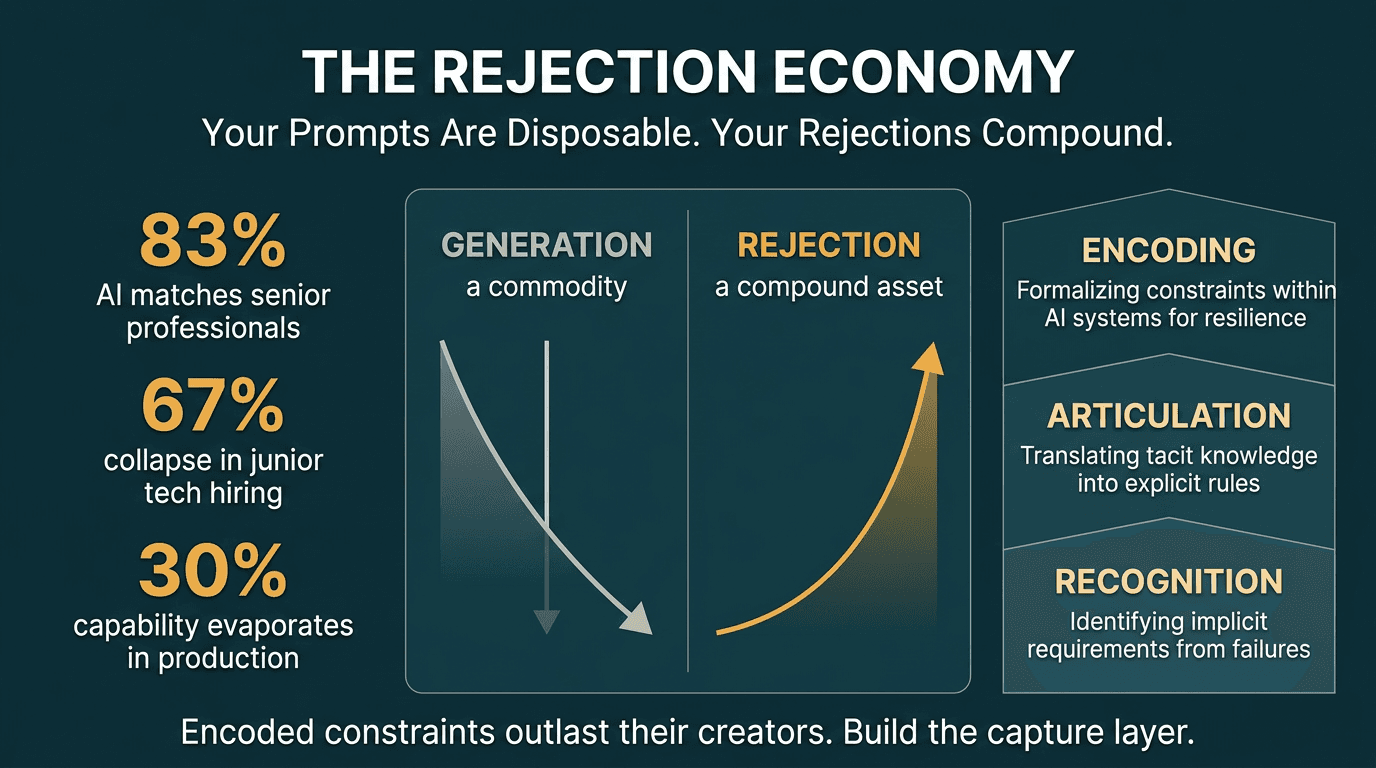

Your prompts are disposable. Your rejections compound. Here's the skill nobody is developing (+ the guide kit to start)

Original article: Nate's Substack

Published by Nate B. Jones on March 11, 2026. Processed: March 11, 2026.

Summary

Main Thesis

The most valuable AI skill in 2026 is not prompting — it's rejection. When domain experts look at AI output, identify what's wrong, and articulate why, they create encoded constraints that compound into lasting competitive advantage. Generation is now a commodity; taste, built through thousands of expert rejections, is the durable moat.

Key Data Points & Findings

- 83% — AI now matches or beats professionals averaging 14 years of experience in 83% of head-to-head knowledge-work comparisons (OpenAI GDPval benchmark), at 11x the speed and under 1% of the cost.

- 30%+ — More than a third of demonstrated AI capability evaporates when agents move from lab benchmarks to production environments.

- 67% — Entry-level tech job postings collapsed roughly 67% in the two years following ChatGPT's release, and Google and Meta are hiring about 50% fewer new graduates than in 2021.

- 8–10% — A Harvard study of 285,000 firms found that junior employment drops 8–10% within six quarters of GenAI adoption, while senior employment barely changes.

- 46% — UK tech graduate roles fell 46% in 2024.

The Three Dimensions of Rejection as a Competency

Recognition — Detecting that something is wrong. This is domain expertise, and it cannot be shortcut. Crucially, AI multiplies recognition: a domain expert with strong recognition can now evaluate 10x the output she could before, but only within her area of expertise. Outside it, AI becomes a "confidence multiplier," which is worse.

Articulation — Explaining why something is wrong precisely enough to produce a reusable constraint. "This isn't right" is a rejection. "You can't treat a DSCR covenant the same as a minimum net worth requirement — they have completely different monitoring triggers" is a constraint. This is a learnable skill almost no organization is deliberately teaching.

Encoding — Making the constraint persist beyond the moment of rejection. This is where everything breaks down today. Articulated constraints live in email threads and Slack messages. They evaporate. The same rejection happens again tomorrow.

The Compounding Flywheel

Every properly encoded rejection reduces the expert time needed for future verification. It works as a flywheel:

AI generates → expert rejects → constraint encoded → library grows → next round costs less expert attention → repeat

Epic Systems is cited as the canonical example: 45 years of encoding clinical workflow constraints from thousands of hospitals resulted in 305 million patient records, near-zero churn, and structural switching costs. The moat isn't the software — it's the encoded judgment about what the software must get right, built rejection by rejection over decades.

The Encoding Gap (The Product Opportunity)

Every AI tool is built for generation. No one has built the capture layer for institutional taste. The product that would:

- Watch for rejection moments inside conversations

- Extract the constraint automatically

- Persist it durably

- Surface it on relevant future tasks

...doesn't exist yet. Nate calls this "the most important unsexy opportunity in AI."
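The four capabilities listed above (watch, extract, persist, surface) can be sketched as a tiny pipeline. This is a minimal illustration under loud assumptions, not a description of any existing product: the cue phrases, the word-overlap retrieval, the JSON file store, and every function name here are hypothetical stand-ins chosen for brevity.

```python
import json
import re
from dataclasses import asdict, dataclass, field
from pathlib import Path

# Hypothetical cue phrases; keyword matching is only a stand-in for real detection.
REJECTION_CUES = re.compile(
    r"\b(not quite|too \w+|less \w+|don't|redo|rewrite)\b", re.IGNORECASE
)
WORD = re.compile(r"[A-Za-z]+")


@dataclass
class Constraint:
    domain: str
    text: str                                    # the expert's own explanation
    tags: set[str] = field(default_factory=set)  # words used for retrieval


def is_rejection(message: str) -> bool:
    """Watch: flag a user message that looks like a rejection."""
    return REJECTION_CUES.search(message) is not None


def extract_constraint(message: str, domain: str) -> Constraint:
    """Extract: keep the explanation verbatim, tagged with its longer words."""
    tags = {w.lower() for w in WORD.findall(message) if len(w) > 3}
    return Constraint(domain=domain, text=message.strip(), tags=tags)


def persist(constraints: list[Constraint], path: Path) -> None:
    """Persist: write constraints to a JSON file (sets become sorted lists)."""
    rows = [{**asdict(c), "tags": sorted(c.tags)} for c in constraints]
    path.write_text(json.dumps(rows, indent=2))


def load(path: Path) -> list[Constraint]:
    """Read constraints back from the JSON file written by persist()."""
    rows = json.loads(path.read_text())
    return [Constraint(r["domain"], r["text"], set(r["tags"])) for r in rows]


def surface(constraints: list[Constraint], task: str, domain: str) -> list[str]:
    """Surface: return same-domain constraints sharing a word with the task."""
    task_words = {w.lower() for w in WORD.findall(task)}
    return [c.text for c in constraints if c.domain == domain and c.tags & task_words]
```

For example, a credit analyst's message "Not quite: a DSCR covenant has different monitoring triggers than a net worth covenant." would be flagged, stored, and later surfaced for a new task such as "Draft the covenant summary". Word overlap is the weakest link here; a real capture layer would likely need semantic matching and a human check on what gets extracted.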

The Seed Corn Problem

Eliminating junior hires eliminates the pipeline that builds recognition — the first and most critical rejection skill. Recognition requires years of reviewing real work (two thousand deals, hundreds of drafts). AI cannot compress that development. AWS CEO Matt Garman: "How's that going to work when ten years in the future you have no one that has learned anything?"

Today's juniors are tomorrow's domain experts whose taste the entire AI verification system depends on. A 67% hiring collapse means 67% fewer people developing the recognition skills the AI economy runs on.

Practical Takeaways by Role

Executives: The competitive moat is not which AI vendor you choose — models are commoditized. It's the depth and durability of your organization's encoded taste. Audit where your domain experts' rejections are evaporating. Treat encoded domain judgment as an asset class.

Managers: Create space for articulation. When someone rejects AI output, ask them to say why, and make that explanation visible. A team that articulates rejections builds shared quality standards that persist across projects and personnel changes.

Individual Contributors: Your most valuable professional development isn't the newest AI tool — tools change quarterly. Domain expertise compounds over years. Deepen your recognition. Practice articulating exactly why something is wrong in language precise enough that a system could act on it.

Product Builders: The generation side is a commodity. The evaluation side — infrastructure that captures human taste and makes it persistent, compounding, and organizationally durable — is where the next great company gets built.

Prompt Kit

Source: Companion Prompt Kit — "What Your AI Rejections Reveal"

Your taste is already in your conversation history. Every time you told an AI "that's not quite right," "make it less X," "don't do that," or rewrote its output entirely — you were encoding a preference. You just never captured it.

These five prompts surface those patterns, name them, and turn them into reusable constraints you actually own.

Works with any AI. No special setup required.

Prompt 1: Open the Audit

I want to identify my taste — the standards and preferences I hold that most people never articulate. To do that, I need to surface what I've rejected or corrected in AI output over time.

Before we start, ask me:

- What kind of work do I primarily use AI for? (writing, coding, strategy, communication, research — or a mix)

- Are there one or two domains where I use AI most heavily?

- Do I have a rough sense of anything I correct AI on repeatedly, even if I can't name why?

Ask me these questions and wait for my answers before doing anything else.

Prompt 2: Mine the History

Now let's find the actual rejection moments. I want you to do two things:

First, check whether you have the ability to search my conversation history or memory. If you do, search for moments where I: corrected your output, said something like "not quite," "too X," "less Y," rewrote something you gave me, or asked you to redo something. Look across all topics and time periods. List what you find — the specific corrections, not just summaries.

If you can't access my conversation history, tell me explicitly — something like "I don't have access to your past conversations, so let's do this through reflection instead." Then ask me: In the last few weeks, what's one thing an AI gave you that you changed or rejected? Walk me through what was wrong with it and what you changed it to.

If you did find history results, still ask me the reflection question too — there may be patterns you missed.

Gather both sources before moving on.

Prompt 3: Find the Pattern

Now look across everything we've surfaced. I want you to identify the underlying standards — not just the individual corrections, but what they have in common.

For each pattern you see, give me:

- A short name for the preference (3–6 words)

- What I reject (the failure mode)

- What I actually want (the positive version)

- One example from what we found

Try to find at least 3 patterns, no more than 8. These should feel like they describe me specifically — not generic AI advice. If something shows up once and seems like a one-off, leave it out. If something shows up in multiple corrections across different topics, that's a real preference.

Show me the patterns and ask if any feel off or missing before we move on.
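Prompt 3's selection rule (drop one-offs, keep what recurs across topics, aim for 3 to 8 patterns) can be expressed as a small filter. This is a sketch that assumes each correction has already been labeled with a pattern name and a topic; `Correction` and `select_patterns` are hypothetical names, and reading "multiple corrections across different topics" as "seen in at least two distinct topics" is an interpretation, not part of the kit.

```python
from collections import defaultdict
from typing import NamedTuple


class Correction(NamedTuple):
    pattern: str  # hypothetical label, e.g. "cut the preamble"
    topic: str    # what the conversation was about


def select_patterns(corrections: list[Correction],
                    min_patterns: int = 3, max_patterns: int = 8) -> list[str]:
    """Keep patterns seen in at least two distinct topics, rank them by how
    many corrections support them, and cap the list at max_patterns."""
    topics_by_pattern: dict[str, set[str]] = defaultdict(set)
    counts: dict[str, int] = defaultdict(int)
    for c in corrections:
        topics_by_pattern[c.pattern].add(c.topic)
        counts[c.pattern] += 1
    # One-offs and single-topic habits are dropped.
    recurring = [p for p, topics in topics_by_pattern.items() if len(topics) >= 2]
    recurring.sort(key=lambda p: counts[p], reverse=True)
    if len(recurring) < min_patterns:
        print(f"Only {len(recurring)} recurring pattern(s); gather more corrections.")
    return recurring[:max_patterns]
```

The warning rather than an error mirrors the prompt's "try to find at least 3": too few patterns is a signal to go back to Prompt 2, not a failure.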

Prompt 4: Write the Constraints

Now encode each pattern as a reusable constraint — something I could paste into a system prompt, share with a teammate, or add to a personal preferences file.

For each one, write it in this format:

[Preference Name]

Domain: [what kind of work this applies to]

Reject: [what to avoid — specific and observable]

Want: [what to do instead — specific and observable]

Type: [one of: domain rule / quality standard / business logic / formatting]

Make the "Reject" and "Want" fields concrete enough that a different AI — one that has never talked to me — would know exactly what to do. No vague adjectives. If you have to use a word like "clear" or "concise," follow it with an example of what clear or concise looks like for me specifically.
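The Prompt 4 format maps naturally onto a small record type, which makes constraints easy to store, share, and paste into a system prompt. The sketch below is an illustration, not part of the kit: `TasteConstraint`, `ConstraintType`, and the vague-word check are hypothetical, and "followed by an example" is crudely approximated as the field containing "e.g." or "for example".

```python
import re
from dataclasses import dataclass
from enum import Enum


class ConstraintType(Enum):
    DOMAIN_RULE = "domain rule"
    QUALITY_STANDARD = "quality standard"
    BUSINESS_LOGIC = "business logic"
    FORMATTING = "formatting"


# Hypothetical list of adjectives the prompt warns against using bare.
VAGUE_WORDS = {"clear", "concise", "clean", "simple", "better"}


@dataclass(frozen=True)
class TasteConstraint:
    name: str
    domain: str
    reject: str
    want: str
    kind: ConstraintType

    def render(self) -> str:
        """Render in the [Preference Name] / Domain / Reject / Want / Type layout."""
        return "\n".join([
            f"[{self.name}]",
            f"Domain: {self.domain}",
            f"Reject: {self.reject}",
            f"Want: {self.want}",
            f"Type: {self.kind.value}",
        ])

    def vague_fields(self) -> list[str]:
        """Return the Reject/Want fields that use a vague adjective with no example."""
        flagged = []
        for label, text in (("Reject", self.reject), ("Want", self.want)):
            lowered = text.lower()
            words = set(re.findall(r"[a-z]+", lowered))
            has_example = "e.g." in lowered or "for example" in lowered
            if words & VAGUE_WORDS and not has_example:
                flagged.append(label)
        return flagged
```

A field like "Be concise" gets flagged, while "Be concise, e.g. three bullets max" passes, which is the distinction the prompt asks the AI to enforce.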

Prompt 5: Build the Taste Profile

Now pull everything together into a single taste profile — a document I can save, paste into system prompts, or share with anyone who needs to work in my voice or to my standards.

Format it as:

[My Name]'s Taste Profile

Last updated: [today's date]

Context: [2–3 sentences on my work and where I use AI most]

Core Preferences (one section per constraint from Prompt 4)

How to Use This

A 3–4 sentence note on how someone (or an AI) should apply this profile — when to invoke it, what it covers, what it doesn't.

After you write it, ask me: Is there anything here that feels wrong? Anything important that's missing? I want this to feel like it actually describes me, not a generic version of someone who uses AI.

Infographics