🥇Top AI Papers of the Week (March 9 - March 15)

Original article on Elvis Saravia's AI Newsletter

Processed: 2026-03-16

Summary

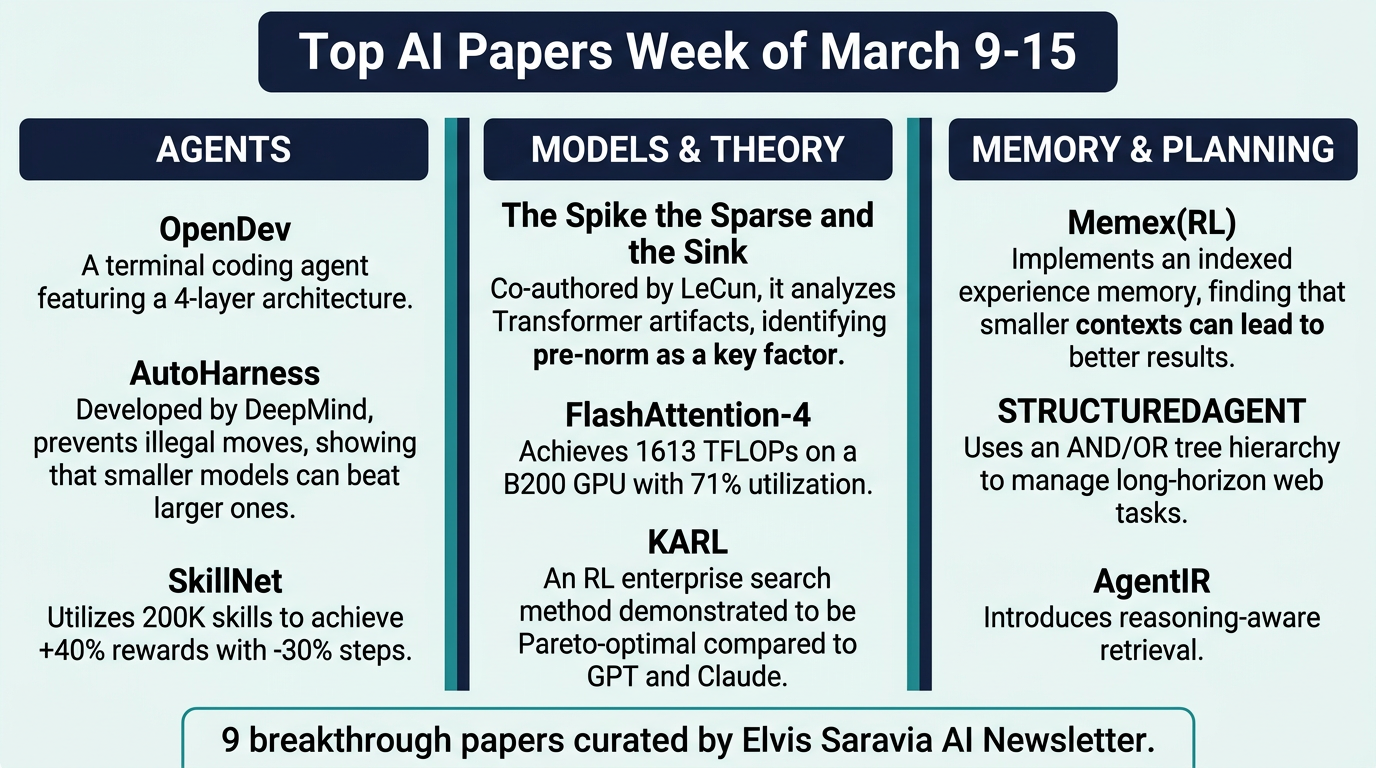

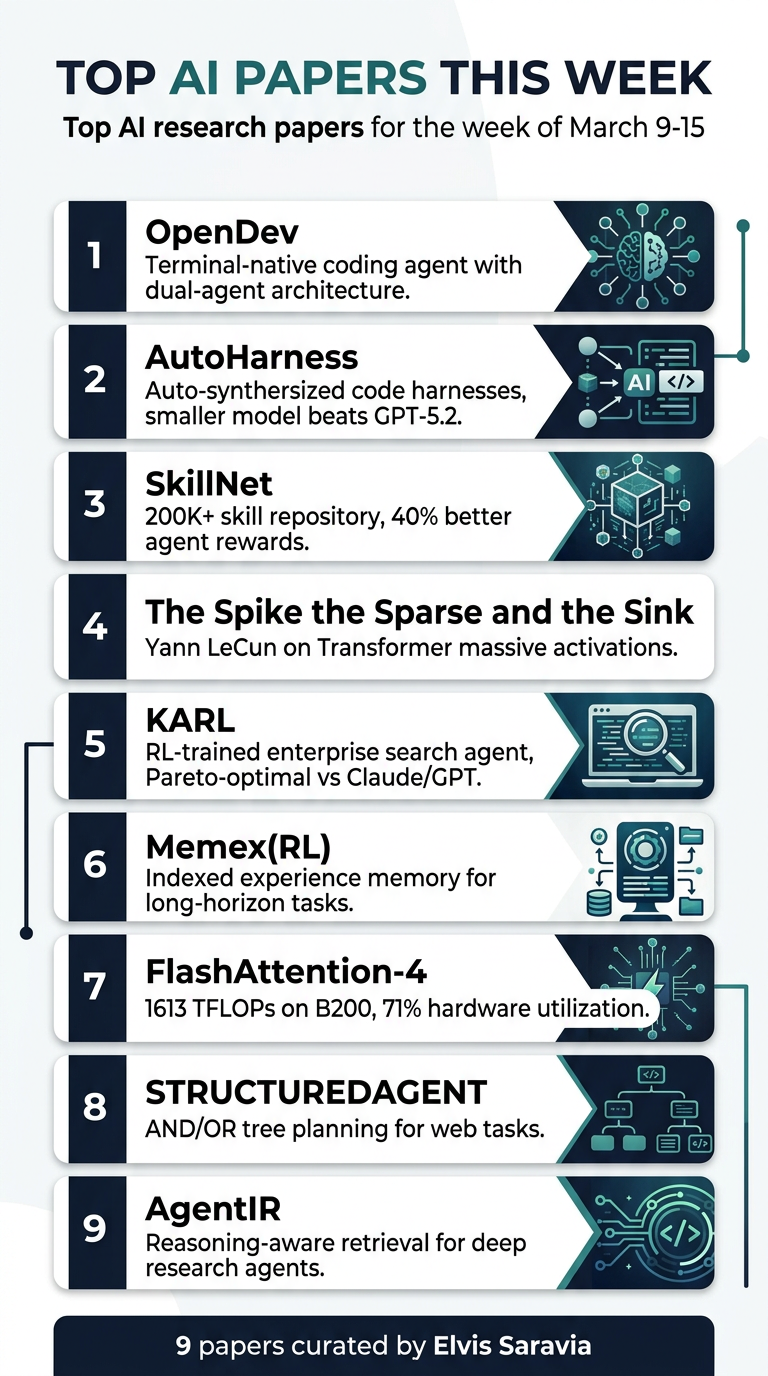

Elvis Saravia's weekly roundup of the top AI research papers, March 9–15. This week's 9 papers span terminal-native coding agents, automated harness engineering, large-scale skill repositories, Transformer internals, RL-trained search agents, long-horizon memory, next-gen attention kernels, hierarchical web planning, and reasoning-aware retrieval.

1. OpenDev

Terminal-native coding agents — an open-source, command-line coding agent with an 81-page technical report covering scaffolding, harness design, and context engineering.

- Dual-agent architecture: Separates planning from execution via compound AI with workload-specialized model routing. Multiple sub-agents independently bind to user-configured LLMs.

- Adaptive context compaction: Lazy tool discovery and adaptive methods reduce older observations to keep working memory lean as task complexity grows.

- Automated project memory: Event-driven reminders prevent instruction fade-out across sessions.

- Four-layer architecture: Agent reasoning → context engineering → tooling → persistence. Modular and independently evolvable.

2. AutoHarness

Google DeepMind — automatic synthesis of code harnesses that prevent LLM agents from making illegal actions.

- The insight: In Kaggle's GameArena chess competition, 78% of Gemini-2.5-Flash losses came from illegal moves, not poor strategy.

- Auto harness synthesis: Gemini-2.5-Flash generates a constraint harness through iterative refinement using environment feedback.

- Smaller beats larger: The harnessed Gemini-2.5-Flash outperforms Gemini-2.5-Pro and GPT-5.2-High on 16 TextArena single-player games.

- Complete illegal move prevention across 145 TextArena games — single and two-player.

- Key reframe: Agent improvement = harness engineering, not just model scaling.

3. SkillNet

Open infrastructure for creating, evaluating, and organizing AI skills at scale.

- Unified skill ontology: Structured from code libraries, prompt templates, and tool compositions with rich relational connections.

- 5-dimension evaluation: Safety, Completeness, Executability, Maintainability, Cost-awareness.

- 200,000+ skills in the repository with a Python toolkit for programmatic access.

- Results: +40% average rewards, −30% execution steps across ALFWorld, WebShop, and ScienceWorld.

4. The Spike, the Sparse and the Sink

Yann LeCun + NYU collaborators — dissecting massive activations and attention sinks in Transformer LMs.

- Massive activations operate globally, inducing near-constant hidden representations that function as implicit model parameters.

- Attention sinks operate locally, biasing individual heads toward short-range dependencies.

- Pre-norm is the culprit: The pre-norm configuration common in modern Transformers is the key architectural element enabling co-occurrence of these phenomena.

- Practical impact: Direct consequences for model compression, quantization, and KV-cache optimization — many efficiency techniques fail when they disrupt these patterns.

- Key finding: These phenomena are design-dependent artifacts, not fundamental requirements.

5. KARL

Databricks — RL-trained enterprise search agent achieving state-of-the-art across diverse hard-to-verify agentic search tasks. Introduces KARLBench spanning 6 search domains.

- OAPL post-training paradigm: Iterative large-batch off-policy RL, robust to trainer/inference engine discrepancies without clipped importance weighting.

- Multi-task heterogeneous training: Constraint-driven entity search, cross-document synthesis, tabular reasoning, entity retrieval, procedural reasoning, fact aggregation.

- Pareto-optimal: KARL outperforms Claude 4.6 and GPT 5.2 on KARLBench across cost-quality and latency-quality tradeoffs.

- Scores: KARL-BCP hits 59.6 on BrowseComp-Plus (70.4 with value-guided search); KARL-TREC reaches 85.0 on TREC-Biogen.

6. Memex(RL)

Indexed experience memory for scaling agent capability on long-horizon tasks.

- Indexed memory: Compact working context with concise structured summaries + stable indices; full-fidelity interactions stored externally. Agent decides what to summarize, archive, index, and retrieve.

- RL-optimized memory ops: MemexRL optimizes both write and read behaviors with reward shaping under a context budget — agents learn to manage their own memory strategically.

- Bounded retrieval complexity: Theoretical guarantees on decision quality with manageable computational load as history grows.

- Result: Higher task success on long-horizon tasks with significantly smaller working context than baselines. Less context, used intelligently, beats brute-force expansion.

7. FlashAttention-4

Hardware-algorithm co-design for B200 and GB200 GPUs (Blackwell architecture).

- 1613 TFLOPs/s at 71% hardware utilization on B200 with BF16.

- 1.3x speedup over cuDNN 9.13; 2.7x over Triton.

- Asymmetric scaling solutions: Redesigned pipelines exploit fully asynchronous matrix multiply, software-emulated exponential/conditional softmax rescaling, tensor memory to reduce shared memory traffic.

- Python-native (CuTe-DSL): 20–30x faster compile times vs. C++ template approaches.

- Key lesson: Next-gen GPU architectures demand fundamentally new kernel designs — Hopper techniques leave significant performance on the table on Blackwell.

8. STRUCTUREDAGENT

Hierarchical planning for long-horizon web tasks using dynamic AND/OR trees.

- Planning tree construction/maintenance separated from LLM invocation — LLM only handles local operations (node expansion, repair).

- Structured memory module tracks candidate solutions for better constraint satisfaction.

- Improved performance over standard LLM-based web agents on WebVoyager, WebArena, and custom shopping benchmarks.

- Added benefit: interpretable hierarchical plans for easier debugging and human intervention.

9. AgentIR

Reasoning-aware retrieval for deep research agents.

- Deep research agents generate explicit reasoning before every search call — existing retrievers ignore this rich intent signal entirely.

- AgentIR jointly embeds the agent's reasoning trace alongside its query.

- AgentIR-4B achieves 68% accuracy with Tongyi-DeepResearch vs. 50% with conventional embedding models twice its size and 37% with BM25.

Infographics