Top AI Papers of the Week (Feb 23 – Mar 1, 2026)

A roundup of the most impactful AI research papers from Elvis Saravia's weekly newsletter, spanning reasoning efficiency, agent infrastructure, algorithm discovery, and more.

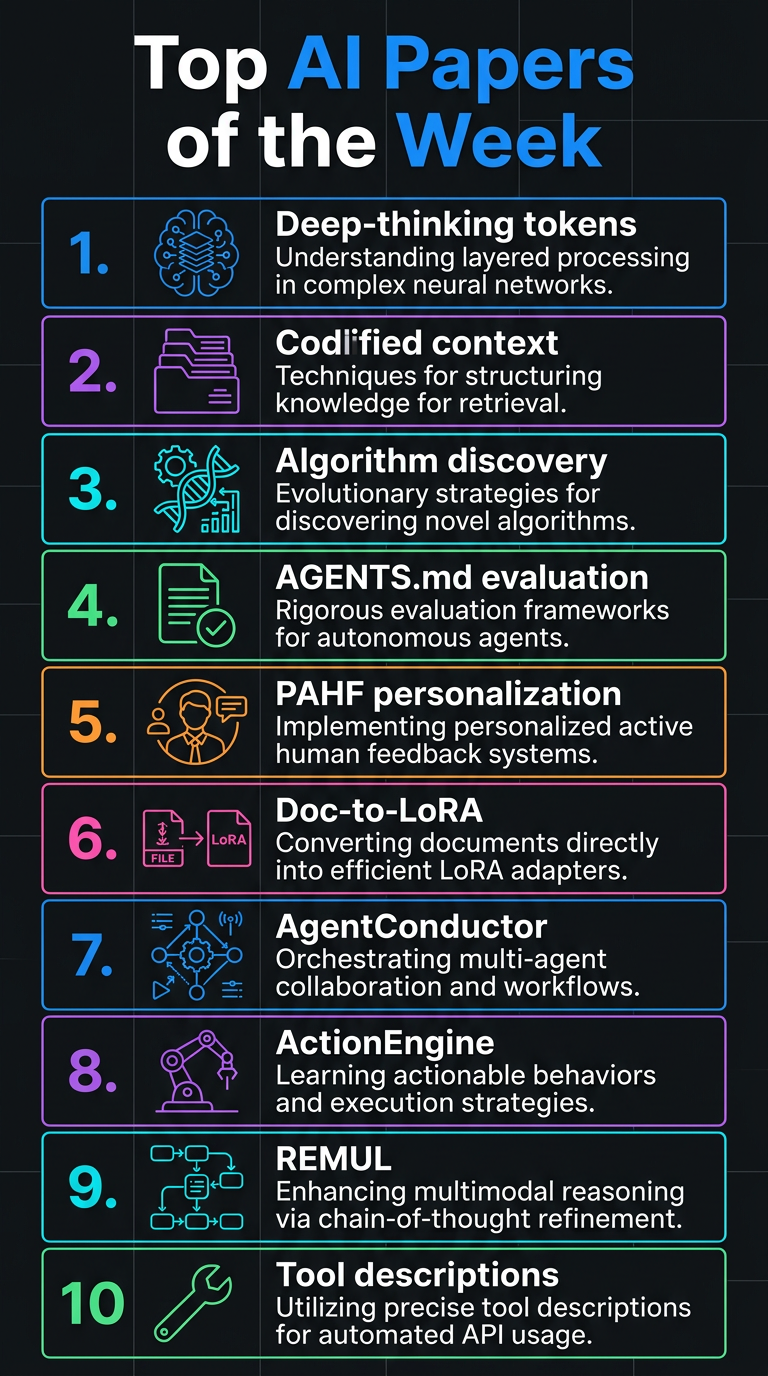

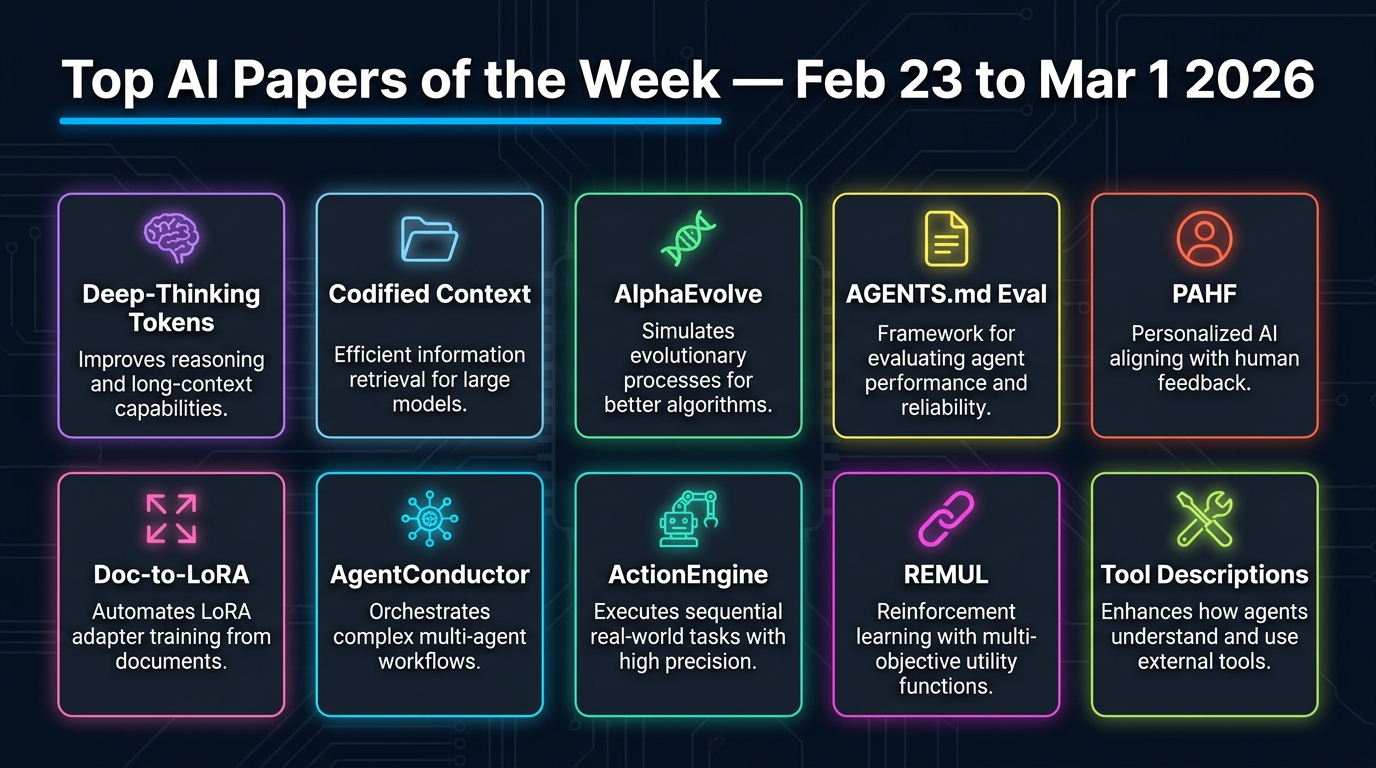

1. Deep-Thinking Tokens

Google researchers challenge the assumption that longer outputs mean better reasoning. They introduce deep-thinking tokens — tokens where internal model predictions shift significantly across layers before stabilising — measured via Jensen-Shannon divergence between intermediate and final layer distributions. A token qualifies as "deep-thinking" if its prediction only stabilises in the final 15% of layers.

- Raw token count negatively correlates with accuracy (r = -0.59)

- Deep-thinking ratio shows a positive correlation (r = 0.683)

- The Think@n test-time scaling strategy favours samples with a high deep-thinking ratio, matching or exceeding self-consistency performance while cutting inference cost by roughly 50%

- Validated on AIME 24/25, HMMT 25, and GPQA-diamond with GPT-OSS, DeepSeek-R1, and Qwen3

Takeaway: Generate tokens that require deeper internal computation, not just more tokens.
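To make the metric concrete, here is a minimal sketch of how a deep-thinking ratio could be computed from per-layer next-token distributions, followed by a Think@n-style vote. It is not the paper's implementation. The JSD `threshold` of 0.1, the `keep` share of 0.5, and all function names are assumptions chosen for illustration; only the "final 15% of layers" rule and the use of Jensen-Shannon divergence come from the summary above.

```python
from collections import Counter
import numpy as np

def js_divergence(p, q, eps=1e-12):
    """Jensen-Shannon divergence (natural log) between two probability vectors."""
    p = np.asarray(p, dtype=float) + eps
    q = np.asarray(q, dtype=float) + eps
    p, q = p / p.sum(), q / q.sum()
    m = 0.5 * (p + q)
    kl_pm = float(np.sum(p * np.log(p / m)))
    kl_qm = float(np.sum(q * np.log(q / m)))
    return 0.5 * (kl_pm + kl_qm)

def settle_layer(layer_dists, threshold=0.1):
    """Earliest layer from which every later layer stays within `threshold` of the final layer."""
    final = layer_dists[-1]
    settled = len(layer_dists) - 1
    for i in range(len(layer_dists) - 1, -1, -1):
        if js_divergence(layer_dists[i], final) >= threshold:
            break
        settled = i
    return settled

def is_deep_thinking(layer_dists, late_fraction=0.15, threshold=0.1):
    """True if the prediction only settles inside the last `late_fraction` of layers."""
    n = len(layer_dists)
    late_layers = max(1, round(late_fraction * n))
    return settle_layer(layer_dists, threshold) >= n - late_layers

def deep_thinking_ratio(per_token_layer_dists, **kwargs):
    """Share of tokens in a sample that qualify as deep-thinking."""
    flags = [is_deep_thinking(d, **kwargs) for d in per_token_layer_dists]
    return sum(flags) / len(flags)

def think_at_n(samples, keep=0.5):
    """Assumed selection rule: majority vote among the top `keep` share of samples by ratio.

    `samples` is a list of (answer, deep_thinking_ratio) pairs.
    """
    ranked = sorted(samples, key=lambda s: s[1], reverse=True)
    kept = ranked[: max(1, int(len(ranked) * keep))]
    return Counter(answer for answer, _ in kept).most_common(1)[0][0]
```

With 20 layers, a token whose distribution flips at layer 18 counts as deep-thinking, while a token that is stable from layer 0 does not. The vote in `think_at_n` can disagree with a plain majority vote when the most frequent answer comes mostly from low-ratio samples.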

2. Codified Context

Single-file AGENTS.md manifests don't scale to large codebases. This paper presents a three-component infrastructure built for a 108,000-line C# distributed system, evaluated across 283 development sessions:

- Hot-memory constitution: A living document encoding conventions and orchestration protocols consulted at session start

- 19 domain-expert agents: Each owns a bounded codebase domain with its own context slice

- Cold-memory knowledge base: 34 on-demand specification documents retrieved only when needed

Takeaway: Tiered context management prevents agents from forgetting conventions and losing coherence on long-running projects.
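The three tiers can be sketched as a small context assembler. This is an illustrative toy, not the paper's system: the class names, the path-prefix routing rule, and the "spec id appears in the task text" retrieval rule are all assumptions made to keep the example self-contained.

```python
from dataclasses import dataclass, field

@dataclass
class DomainAgent:
    name: str
    path_prefixes: tuple          # slice of the codebase this agent owns
    spec_ids: tuple = ()          # cold-memory documents it is allowed to pull

@dataclass
class ContextStore:
    constitution: str                                # hot memory, loaded every session
    agents: list = field(default_factory=list)
    cold_specs: dict = field(default_factory=dict)   # spec id -> document text

    def route(self, file_path):
        """Return the agent with the longest matching path prefix, or None."""
        best, best_len = None, -1
        for agent in self.agents:
            for prefix in agent.path_prefixes:
                if file_path.startswith(prefix) and len(prefix) > best_len:
                    best, best_len = agent, len(prefix)
        return best

    def build_context(self, file_path, task):
        """Assemble hot memory, the owning agent, and only the specs the task names."""
        parts = [self.constitution]
        agent = self.route(file_path)
        if agent is not None:
            parts.append(f"[agent: {agent.name}]")
            for spec_id in agent.spec_ids:
                # Hypothetical retrieval rule: load a spec only if the task mentions it.
                if spec_id in task and spec_id in self.cold_specs:
                    parts.append(self.cold_specs[spec_id])
        return "\n\n".join(parts)
```

The design point is that the constitution is always present, while specification documents cost nothing until a task actually asks for them.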

3. Discovering Multi-Agent Learning Algorithms with LLMs

Google DeepMind uses AlphaEvolve, an evolutionary coding agent powered by LLMs, to automatically discover new multi-agent learning algorithms for imperfect-information games.

- VAD-CFR: A novel iterative regret minimisation variant with volatility-sensitive discounting and consistency-enforced optimism — outperforms Discounted Predictive CFR+

- SHOR-PSRO: A population-based training variant blending Optimistic Regret Matching with temperature-controlled strategy distributions

- The discovered algorithms contain novel design choices that human researchers hadn't previously considered

Takeaway: LLMs can serve as algorithmic designers, not just code generators, with potential applications in optimisation, scheduling, and resource allocation.
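For readers unfamiliar with the CFR family, the building block that such variants modify is regret matching with an optional discount on accumulated regret. The sketch below shows only that textbook baseline for a single decision point. It is not VAD-CFR: the volatility-sensitive discounting and consistency-enforced optimism are not reproduced, and the fixed `discount` parameter is a stand-in assumption.

```python
import numpy as np

def regret_matching(cum_regret):
    """Play each action in proportion to its positive cumulative regret."""
    positive = np.maximum(np.asarray(cum_regret, dtype=float), 0.0)
    total = positive.sum()
    if total > 0:
        return positive / total
    # No action has positive regret: fall back to the uniform strategy.
    return np.full(len(positive), 1.0 / len(positive))

def update_regret(cum_regret, action_values, strategy, discount=1.0):
    """Discount old regret, then add each action's advantage over the current strategy."""
    cum_regret = np.asarray(cum_regret, dtype=float)
    action_values = np.asarray(action_values, dtype=float)
    expected = float(np.dot(strategy, action_values))
    return discount * cum_regret + (action_values - expected)
```

Starting from zero regret with action values `[1, 0, -1]` under a uniform strategy, one update produces regrets `[1, 0, -1]`, so the next strategy puts all its weight on the first action. A discount below 1 makes older regret fade, which is the knob that discounted CFR variants tune.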

4. Evaluating AGENTS.md

This research evaluates whether AGENTS.md files actually improve AI coding agent performance. Testing Claude Code (Sonnet-4.5), Codex (GPT-5.2 & GPT-5.1 mini), and Qwen Code (Qwen3-30b-coder), the findings are counterintuitive:

- Human-written AGENTS.md: modest +4% improvement in some cases

- LLM-generated AGENTS.md: -2% performance hit

- Both consistently increase inference cost by 20%+

- Context files cause agents to explore more code paths but make tasks harder by introducing noise

Takeaway: Keep AGENTS.md minimal and focused on critical constraints only. Information density matters more than comprehensiveness.

5. PAHF — Personalized Agents from Human Feedback

Meta introduces PAHF, a continual agent personalisation framework coupling explicit per-user memory with proactive and reactive feedback mechanisms.

- Three-step loop: Pre-action clarification → grounding in retrieved preferences → post-action feedback integration

- Enables continual learning from live interactions without retraining

- Two novel benchmarks in embodied manipulation and online shopping measuring preference learning and adaptation

- Outperforms no-memory and single-channel baselines; reduces initial personalisation error and adapts rapidly to persona shifts

Takeaway: Combining persistent memory with dual feedback channels is essential for practical agent personalisation.
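The three-step loop can be sketched in a few lines. This is a toy under stated assumptions, not Meta's implementation: `PreferenceMemory`, `personalised_step`, and the three callbacks (`ask_user`, `act`, `get_feedback`) are hypothetical names, and preferences are reduced to one value per user and slot.

```python
class PreferenceMemory:
    """Explicit per-user memory: (user, slot) -> preferred value."""

    def __init__(self):
        self._prefs = {}

    def get(self, user, slot):
        return self._prefs.get((user, slot))

    def set(self, user, slot, value):
        self._prefs[(user, slot)] = value

def personalised_step(memory, user, slot, ask_user, act, get_feedback):
    """One pass of the loop: clarify if needed, act on the stored preference, absorb feedback."""
    preference = memory.get(user, slot)
    if preference is None:
        # 1. Pre-action clarification: ask only when memory has nothing to ground on.
        preference = ask_user(slot)
        memory.set(user, slot, preference)
    # 2. Ground the action in the retrieved preference.
    result = act(preference)
    # 3. Post-action feedback: a correction overwrites the stored preference.
    correction = get_feedback(result)
    if correction is not None:
        memory.set(user, slot, correction)
    return result
```

The proactive channel (step 1) cuts the error on the first interaction, while the reactive channel (step 3) is what lets the agent follow a persona shift without retraining.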

6. Doc-to-LoRA

Sakana AI introduces Doc-to-LoRA (D2L), a lightweight hypernetwork that meta-learns to compress long documents into LoRA adapters in a single forward pass.

- Converts documents into parameter-space representations, eliminating expensive re-processing

- Achieves near-perfect zero-shot accuracy on needle-in-a-haystack tasks at 4x beyond the target LLM's native context window

- Outperforms standard long-context approaches on QA datasets while consuming less memory

- Ideal for repeated-query applications: compress once, amortise cost across all queries

Takeaway: Parametric compression can extend context capabilities without architectural changes.
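The "compress once, amortise" pattern can be illustrated with a toy hypernetwork and an adapter cache. Everything here is an assumption for illustration: the random linear `hypernet`, the tiny dimensions, and the `AdapterCache` class are not taken from D2L. Only the standard LoRA update W + (alpha / r) * B A is established practice.

```python
import numpy as np

D_EMB, D_IN, D_OUT, RANK = 8, 6, 4, 2  # toy sizes, chosen arbitrarily

def make_toy_hypernet(seed=0):
    """Stand-in hypernetwork: fixed random linear maps from a document embedding to (A, B)."""
    rng = np.random.default_rng(seed)
    to_a = rng.normal(size=(RANK * D_IN, D_EMB))
    to_b = rng.normal(size=(D_OUT * RANK, D_EMB))

    def hypernet(doc_embedding):
        A = (to_a @ doc_embedding).reshape(RANK, D_IN)
        B = (to_b @ doc_embedding).reshape(D_OUT, RANK)
        return A, B

    return hypernet

class AdapterCache:
    """Run the hypernetwork once per document and reuse the adapter for every later query."""

    def __init__(self, hypernet):
        self.hypernet = hypernet
        self.adapters = {}
        self.hypernet_calls = 0

    def adapter_for(self, doc_id, doc_embedding):
        if doc_id not in self.adapters:
            self.hypernet_calls += 1
            self.adapters[doc_id] = self.hypernet(doc_embedding)
        return self.adapters[doc_id]

def apply_lora(W, A, B, alpha=1.0):
    """Standard LoRA update: add the scaled low-rank product B @ A to the frozen weight."""
    return W + (alpha / A.shape[0]) * (B @ A)
```

Calling `adapter_for` three times with the same `doc_id` runs the hypernetwork only once, and the weight change it produces has rank at most `RANK`, which is where the memory saving over keeping the full document in context comes from.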

7. AgentConductor

AgentConductor is a reinforcement learning-enhanced multi-agent system for code generation that dynamically generates interaction topologies based on task characteristics.

- LLM-based orchestrator builds density-aware layered DAG topologies tailored to problem difficulty

- Simple problems → sparse topologies; complex problems → denser collaboration

- Outperforms the strongest baseline by up to 14.6% in pass@1 accuracy, with a 13% reduction in topology density and a 68% reduction in token cost

- Execution feedback refines topologies adaptively when initial solutions fail

Takeaway: Adaptive topology generation eliminates redundant agent communication and dramatically cuts costs.
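To show what "density" means for a layered DAG, the sketch below builds one with a simple rule and measures its edge density. The rule-based builder is a stand-in assumption: in AgentConductor the topology comes from an RL-trained LLM orchestrator, which this toy does not attempt to reproduce. The `difficulty` score in [0, 1] and both function names are hypothetical.

```python
import math

def build_layered_dag(layers, difficulty):
    """Connect each node to k nodes of the previous layer, where k grows with difficulty.

    `layers` is a list of lists of agent names; edges only run from one layer to the next.
    """
    edges = []
    for prev, cur in zip(layers, layers[1:]):
        k = max(1, math.ceil(difficulty * len(prev)))
        for node in cur:
            edges += [(src, node) for src in prev[:k]]
    return edges

def density(layers, edges):
    """Fraction of possible layer-to-next-layer edges that are actually present."""
    possible = sum(len(a) * len(b) for a, b in zip(layers, layers[1:]))
    return len(edges) / possible if possible else 0.0
```

For layers of sizes 1, 3, and 2, a difficulty of 0 yields 5 of the 9 possible edges, while a difficulty of 1 yields all 9. Fewer edges mean fewer inter-agent messages, which is the source of the token savings.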

8. ActionEngine

Georgia Tech and Microsoft Research introduce ActionEngine, a training-free framework transforming GUI agents from reactive executors into programmatic planners.

- Builds state-machine memory through offline exploration

- Synthesises executable Python programs for task completion

- Achieves 95% success on Reddit tasks from WebArena with a single LLM call on average

- 11.8x cost reduction and 2x latency reduction vs. vision-only baselines
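The shift from reactive execution to programmatic planning can be sketched as a graph search over the explored state machine, followed by emitting a straight-line program. This is a minimal illustration, not ActionEngine's code: the dictionary layout of `state_machine`, the breadth-first planner, and the `page.do(...)` call in the generated program are all assumptions.

```python
from collections import deque

def plan_actions(state_machine, start, goal):
    """Breadth-first search over {state: {action: next_state}}; returns a shortest action list."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for action, next_state in state_machine.get(state, {}).items():
            if next_state not in seen:
                seen.add(next_state)
                queue.append((next_state, path + [action]))
    return None  # goal not reachable from the explored states

def synthesise_program(actions):
    """Emit Python source for a `run(page)` function that replays the planned actions.

    `page.do` is a hypothetical driver method, not a real browser API.
    """
    lines = ["def run(page):"]
    lines += [f"    page.do({action!r})" for action in actions] or ["    pass"]
    return "\n".join(lines)
```

Because the plan is computed from memory built offline, the whole task compiles to one program up front instead of one model call per click, which is consistent with the cost and latency reductions reported above.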

9. CoT Faithfulness via REMUL

REMUL is a training approach making chain-of-thought reasoning more faithful and monitorable. A speaker model generates reasoning traces that multiple listener models attempt to follow, with RL rewarding reasoning understandable to other models.

- Improves three faithfulness metrics while boosting overall accuracy

- Produces shorter, more direct reasoning chains

- Tested on BIG-Bench Extra Hard, MuSR, ZebraLogicBench, and FOLIO
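One plausible reading of "rewarding reasoning understandable to other models" is a listener-agreement reward: the speaker's trace scores higher when more listeners, given only that trace, arrive at the speaker's answer. The sketch below implements just that reading. The function name, the equal weighting of listeners, and the callable-listener interface are assumptions, and the actual REMUL reward may differ.

```python
def listener_agreement_reward(trace, speaker_answer, listeners):
    """Fraction of listeners whose answer, derived from the trace alone, matches the speaker's.

    Each listener is a callable taking the reasoning trace and returning an answer.
    """
    if not listeners:
        raise ValueError("at least one listener is required")
    matches = sum(1 for listen in listeners if listen(trace) == speaker_answer)
    return matches / len(listeners)
```

Under this reward, a trace that skips steps or hides the real reasoning tends to score lower, because listeners cannot reconstruct the answer from it. That would also explain a pull towards shorter, more direct chains.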

10. Learning to Rewrite Tool Descriptions

Intuit AI Research introduces Trace-Free+, a curriculum learning framework that optimises tool descriptions for LLM agents (not humans) without relying on execution traces.

- Consistent gains on unseen tools and strong cross-domain generalisation

- Robust as candidate tool count scales to over 100

- Demonstrates that improving tool interfaces complements agent fine-tuning

Key Themes This Week

- Efficiency over verbosity: Better reasoning comes from deeper computation, not more tokens

- Scalable agent infrastructure: Tiered memory and specialised agents beat monolithic context files

- LLMs as designers: Evolutionary LLM systems can discover novel algorithms autonomously

- Context file caveats: AGENTS.md files can hurt as much as help — keep them lean

- Personalisation at scale: Persistent memory + dual feedback is the blueprint for adaptive agents