Top AI Papers of the Week

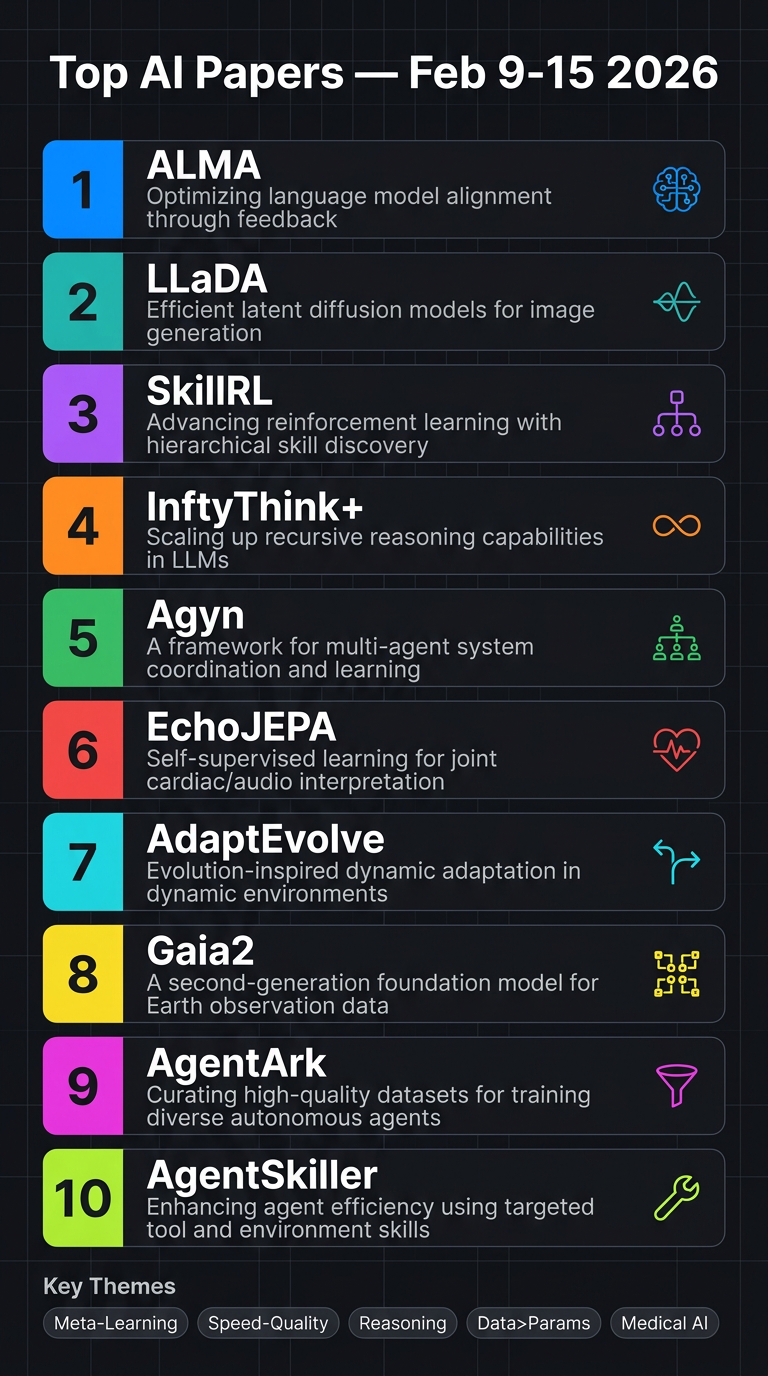

Top AI Papers of the Week (February 9–15, 2026)

From Elvis Saravia's AI Newsletter

Main Thesis

This week's top AI papers collectively push the frontier on autonomous agents, memory design, reasoning efficiency, multi-agent systems, and domain-specific foundation models — with a strong theme of automating what was previously hand-engineered.

Paper Summaries

1. ALMA — Automated Meta-Learning of Memory Designs

Jeff Clune's group introduces a Meta Agent that automatically discovers memory architectures for agentic systems via open-ended code-space search — no hand-engineering required.

- Discovers domain-specific structures: affordance graphs, strategy libraries, risk-interaction schemas

- Outperforms all human-designed baselines: 53.9% vs 48.6% with GPT-5-mini

- Scales better with experience and transfers across foundation models

2. LLaDA 2.1 — Discrete Diffusion LLMs with Token Editing

Ant Group's major upgrade to diffusion language models breaks the speed-quality trade-off with Token-to-Token (T2T) editing and the first large-scale RL framework for diffusion LLMs.

- Speedy Mode (throughput) vs Quality Mode (accuracy) — configurable without swapping models

- LLaDA 2.1-Flash (100B): 892 tokens/sec on HumanEval+; Mini (16B): 1,587 TPS

- EBPO (Evidence-Based Policy Optimization) enables stable RL training across 33 benchmarks

3. SkillRL — Recursive Skill-Augmented Reinforcement Learning

Bridges raw experience and policy improvement through automatic skill discovery, storing reusable behavioral patterns instead of noisy trajectories.

- Hierarchical SkillBank distills trajectories into high-level skills, reducing token footprint

- Dual retrieval: general heuristics + task-specific skills

- Skills and policy co-evolve recursively during training

- Results: 89.9% on ALFWorld, 72.7% on WebShop, +15.3% over baselines

4. InftyThink+ — Infinite-Horizon Reasoning via RL

Addresses quadratic cost, context limits, and lost-in-the-middle degradation in long chain-of-thought with learned iterative reasoning boundaries.

- Model autonomously decides when to summarize and what to preserve

- Two-stage training: supervised cold-start → trajectory-level GRPO

- +21% accuracy on AIME24 (29.5% → 50.9%) vs vanilla long-CoT RL (38.8%)

- 50% token reduction with efficiency reward, minimal accuracy trade-off

5. Agyn — Multi-Agent Software Engineering as Organizational Process

Models software engineering as a team-based organizational workflow with four specialized agents (manager, researcher, engineer, reviewer) — not a monolithic pipeline.

- Role-specific model routing: large models for reasoning, smaller code-specialized models for implementation

- Dynamic coordination: manager adapts workflow based on intermediate outcomes

- 72.2% on SWE-bench 500 without SWE-bench tuning, +7.4% over single-agent baselines

6. EchoJEPA — Foundation Model for Echocardiography

Latent predictive model trained on 18 million echocardiograms from 300,000 patients, learning to ignore ultrasound noise via JEPA-style objectives.

- ~20% improvement on LV ejection fraction estimation; ~17% on RV systolic pressure

- 79% view classification accuracy with 1% labeled data (vs 42% baseline with 100%)

- Only 2% degradation under acoustic perturbations (vs 17% for competitors)

- Zero-shot pediatric performance exceeds fully fine-tuned baselines

7. AdaptEvolve — Confidence-Driven Model Routing for Agentic Loops

Uses intrinsic generation confidence to dynamically route between small and large models during iterative refinement, cutting costs without sacrificing accuracy.

- ~38% inference cost reduction while retaining ~97.5% of full-model accuracy

- Model-agnostic, no task-specific tuning required

- Practical for production agent loops where LLM calls compound rapidly

8. Gaia2 — Dynamic Agent Benchmark by Meta FAIR

Next-generation benchmark where environments change independently of agent actions, forcing agents to handle temporal pressure, uncertainty, and multi-agent coordination.

- GPT-5 leads at 42% pass@1; Kimi-K2 leads open-source at 21%

- Built on open-source Agents Research Environments (ARE) platform

- Paradigm shift from static to dynamic agentic evaluation

9. AgentArk — Distilling Multi-Agent Debate into a Single LLM

Transfers reasoning and self-correction abilities of multi-agent debate systems into one model at training time via three hierarchical distillation strategies.

- Average +4.8% improvement over single-agent baselines on math/reasoning benchmarks

- Cross-family distillation (e.g., Qwen3-32B → LLaMA-3-8B) yields largest gains

- Approaches full multi-agent performance at a fraction of inference cost

10. AgentSkiller — Scaling Tool-Use Agents via Data Quality

Generates 11K high-quality synthetic trajectories across diverse tool-use scenarios, demonstrating data quality beats parameter count.

- 14B model beats GPT-o3 on tau2-bench: 79.1% vs 68.4%

- 4B variant outperforms 70B and 235B models

- Semantic integration across domains is the key differentiator

Key Takeaways

| Theme | Insight |

| Meta-learning | ALMA shows memory design itself can be automated, not just learned |

| Speed-quality trade-offs | LLaDA 2.1 and AdaptEvolve both offer configurable knobs between cost and accuracy |

| Reasoning efficiency | InftyThink+ proves bounded-context iterative reasoning beats long-CoT at lower cost |

| Organizational AI | Agyn demonstrates team structure and role design may matter as much as model size |

| Data > Parameters | AgentSkiller's 4B model beats 235B models — quality data synthesis is a force multiplier |

| Medical AI at scale | EchoJEPA sets a new bar for label-efficient, robust clinical foundation models |

Note: Exact arXiv links were not provided in the source article. Links above are placeholders — check arxiv.org for the specific papers.