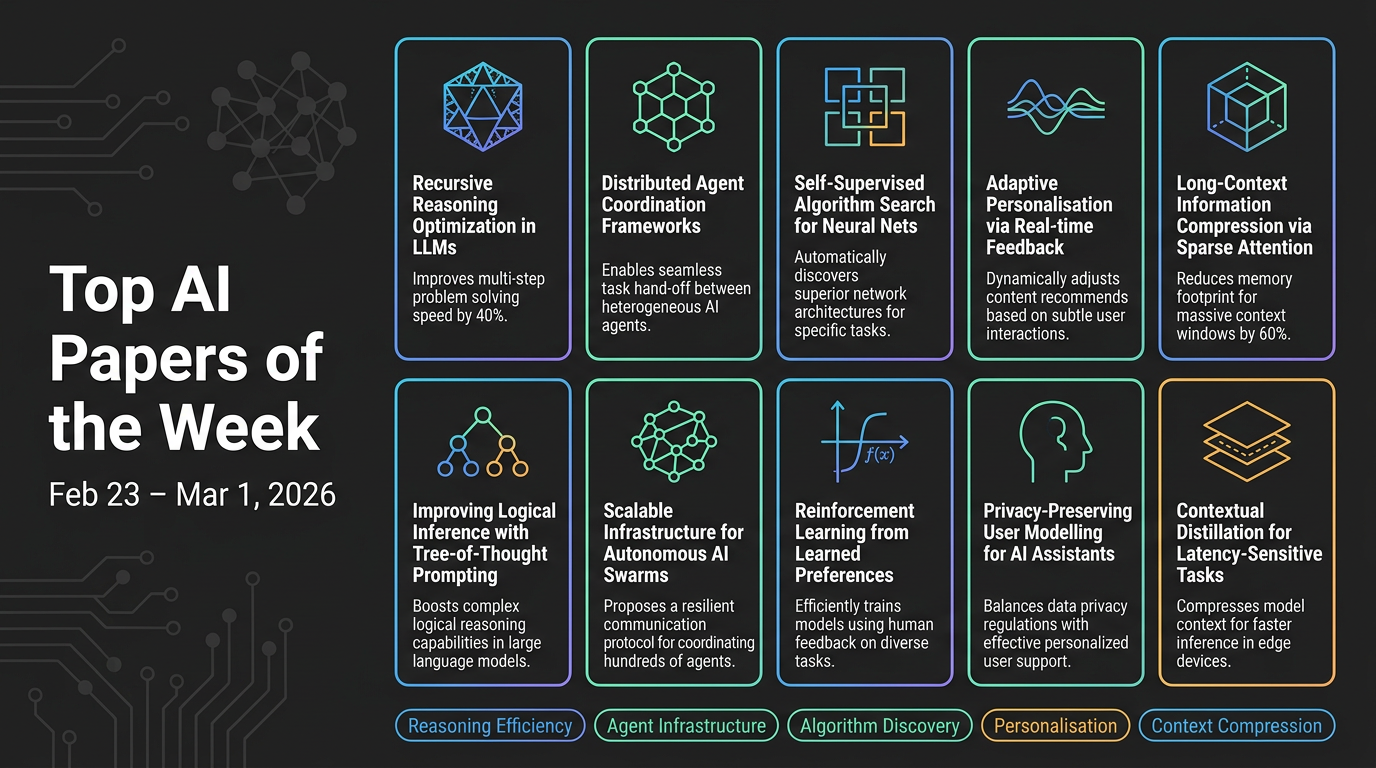

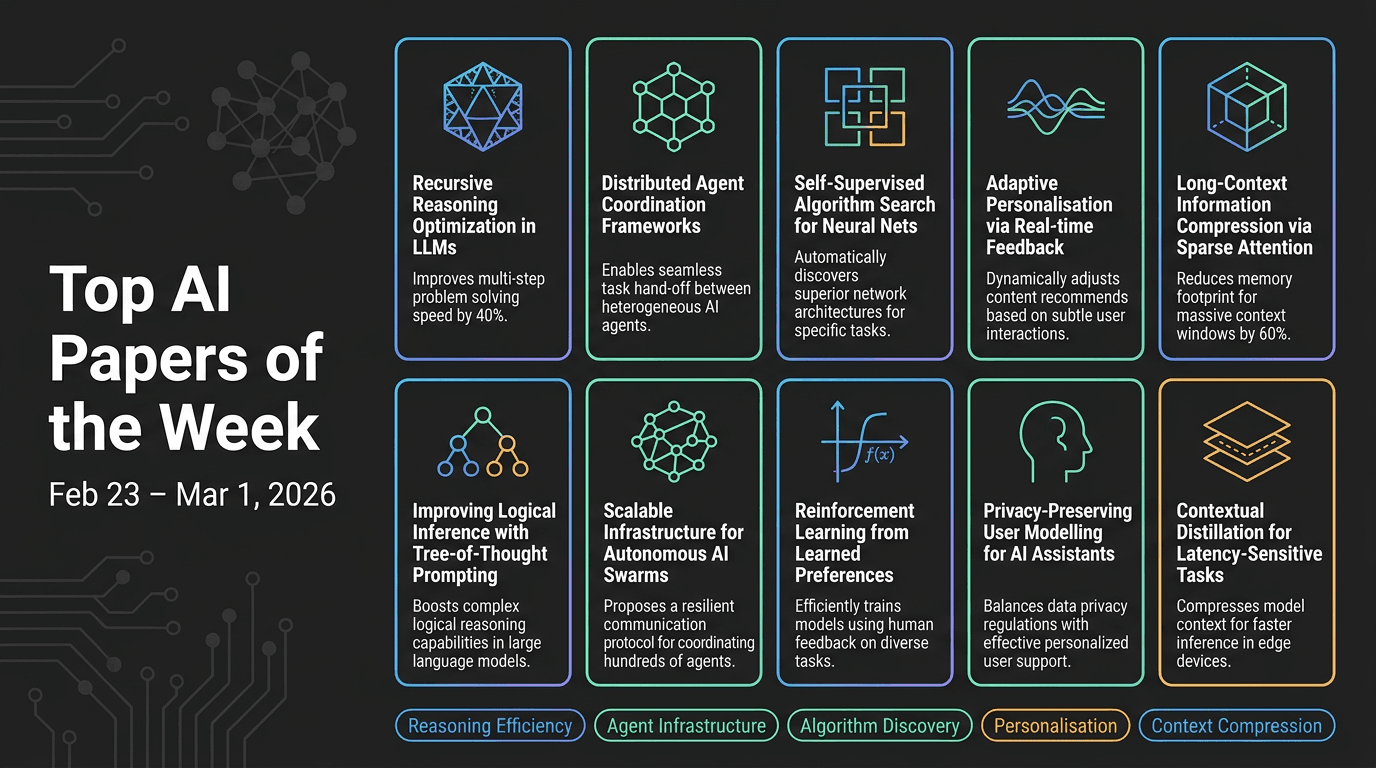

Top AI Papers of the Week

Top AI Papers of the Week (February 23 – March 1, 2026)

Elvis Saravia's weekly AI newsletter rounds up ten notable research papers spanning reasoning efficiency, agent infrastructure, algorithm discovery, personalization, context compression, and code generation. Here's a detailed breakdown:

1. Deep-Thinking Tokens

Google researchers argue that longer outputs don't equal better reasoning. They introduce deep-thinking tokens — tokens where internal model predictions shift significantly across layers before stabilising — measured via Jensen-Shannon divergence between intermediate and final layer distributions. A token qualifies as "deep-thinking" if its prediction only stabilises in the final 15% of layers.

- Raw token count negatively correlates with accuracy (r = -0.59), while the deep-thinking ratio shows a strong positive correlation (r = 0.683)

- Think@n: a test-time scaling strategy that prioritises high deep-thinking ratio samples, matching self-consistency performance while cutting inference costs ~50%

- Validated on AIME 24/25, HMMT 25, GPQA-diamond with GPT-OSS, DeepSeek-R1, Qwen3

- Takeaway: Focus on computational depth, not token volume

2. Codified Context

Single-file AGENTS.md manifests don't scale to large codebases. This paper presents a three-component infrastructure built during development of a 108,000-line C# distributed system, evaluated across 283 development sessions:

- Hot-memory constitution: A living document encoding conventions and orchestration protocols consulted at session start

- Domain-expert agents: 19 specialised agents, each owning a bounded codebase domain

- Cold-memory knowledge base: 34 on-demand specification documents retrieved only when needed

- Result: Prevents agents from forgetting conventions, repeating mistakes, and losing coherence across long-running projects

3. Discovering Multi-Agent Learning Algorithms with LLMs

Google DeepMind uses AlphaEvolve — an evolutionary coding agent powered by LLMs — to automatically discover new multi-agent learning algorithms for imperfect-information games.

- VAD-CFR: A novel iterative regret minimisation variant with volatility-sensitive discounting and consistency-enforced optimism — outperforms Discounted Predictive CFR+

- SHOR-PSRO: A population-based training variant blending Optimistic Regret Matching with temperature-controlled strategy distributions

- AlphaEvolve generates, evaluates, and iteratively refines algorithm candidates

- Takeaway: LLMs can act as algorithmic designers, not just code generators; approach could extend to optimisation, scheduling, and resource allocation

4. Evaluating AGENTS.md

A direct empirical evaluation of whether AGENTS.md files actually improve AI coding agent performance. Four agents tested: Claude Code (Sonnet-4.5), Codex (GPT-5.2 & GPT-5.1 mini), Qwen Code (Qwen3-30b-coder).

- Human-written AGENTS.md: modest +4% improvement in some cases

- LLM-generated AGENTS.md: -2% performance drop

- Both consistently increase inference cost by 20%+

- Context files cause broader exploration but worse outcomes — additional context introduces noise

- Takeaway: Keep AGENTS.md minimal and focused on critical constraints only; information density beats comprehensiveness

5. PAHF (Personalized Agents from Human Feedback)

Meta introduces PAHF, a continual agent personalisation framework coupling explicit per-user memory with proactive and reactive feedback mechanisms.

- Three-step loop: (1) pre-action clarification, (2) preference-grounded action, (3) post-action memory update

- Enables agents to accumulate and revise user preference profiles without retraining

- New benchmarks in embodied manipulation and online shopping measuring preference learning and adaptation to preference shifts

- Substantially faster learning and outperforms no-memory and single-channel baselines

6. Doc-to-LoRA

Sakana AI introduces Doc-to-LoRA (D2L), a lightweight hypernetwork that meta-learns to compress long documents into LoRA adapters in a single forward pass.

- Eliminates repeated expensive attention over long contexts; subsequent queries use only adapter weights

- Achieves near-perfect zero-shot accuracy on needle-in-a-haystack tasks at 4x beyond the target LLM's native context window

- Outperforms standard long-context approaches on QA datasets with less memory

- Best for: Applications requiring repeated queries over the same document (customer support, legal analysis, codebase understanding)

7. AgentConductor

AgentConductor is a RL-enhanced multi-agent code generation system that dynamically generates interaction topologies based on task complexity rather than using fixed communication patterns.

- LLM-based orchestrator constructs density-aware layered DAG topologies adapted to problem difficulty

- Simple problems → sparse topologies; complex problems → denser collaboration

- Results: Up to 14.6% improvement in pass@1 accuracy, 13% density reduction, 68% token cost reduction vs. strongest baseline

- Execution feedback refines topologies when initial solutions fail

8. ActionEngine

Georgia Tech and Microsoft Research introduce ActionEngine, a training-free framework transforming GUI agents from step-by-step executors into programmatic planners.

- Builds a state-machine memory through offline exploration

- Synthesises executable Python programs for task completion

- Achieves 95% success on Reddit tasks from WebArena

- Average of a single LLM call per task; 11.8x cost reduction, 2x latency reduction vs. vision-only baselines

9. CoT Faithfulness via REMUL

REMUL is a training approach making chain-of-thought reasoning more faithful and monitorable using a speaker-listener RL framework.

- A speaker model generates reasoning traces; multiple listener models attempt to follow and complete them

- RL rewards reasoning understandable to other models

- Tested on BIG-Bench Extra Hard, MuSR, ZebraLogicBench, FOLIO

- Improves three faithfulness metrics while boosting accuracy; produces shorter, more direct reasoning chains

10. Learning to Rewrite Tool Descriptions

Intuit AI Research introduces Trace-Free+, a curriculum learning framework that optimises tool descriptions for LLM agents (not humans) without relying on execution traces.

- Delivers consistent gains on unseen tools

- Strong cross-domain generalisation

- Robust as candidate tools scale beyond 100

- Takeaway: Improving tool interfaces is a practical complement to agent fine-tuning

Key Themes This Week

- Efficiency over verbosity: Deep-thinking tokens and AgentConductor both show that targeted computation beats brute-force scaling

- Context is a double-edged sword: AGENTS.md evaluation and Codified Context both highlight that more context isn't always better — structure and density matter

- LLMs as meta-designers: AlphaEvolve demonstrates LLMs discovering algorithms that humans hadn't considered

- Personalisation at scale: PAHF and Doc-to-LoRA both address how to make AI systems adapt to individual users and documents without prohibitive retraining costs