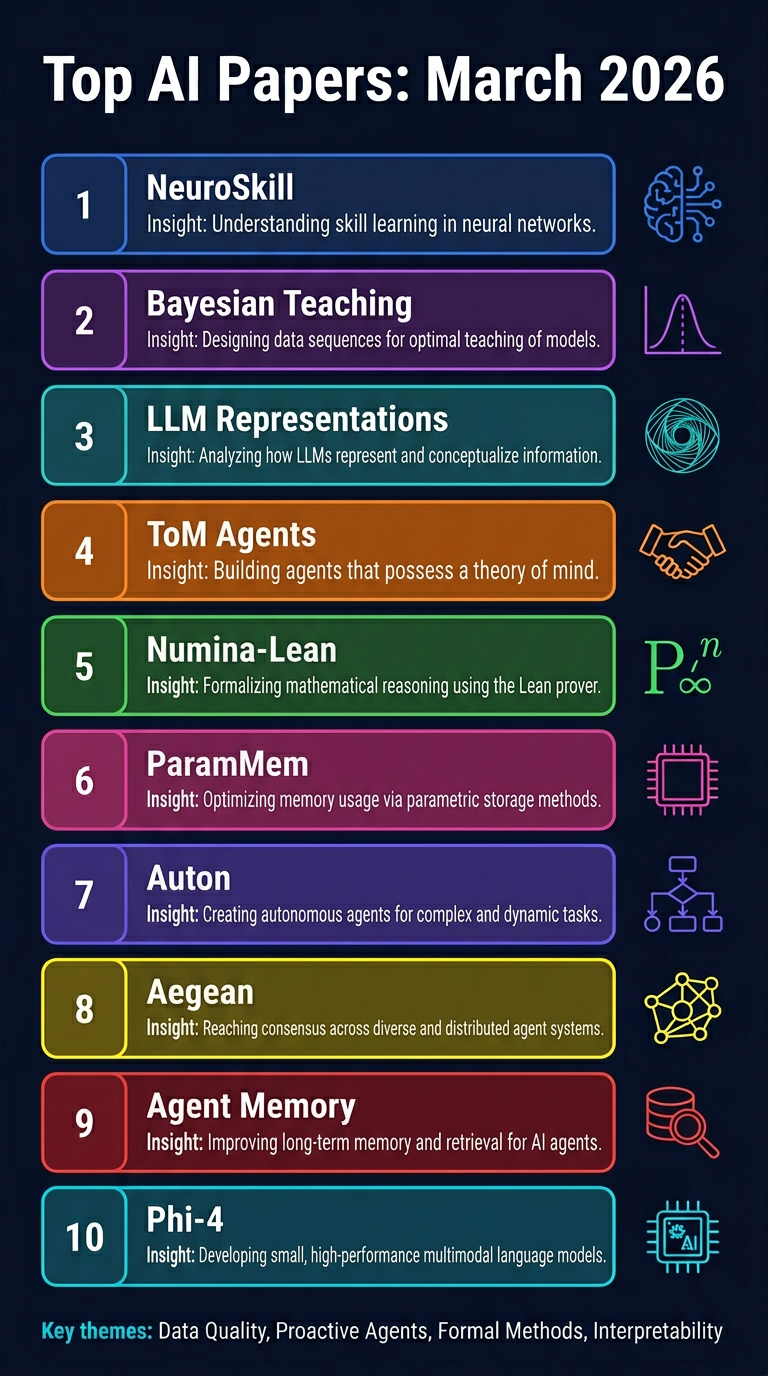

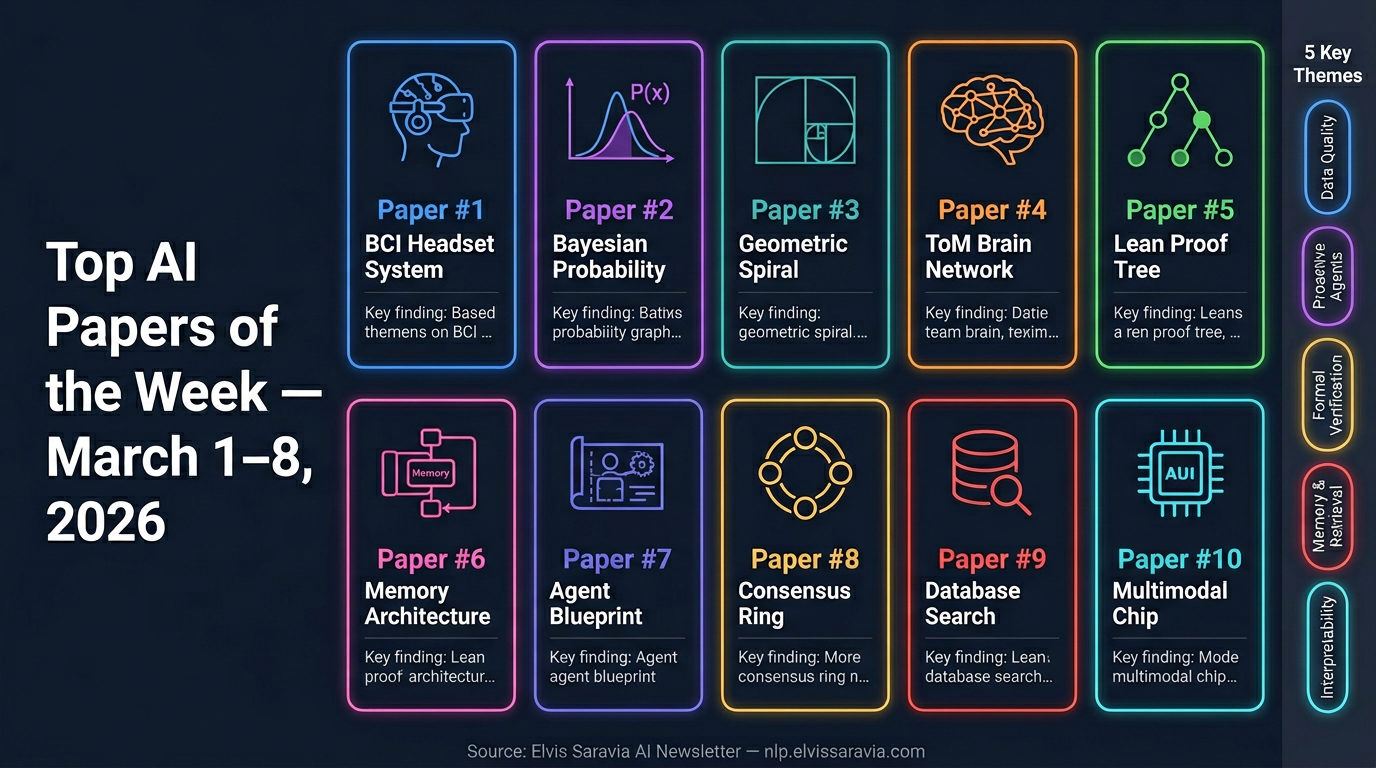

Top AI Papers of the Week (March 1–8, 2026)

From Elvis Saravia's AI Newsletter

This week's roundup covers 10 significant AI research papers spanning agentic systems, reasoning, memory, multi-agent coordination, and efficient models.

1. NeuroSkill — Brain-Computer Interface Meets Agentic AI

MIT researchers introduce NeuroSkill, a proactive agentic system that reads BCI (Brain-Computer Interface) signals to anticipate user needs in real time — before the user explicitly asks for help.

- NeuroLoop is the core agentic flow: it processes EXG brain signals, converts them to state-of-mind descriptions, and triggers tool calls accordingly

- Detects cognitive overload, confusion, or emotional shifts autonomously

- Runs fully offline on edge devices — no cloud dependency, preserving privacy and minimising latency

- Released open-source under GPLv3 + AI100 ethical licensing

Takeaway: The shift from reactive to proactive AI agents could redefine human-computer interaction, especially in accessibility and cognitive assistance.

2. Bayesian Teaching for LLMs — Teaching Models to Reason Probabilistically

Google researchers demonstrate that LLMs can be fine-tuned to reason like Bayesians by training on synthetic interactions with an idealised Bayesian Assistant.

- LLMs typically suffer from base rate neglect and conservatism — this approach substantially reduces both

- Models generalise Bayesian reasoning to entirely new task types not seen during training

- Key finding: data quality beats model scale — a smaller model trained on Bayesian interactions outperforms larger models reasoning from scratch

- No architectural changes required

Takeaway: Carefully curated synthetic training data can instil transferable reasoning capabilities that raw scale cannot.
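To make base rate neglect concrete, here is a minimal sketch of a single Bayesian update. The scenario, the numbers (a 1% prior, a 90% hit rate, a 5% false-positive rate), and the function name `posterior` are illustrative assumptions and do not come from the paper.

```python
def posterior(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Return P(H | E) for a binary hypothesis H using Bayes' rule."""
    numerator = p_e_given_h * prior
    evidence = numerator + p_e_given_not_h * (1.0 - prior)
    return numerator / evidence


# Hypothetical numbers chosen only to illustrate the effect.
prior = 0.01                 # base rate of the hypothesis
hit_rate = 0.90              # P(evidence | hypothesis true)
false_positive_rate = 0.05   # P(evidence | hypothesis false)

bayes_answer = posterior(prior, hit_rate, false_positive_rate)
neglect_answer = hit_rate    # what a reasoner reports when it ignores the prior

print(f"Bayesian posterior: {bayes_answer:.3f}")
print(f"Base-rate-neglect answer: {neglect_answer:.3f}")
```

With these assumed numbers the correct posterior is about 0.154, far below the 0.90 that a reasoner reports when it ignores the prior. That gap is base rate neglect. Conservatism is a different error, in which the belief moves less than the evidence warrants.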

3. Why LLMs Form Geometric Representations

This paper gives a mathematical proof of why LLMs spontaneously organise internal representations into geometric structures: months form circles, years form spirals, and spatial coordinates align to manifolds.

- Root cause: translation symmetry in natural language co-occurrence statistics forces circular geometry for cyclic concepts

- Spirals and rippled manifolds emerge for continuous concepts (e.g. historical years, number lines)

- The geometry is analytically derived, not merely observed post-hoc

- Universal across model architectures and sizes

Takeaway: LLM representations are not arbitrary — they reflect deep statistical structure in language, with implications for interpretability.
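The link between translation symmetry and circular geometry can be illustrated with a toy model. This is a sketch of the underlying linear-algebra fact, not the paper's derivation. The co-occurrence kernel `exp(-distance)` and all names are assumptions. When co-occurrence between two months depends only on their cyclic distance, the cosine and sine modes are eigenvectors of the co-occurrence matrix with the same eigenvalue, so the two-dimensional embedding they span places the months on a circle.

```python
import math

N = 12  # twelve months arranged on a cycle


def cooccurrence(i: int, j: int) -> float:
    """Hypothetical co-occurrence that depends only on cyclic distance."""
    distance = min((i - j) % N, (j - i) % N)
    return math.exp(-distance)


# Translation-invariant (circulant) co-occurrence matrix.
C = [[cooccurrence(i, j) for j in range(N)] for i in range(N)]


def matvec(matrix, vector):
    """Multiply an N x N matrix by a length-N vector."""
    return [sum(matrix[i][j] * vector[j] for j in range(N)) for i in range(N)]


cos_mode = [math.cos(2 * math.pi * j / N) for j in range(N)]
sin_mode = [math.sin(2 * math.pi * j / N) for j in range(N)]

# Eigenvalue predicted from the Fourier transform of the first row.
eigenvalue = sum(C[0][d] * math.cos(2 * math.pi * d / N) for d in range(N))


def is_eigenvector(vector, lam, tol=1e-9):
    """Check that C @ vector equals lam * vector entry by entry."""
    product = matvec(C, vector)
    return all(abs(p - lam * v) < tol for p, v in zip(product, vector))


# Embed each month with the two degenerate modes; every point has radius 1.
embedding = list(zip(cos_mode, sin_mode))
radii = [math.hypot(x, y) for x, y in embedding]

print(is_eigenvector(cos_mode, eigenvalue), is_eigenvector(sin_mode, eigenvalue))
print(all(abs(r - 1.0) < 1e-12 for r in radii))
```

Any kernel that depends only on cyclic distance gives the same result, which is why the specific choice of `exp(-distance)` is not essential to the sketch.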

4. Theory of Mind in Multi-Agent LLMs — More Mechanism Isn't Always Better

Researchers evaluate a multi-agent architecture combining Theory of Mind (ToM), BDI (Belief-Desire-Intention) models, and symbolic solvers on resource allocation tasks.

- Counterintuitive finding: adding cognitive mechanisms does not automatically improve coordination

- Stronger LLMs benefit from ToM; weaker models are confused by the added reasoning overhead

- Symbolic verification helps ground decisions in formal constraints

Takeaway: Match cognitive complexity to model capability. Adding ToM to an underpowered model can actively hurt performance.

5. Numina-Lean-Agent — General Coding Agents for Formal Theorem Proving

Numina-Lean-Agent uses a general-purpose coding agent (Claude) plus Model Context Protocol (MCP) tools to autonomously interact with the Lean proof assistant.

- Solves all 12 Putnam 2025 problems using Claude Opus 4.5 — matching the best closed-source systems

- Successfully formalised the Brascamp-Lieb theorem in collaboration with mathematicians

- No expensive task-specific training; performance improves simply by upgrading the base model

- MCP tools used: Lean-LSP-MCP, LeanDex (semantic theorem retrieval), informal prover

- Released open-source under Creative Commons BY 4.0

Takeaway: General agents with the right tools can match specialised theorem-proving systems, democratising formal mathematics.

6. ParamMem — Fixing Repetitive Self-Reflection in LLM Agents

ParamMem addresses a critical flaw in self-reflective agents: standard reflection generates near-identical outputs across iterations, adding noise rather than useful signal.

- Introduces a parametric memory module encoding cross-sample reflection patterns into model weights

- Three-tier memory: parametric (cross-sample patterns), episodic (individual task), cross-sample (global strategies)

- Diversity of reflection strongly correlates with task success

- Weak-to-strong transfer: reflection patterns from smaller models can be applied to larger ones

- Outperforms baselines on code generation, mathematical reasoning, and multi-hop QA

Takeaway: Diverse, structured self-reflection is more valuable than repetitive iteration. Memory architecture matters as much as model size.
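One simple way to detect near-identical reflections is to score how different they are from each other. The sketch below uses mean pairwise Jaccard distance over word sets. This metric and the function names are assumptions chosen for illustration, and the paper may measure diversity differently.

```python
from itertools import combinations
from typing import Sequence


def jaccard_distance(a: str, b: str) -> float:
    """Return 1 - |A ∩ B| / |A ∪ B| over lowercase word sets."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    if not tokens_a and not tokens_b:
        return 0.0
    return 1.0 - len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


def reflection_diversity(reflections: Sequence[str]) -> float:
    """Mean pairwise Jaccard distance; 0.0 means all reflections use the same words."""
    pairs = list(combinations(reflections, 2))
    if not pairs:
        return 0.0
    return sum(jaccard_distance(a, b) for a, b in pairs) / len(pairs)


repetitive = ["check the loop bound", "check the loop bound", "Check the loop bound"]
varied = ["check the loop bound", "handle empty input first", "use a different data structure"]

print(reflection_diversity(repetitive))
print(reflection_diversity(varied))
```

A score near zero across iterations would flag the repetitive-reflection failure described above.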

7. Auton — Declarative Framework for Autonomous Agent Governance

Snap Research introduces Auton, a declarative architecture that separates agent specification from runtime execution to bridge the gap between stochastic LLM outputs and deterministic backend infrastructure.

- Cognitive Blueprint: language-agnostic, auditable declaration of agent identity and capabilities

- Agent execution formalised as an augmented POMDP with a latent reasoning space

- Biologically-inspired hierarchical memory consolidation for long-term retention

- Runtime optimisations: parallel graph execution, speculative inference, dynamic context pruning

- Safety enforced via constraint manifold formalism (policy projection, not post-hoc filtering)

Takeaway: Declarative agent specification enables auditability, portability, and safer deployment of autonomous systems.
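The difference between policy projection and post-hoc filtering can be sketched on a discrete action distribution. This is one possible reading, not Auton's actual formalism or API. The function names, the action names, and the mask-and-renormalise projection are all assumptions.

```python
from typing import Dict, Optional, Set


def project_policy(policy: Dict[str, float], allowed: Set[str]) -> Dict[str, float]:
    """Restrict a policy to allowed actions and renormalise, before any action is chosen."""
    mass = sum(p for action, p in policy.items() if action in allowed)
    if mass == 0.0:
        raise ValueError("no allowed action has non-zero probability")
    return {action: p / mass for action, p in policy.items() if action in allowed}


def post_hoc_filter(chosen_action: str, allowed: Set[str]) -> Optional[str]:
    """Contrast case: the action is chosen first and rejected afterwards if disallowed."""
    return chosen_action if chosen_action in allowed else None


# Hypothetical agent policy and constraint set.
policy = {"read_record": 0.5, "update_record": 0.3, "delete_record": 0.2}
allowed = {"read_record", "update_record"}

projected = project_policy(policy, allowed)
print(projected)
print(post_hoc_filter("delete_record", allowed))
```

In this sketch, projection always yields a valid action distribution. Filtering can leave the agent with no action at all and needs a retry or fallback.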

8. Aegean — Consensus Protocol for Multi-Agent LLM Workflows

Aegean reframes multi-agent refinement as a distributed consensus problem, enabling early termination when enough agents converge on an answer.

- Achieves 1.2–20x latency reduction across four mathematical reasoning benchmarks

- Maintains answer quality within 2.5% of exhaustive approaches

- Consensus-aware serving engine performs incremental quorum detection, eliminating wasted compute on stragglers

Takeaway: Treating multi-agent agreement as a consensus protocol can dramatically cut inference costs without sacrificing quality.
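Early termination on quorum can be sketched as a loop that consumes agent answers as they arrive and stops once any answer reaches the required count. The function name `first_quorum`, the exact-match comparison of answers, and the quorum size are assumptions. This is not Aegean's serving engine.

```python
from collections import Counter
from typing import Iterable, Optional, Tuple


def first_quorum(answers: Iterable[str], quorum: int) -> Tuple[Optional[str], int]:
    """Return (winning answer, answers consumed), or (None, total) if no quorum forms."""
    counts: Counter = Counter()
    consumed = 0
    for answer in answers:
        consumed += 1
        counts[answer] += 1
        if counts[answer] >= quorum:
            # Stop immediately; later (straggler) answers are never read.
            return answer, consumed
    return None, consumed


def agent_stream():
    """Hypothetical stream of answers in completion order."""
    yield from ["42", "41", "42", "42", "40"]


winner, used = first_quorum(agent_stream(), quorum=3)
print(winner, used)
```

Here the third matching answer arrives fourth in the stream, so the fifth agent's output is never consumed. In a real system the saving comes from cancelling the straggler's generation.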

9. Diagnosing Agent Memory — Retrieval Is the Bottleneck

This paper introduces a diagnostic framework separating retrieval failures from utilisation failures in LLM agent memory systems.

- 3×3 factorial study crossing three write strategies with three retrieval methods

- Retrieval is the dominant bottleneck: accounts for 11–46% of errors

- Utilisation failures remain stable at 4–8% regardless of configuration

- Hybrid reranking cuts retrieval failures roughly in half — larger gains than any write strategy optimisation

Takeaway: Before optimising how agents write memories, fix how they retrieve them. Retrieval quality is the primary lever for memory performance.
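The split between retrieval and utilisation failures can be sketched as a per-question classification. The rule here is an assumption about how such a diagnostic could work, and the names are hypothetical: a wrong answer counts as a retrieval failure when the required evidence was not retrieved, and as a utilisation failure when the evidence was retrieved but the answer was still wrong.

```python
from collections import Counter
from typing import Dict, Iterable, Set, Tuple

Case = Tuple[Set[str], Set[str], bool]  # (gold evidence ids, retrieved ids, answer correct)


def classify_outcome(gold_ids: Set[str], retrieved_ids: Set[str], answer_correct: bool) -> str:
    """Label one question as correct, a retrieval failure, or a utilisation failure."""
    if answer_correct:
        return "correct"
    if not gold_ids <= retrieved_ids:
        return "retrieval_failure"    # some needed evidence never reached the model
    return "utilisation_failure"      # evidence was present but not used correctly


def failure_breakdown(cases: Iterable[Case]) -> Dict[str, float]:
    """Return the fraction of cases that fall under each label."""
    counts = Counter(classify_outcome(*case) for case in cases)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}


# Hypothetical evaluation log.
cases = [
    ({"m1"}, {"m1", "m7"}, True),
    ({"m2"}, {"m5"}, False),
    ({"m3", "m4"}, {"m3"}, False),
    ({"m6"}, {"m6"}, False),
]
breakdown = failure_breakdown(cases)
print(breakdown)
```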

10. Phi-4-reasoning-vision-15B — Compact Multimodal Reasoning

Microsoft releases Phi-4-reasoning-vision-15B, a compact open-weight multimodal model combining visual understanding with structured reasoning.

- Trained on only 200 billion tokens of multimodal data

- Excels at math, science reasoning, and UI comprehension

- Requires significantly less compute than comparable open-weight vision-language models

- Key insight: systematic filtering, error correction, and synthetic augmentation are the primary performance levers, and together they push the accuracy-compute Pareto frontier

Takeaway: Efficient multimodal reasoning is achievable at smaller scales with the right data curation strategy.

Key Themes This Week

| Theme | Papers |
| --- | --- |
| Data quality > scale | Bayesian Teaching, Phi-4-reasoning-vision |
| Proactive & cognitive agents | NeuroSkill, ToM Multi-Agent, ParamMem |
| Formal methods meet LLMs | Numina-Lean-Agent, Auton, Aegean |
| Interpretability & structure | Geometric Representations |
| Memory & retrieval | ParamMem, Diagnosing Agent Memory |

Note: arXiv links are approximate and based on available metadata. Verify final paper IDs on arxiv.org.