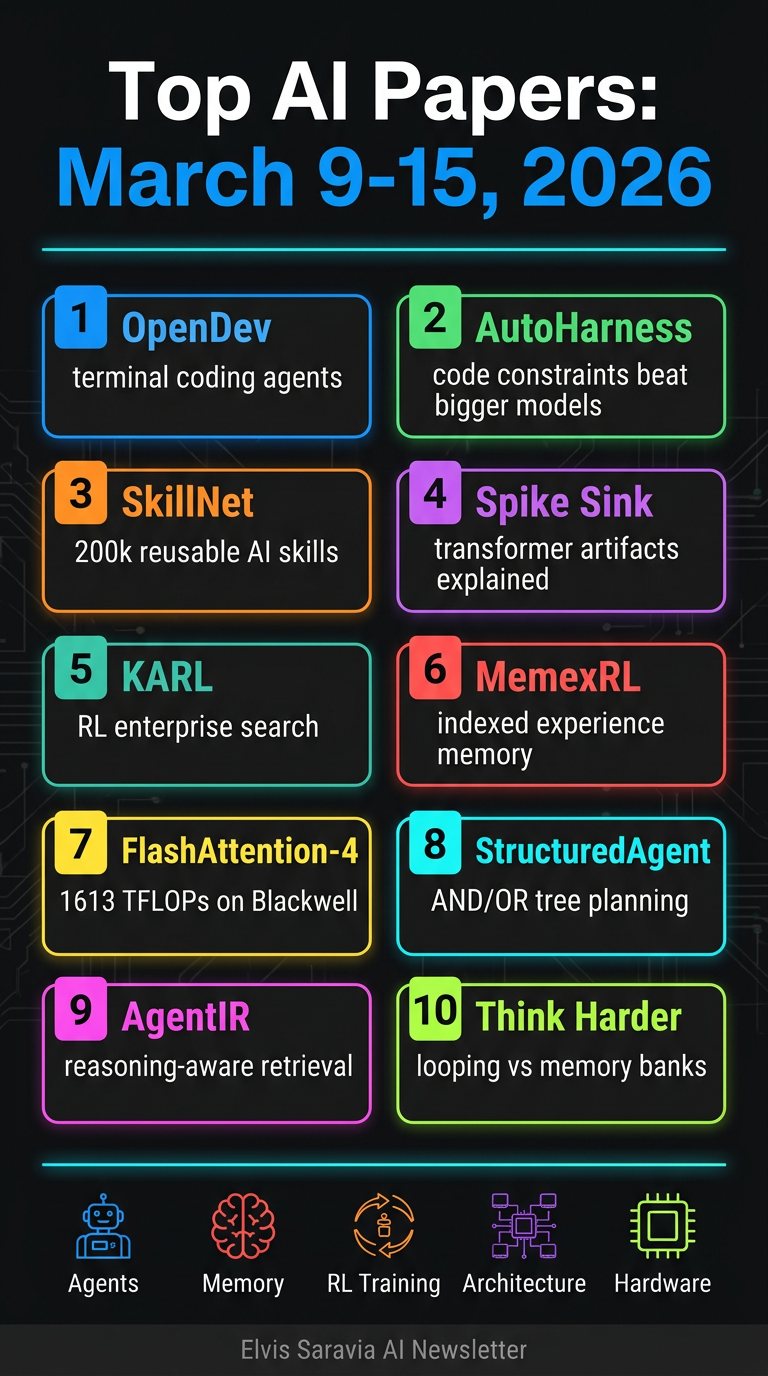

Top AI Papers of the Week (March 9–15, 2026)

Elvis Saravia's AI Newsletter rounds up ten significant AI research papers spanning coding agents, LLM efficiency, reinforcement learning, memory systems, and hardware optimisation. Here's a detailed breakdown:

1. OpenDev — Terminal-Native Coding Agents

OpenDev is an open-source, command-line coding agent, documented in an 81-page technical report covering scaffolding, harness design, and context engineering.

Key findings:

- Dual-agent architecture separates planning from execution using workload-specialised model routing

- Adaptive context compaction uses lazy tool discovery to prevent context bloat and reasoning degradation

- Automated project memory retains critical project context across sessions without manual intervention

- Four-layer architecture spans reasoning, context engineering, tooling, and persistence layers

Takeaway: Terminal-first AI agents can be made production-ready through modular design and intelligent context management rather than brute-force compute.

2. AutoHarness — Automatic Code Harness Synthesis for LLM Agents

Google DeepMind researchers tackle a striking problem: in the Kaggle GameArena chess competition, 78% of Gemini-2.5-Flash losses were due to illegal moves, not poor strategy.

Key findings:

- AutoHarness uses Gemini-2.5-Flash to automatically synthesise a code harness through iterative refinement with environment feedback

- The harness acts as a programmatic constraint layer, completely preventing illegal moves across 145 TextArena games

- Smaller models beat larger ones: Gemini-2.5-Flash with the harness outperforms Gemini-2.5-Pro and GPT-5.2-High on 16 single-player games

- The approach is more cost-effective than scaling to a larger model

Takeaway: Structured code constraints can substitute for raw model capability, reframing agent improvement as harness engineering rather than model scaling.
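
The constraint-layer idea can be sketched in a few lines. This is a hand-written illustration only: in AutoHarness the harness code is synthesised by the model through environment feedback, whereas the class `LegalMoveHarness`, the `propose` and `legal_moves` callables, the retry count, and the toy chess moves below are all hypothetical names and values chosen for the example.

```python
from typing import Callable, Iterable, List


class LegalMoveHarness:
    """Wraps a move-proposing policy so only legal moves reach the environment."""

    def __init__(self,
                 propose: Callable[[str, List[str]], str],
                 legal_moves: Callable[[str], Iterable[str]],
                 max_retries: int = 3) -> None:
        self.propose = propose          # hypothetical stand-in for an LLM call
        self.legal_moves = legal_moves  # hypothetical rules function
        self.max_retries = max_retries

    def act(self, state: str) -> str:
        legal = list(self.legal_moves(state))
        if not legal:
            raise ValueError("no legal moves available in this state")
        rejected: List[str] = []
        for _ in range(self.max_retries):
            move = self.propose(state, rejected)
            if move in legal:
                return move
            rejected.append(move)  # feed the rejection back to the policy
        return legal[0]  # deterministic fallback: never emit an illegal move


# Toy policy: suggests an illegal move first, then a legal one once told.
def flaky_policy(state: str, rejected: List[str]) -> str:
    return "e2e5" if not rejected else "e2e4"


harness = LegalMoveHarness(flaky_policy, lambda state: ["e2e4", "d2d4"])
chosen = harness.act("start")
print(chosen)
```

Whatever the policy proposes, the value returned by `act` is always drawn from the legal set, which is the property the summary attributes to the harness.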

3. SkillNet — Open Infrastructure for Reusable AI Skills

SkillNet addresses the problem of AI agents repeatedly rediscovering solutions instead of systematically reusing learned knowledge.

Key findings:

- Unified skill ontology structures skills from heterogeneous sources (code libraries, prompt templates, tool compositions) with rich relational connections

- Multi-dimensional evaluation across five dimensions: Safety, Completeness, Executability, Maintainability, and Cost-awareness

- 200,000+ skill repository with interactive browsing, management platform, and Python toolkit

- Experiments on ALFWorld, WebShop, and ScienceWorld show 40% improvement in average rewards and 30% reduction in execution steps

Takeaway: Building a structured, quality-gated skill library at scale dramatically outperforms agents that start from scratch on each task.

4. The Spike, the Sparse and the Sink — Transformer Phenomena Dissected

Yann LeCun and NYU collaborators analyse two recurring Transformer phenomena: massive activations (extreme outliers in specific channels) and attention sinks (tokens attracting disproportionate attention regardless of semantic relevance).

Key findings:

- Massive activations operate globally, inducing near-constant hidden representations acting as implicit model parameters

- Attention sinks operate locally, biasing individual attention heads toward short-range dependencies

- Pre-norm configuration is identified as the key architectural element enabling their co-occurrence

- Removing pre-norm causes the two phenomena to decouple entirely

- Their co-occurrence is a design-dependent artifact, not a fundamental requirement for performance

Takeaway: Understanding these phenomena is critical for model compression, quantisation, and KV-cache optimisation — many efficiency techniques fail silently by disrupting them.
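
To make the two phenomena concrete, here is a small sketch of diagnostics one might compute on a layer's outputs. The metric definitions (`sink_mass`, `activation_outlier_ratio`) and the toy numbers are assumptions for illustration, not the measurements used in the paper.

```python
from statistics import median
from typing import Sequence


def sink_mass(attn: Sequence[Sequence[float]], sink_index: int = 0) -> float:
    """Average share of attention that query positions place on one key position."""
    return sum(row[sink_index] for row in attn) / len(attn)


def activation_outlier_ratio(hidden: Sequence[float]) -> float:
    """Ratio of the largest absolute channel value to the median absolute value."""
    magnitudes = [abs(v) for v in hidden]
    return max(magnitudes) / median(magnitudes)


# Toy causal attention map: each row is one query position and sums to 1.
attn = [
    [1.0, 0.0, 0.0],
    [0.8, 0.2, 0.0],
    [0.7, 0.1, 0.2],
]
# Toy hidden state with one extreme channel.
hidden = [0.5, -0.4, 120.0, 0.6, -0.5]

print(round(sink_mass(attn), 3))          # share of attention on token 0
print(activation_outlier_ratio(hidden))   # how far the largest channel stands out
```

A high `sink_mass` on a semantically uninformative token suggests an attention sink, while a large outlier ratio in a few fixed channels suggests massive activations. The ratio is undefined when the median magnitude is zero, which this sketch does not guard against.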

5. KARL — Reinforcement Learning for Enterprise Search Agents

Databricks presents KARL, achieving state-of-the-art performance on hard-to-verify agentic search tasks, alongside the new KARLBench evaluation framework spanning six search domains.

Key findings:

- OAPL (Off-policy Agentic Policy Learning): iterative, large-batch off-policy RL that stays robust to discrepancies between the trainer and the inference engine, without relying on clipped importance-weighting heuristics

- Multi-task heterogeneous training across constraint-driven entity search, cross-document synthesis, tabular reasoning, entity retrieval, procedural reasoning, and fact aggregation

- Pareto-optimal on KARLBench vs. Claude 4.6 and GPT 5.2 across cost-quality and latency-quality tradeoffs

- KARL-BCP achieves 59.6 on BrowseComp-Plus, improving to 70.4 with value-guided search; KARL-TREC reaches 85.0 on TREC-Biogen

Takeaway: Multi-task RL training with off-policy robustness produces search agents that outperform closed frontier models given sufficient test-time compute.

6. Memex(RL) — Indexed Experience Memory for Long-Horizon Tasks

Memex(RL) targets the problem of LLM agents losing track of learned information as tasks grow longer and more complex.

Key findings:

- Indexed experience memory: compact working context with structured summaries and stable indices, with full-fidelity interactions stored in an external database

- The agent learns what to summarise, what to archive, how to index, and when to retrieve through RL optimisation

- RL-optimised memory operations with reward shaping tailored to indexed memory usage under a context budget

- Theoretical guarantee: bounded retrieval complexity maintains decision quality as task history grows

- Empirically achieves better task success rates with significantly smaller working context than baselines

Takeaway: Intelligent indexed memory management outperforms brute-force context expansion — less context used more strategically wins.
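
The split between a compact working context and an external full-fidelity store can be sketched as a small data structure. Everything here is illustrative: the class `IndexedMemory`, its method names, and the oldest-first eviction rule are assumptions, whereas in Memex(RL) the decisions about what to summarise, archive, and retrieve are learned through RL.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class IndexedMemory:
    """Compact working context plus an external full-fidelity store."""
    budget: int                                               # max summaries kept in context
    store: Dict[str, str] = field(default_factory=dict)      # index -> full record
    summaries: Dict[str, str] = field(default_factory=dict)  # index -> short summary
    _next_id: int = 0

    def archive(self, full_text: str, summary: str) -> str:
        """Store the full record externally and keep only a summary in context."""
        index = f"mem-{self._next_id}"
        self._next_id += 1
        self.store[index] = full_text
        self.summaries[index] = summary
        # Evict the oldest summaries; the full records stay retrievable by index.
        while len(self.summaries) > self.budget:
            oldest = next(iter(self.summaries))
            del self.summaries[oldest]
        return index

    def working_context(self) -> List[str]:
        """What would be placed in the prompt: index-tagged summaries only."""
        return [f"[{i}] {s}" for i, s in self.summaries.items()]

    def retrieve(self, index: str) -> str:
        """Dereference a stable index back to the full-fidelity record."""
        return self.store[index]


memory = IndexedMemory(budget=2)
first = memory.archive("Full log of step 1: ran tests, 3 failures.", "step 1: 3 failures")
memory.archive("Full log of step 2: patched parser.", "step 2: parser patched")
memory.archive("Full log of step 3: tests pass.", "step 3: tests pass")

print(memory.working_context())
print(memory.retrieve(first))
```

The working context never grows past `budget` entries, yet an index handed out earlier still resolves to the original record, which is the behaviour the "stable indices" bullet describes.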

7. FlashAttention-4 — Co-Designed for Blackwell GPUs

FlashAttention-4 co-designs algorithms and kernel pipelines specifically for NVIDIA B200 and GB200 GPUs, where tensor core throughput doubles while other units scale more slowly.

Key findings:

- Achieves up to 1.3x speedup over cuDNN 9.13 and 2.7x over Triton on B200 GPUs in BF16, reaching 1613 TFLOPs/s at 71% hardware utilisation

- Techniques: fully asynchronous matrix multiply pipelines, larger tile sizes, software-emulated exponential and conditional softmax rescaling, tensor memory to reduce shared memory traffic

- Python-native CuTe-DSL implementation achieves 20-30x faster compile times than C++ template approaches

- Demonstrates that Blackwell GPUs require fundamentally new kernel designs, not incremental optimisation of Hopper-era techniques

Takeaway: Hardware-algorithm co-design is essential for next-generation GPUs — existing attention kernels leave significant performance on the table.
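
One way to read "conditional softmax rescaling" is that the running maximum in blockwise (online) softmax is updated, and the accumulators rescaled, only when a new block's maximum exceeds the current reference by more than some margin. The plain-Python sketch below illustrates that numerical idea under this assumption. It is not the FlashAttention-4 kernel, the `threshold` parameter and function names are hypothetical, and the software-emulated exponential is not shown.

```python
import math
from typing import Sequence


def attention_row(scores: Sequence[float], values: Sequence[float],
                  block: int = 2, threshold: float = 0.0) -> float:
    """Softmax-weighted sum of `values` for one query, computed block by block.

    The reference maximum `m` is updated (and the accumulators rescaled) only
    when a block's maximum exceeds `m` by more than `threshold`.
    """
    m = float("-inf")  # reference maximum subtracted inside exp()
    denom = 0.0        # running sum of exp(score - m)
    acc = 0.0          # running sum of exp(score - m) * value
    for start in range(0, len(scores), block):
        s_blk = scores[start:start + block]
        v_blk = values[start:start + block]
        blk_max = max(s_blk)
        if blk_max > m + threshold:
            scale = math.exp(m - blk_max)  # exp(-inf) == 0.0 on the first block
            denom *= scale
            acc *= scale
            m = blk_max
        for s, v in zip(s_blk, v_blk):
            p = math.exp(s - m)  # may slightly exceed 1 when a rescale was skipped
            denom += p
            acc += p * v
    return acc / denom


def naive_row(scores: Sequence[float], values: Sequence[float]) -> float:
    """Reference: ordinary softmax over all scores at once."""
    mx = max(scores)
    weights = [math.exp(s - mx) for s in scores]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)


scores = [0.1, 2.0, 2.5, -1.0, 3.0, 0.3]
values = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
always = attention_row(scores, values, threshold=0.0)
lazy = attention_row(scores, values, threshold=1.0)
exact = naive_row(scores, values)
```

Because the same reference maximum is used in both the numerator and the denominator, skipping a rescale does not change the result mathematically. It only lets the exponentials grow up to `exp(threshold)`, so the margin has to stay small enough for the number format in use.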

8. STRUCTUREDAGENT — Hierarchical Planning for Web Tasks

STRUCTUREDAGENT introduces hierarchical planning for long-horizon web tasks using dynamic AND/OR trees, separating tree construction from LLM invocation (used only for local node expansion or repair).

Key findings:

- Dynamic AND/OR tree structure enables interpretable hierarchical plans for easier debugging and human intervention

- A structured memory module tracks candidate solutions to improve constraint satisfaction

- Shows improved performance over standard LLM-based web agents on WebVoyager, WebArena, and custom shopping benchmarks

Takeaway: Separating planning structure from LLM calls reduces hallucination risk and improves interpretability in complex web navigation tasks.
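
The AND/OR tree semantics can be sketched as follows: an AND node is solved when all children are solved, an OR node when any child is. The `Node` class, its field names, and the shopping goals are hypothetical, and the LLM-driven node expansion and repair described above are left out.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    goal: str
    kind: str = "LEAF"  # "AND", "OR", or "LEAF"
    children: List["Node"] = field(default_factory=list)
    done: bool = False  # only meaningful for leaves

    def solved(self) -> bool:
        if self.kind == "AND":
            return all(c.solved() for c in self.children)
        if self.kind == "OR":
            return any(c.solved() for c in self.children)
        return self.done

    def next_leaf(self) -> Optional["Node"]:
        """Return the first unsolved leaf that could still advance this goal."""
        if self.solved():
            return None
        if self.kind == "LEAF":
            return self
        for child in self.children:
            leaf = child.next_leaf()
            if leaf is not None:
                return leaf
        return None


plan = Node("buy a laptop within budget", "AND", [
    Node("find candidates", "OR", [Node("search store A"), Node("search store B")]),
    Node("check price constraint"),
])

step1 = plan.next_leaf()
step1.done = True          # store A search succeeded, so the OR node is solved
step2 = plan.next_leaf()   # store B is skipped; the AND node moves on
step2.done = True
print(step1.goal, "->", step2.goal, "| solved:", plan.solved())
```

In this sketch an unsolved OR node with no children returns `None` from `next_leaf`. That is the point where a planner would need to expand or repair the node, which the summary says is the only place the LLM is invoked.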

9. AgentIR — Reasoning-Aware Retrieval for Deep Research Agents

AgentIR starts from the observation that deep research agents generate rich reasoning traces before each search call, yet existing retrievers ignore these signals.

Key findings:

- Reasoning-aware retrieval jointly embeds the agent's reasoning trace alongside its query

- DR-Synth synthesises training data from standard QA datasets

- Paired with Tongyi-DeepResearch, AgentIR-4B achieves 68% accuracy on BrowseComp-Plus, versus 50% for conventional embedding models twice its size and 37% for BM25

Takeaway: Leveraging reasoning traces as retrieval signals provides dramatic accuracy gains over query-only retrieval, even with much smaller models.
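
The effect of embedding the reasoning trace together with the query can be shown with a toy retriever. The bag-of-words `embed` function is a deliberately crude stand-in for a trained encoder, and the documents, query, and reasoning text are invented for the example. None of this reflects AgentIR's actual model or training data.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a stand-in for a trained encoder."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: List[str], reasoning: str = "") -> str:
    """Rank docs against the query, optionally prefixed by the reasoning trace."""
    q = embed(f"{reasoning} {query}".strip())
    return max(docs, key=lambda d: cosine(q, embed(d)))


docs = [
    "jaguar top speed in the wild",
    "jaguar car engine specifications and top speed",
]
query = "jaguar top speed"
reasoning = "the user asked about vintage car models so I need engine data"

print(retrieve(query, docs))             # query only: the animal document wins
print(retrieve(query, docs, reasoning))  # with the trace: the car document wins
```

The query alone is ambiguous, and the extra terms from the reasoning trace tip the ranking toward the document the agent actually needs.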

10. Think Harder or Know More — Adaptive Looping vs. Memory Banks

This paper investigates transformers with both adaptive per-layer looping (learned halting mechanism) and gated memory banks (additional learned storage).

Key findings:

- Looping primarily benefits mathematical reasoning; memory banks help recover commonsense task performance

- Combining both outperforms an iso-FLOP baseline with 3x the number of layers on math benchmarks

- Layer specialisation analysis: early layers loop minimally and access memory sparingly; later layers do both more heavily

Takeaway: Compute reuse (looping) and knowledge storage (memory banks) address complementary cognitive needs — combining them is more efficient than simply adding more layers.
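
The two mechanisms can be sketched on plain lists of floats. In the paper both the halting mechanism and the memory gate are learned. Here the layer, the halting score, the memory contents, and all function names are fixed, hypothetical stand-ins that only show the control flow.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def looped_layer(h: Vector, layer: Callable[[Vector], Vector],
                 halt_score: Callable[[Vector], float],
                 max_loops: int = 4, threshold: float = 0.5) -> Tuple[Vector, int]:
    """Apply the same layer repeatedly until the halting gate fires."""
    loops = 0
    while loops < max_loops:
        h = layer(h)
        loops += 1
        if sigmoid(halt_score(h)) > threshold:
            break
    return h, loops


def gated_memory_read(h: Vector, keys: List[Vector], values: List[Vector],
                      gate_logit: float) -> Vector:
    """Add a softmax-weighted read from a memory bank, scaled by a gate in (0, 1)."""
    scores = [sum(a * b for a, b in zip(h, k)) for k in keys]
    mx = max(scores)
    weights = [math.exp(s - mx) for s in scores]
    total = sum(weights)
    read = [sum(w / total * v[i] for w, v in zip(weights, values))
            for i in range(len(h))]
    g = sigmoid(gate_logit)
    return [x + g * r for x, r in zip(h, read)]


# Toy layer halves the state; halting fires once the state is small enough.
out, n_loops = looped_layer(
    [1.0, 1.0],
    layer=lambda h: [0.5 * x for x in h],
    halt_score=lambda h: 1.0 - 3.0 * sum(abs(x) for x in h),
)
mixed = gated_memory_read([0.0, 0.0],
                          keys=[[1.0, 0.0], [0.0, 1.0]],
                          values=[[1.0, 0.0], [0.0, 1.0]],
                          gate_logit=0.0)
print(n_loops, out, mixed)
```

Looping spends extra compute on the same parameters, while the memory read injects stored information without extra depth, which mirrors the complementary roles described above.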

Overall Themes This Week

| Theme | Papers |
| --- | --- |
| Agentic coding & task execution | OpenDev, AutoHarness, STRUCTUREDAGENT |
| Memory & context management | Memex(RL), SkillNet, AgentIR |
| Training & RL for agents | KARL, Think Harder or Know More |
| Transformer architecture analysis | The Spike, the Sparse and the Sink |
| Hardware optimisation | FlashAttention-4 |

The week's dominant narrative: AI agents are maturing beyond raw LLM capability — structured constraints, intelligent memory, skill reuse, and hardware-aware design are emerging as the key levers for production-grade AI systems.