LLM Watch Weekly: When Scale Isn't Enough (Feb 27, 2026)

Originally published on LLM Watch by Pascal Biese — February 27, 2026.

Summary

This week's LLM Watch Weekly digs into three key papers that challenge conventional assumptions about scaling, fine-tuning, and multi-turn RAG, plus a selection of shorter picks, many of them specialised benchmarks. The central theme: bigger isn't always better.

Scale Can't Overcome Pragmatics: Reporting Bias in Vision-Language Models

Scale Can't Overcome Pragmatics — The paper challenges the assumption that reasoning capabilities like counting, spatial relationships, negation, and temporal reasoning will emerge with scale.

The core problem: Humans don't caption images with information that's "visually obvious." A photo captioned "at the game today!" doesn't include counts, spatial prepositions, or temporal details, because the communicative purpose of a caption is to add context, not to describe the obvious. This "reporting bias" means even web-scale datasets systematically lack the annotations needed to supervise these reasoning skills.

Key findings:

- Counting information appeared in fewer than 8% of captions across datasets

- Spatial prepositions beyond "on" and "in" were rare

- Testing across OpenCLIP, LLaVA-1.5, and Molmo confirmed: scaling along data size, model size, and language diversity did not help

- But targeted annotation collection of tacit information substantially improved performance
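
As a rough illustration of how a statistic like the counting figure can be measured, here is a minimal sketch that flags captions mentioning a count. The number-word list and the digit rule are illustrative assumptions, not the paper's annotation procedure; a real study would need much more careful matching (for example, "one" is deliberately left out because it is rarely used as a count in captions).

```python
import re

# Illustrative assumption: a caption "mentions a count" if it contains a digit
# or one of these English number words. This is NOT the paper's method.
# "one" is excluded on purpose ("one of the best days" is not a count).
NUMBER_WORDS = {
    "two", "three", "four", "five", "six", "seven", "eight", "nine", "ten",
    "dozen", "pair", "couple",
}

def mentions_count(caption: str) -> bool:
    """Return True if the caption contains a digit or a listed number word."""
    tokens = re.findall(r"[a-z]+|\d+", caption.lower())
    return any(t.isdigit() or t in NUMBER_WORDS for t in tokens)

def count_rate(captions: list[str]) -> float:
    """Fraction of captions that mention a count (0.0 for an empty list)."""
    if not captions:
        return 0.0
    return sum(mentions_count(c) for c in captions) / len(captions)

# Toy captions invented for illustration, not drawn from any dataset.
captions = [
    "at the game today!",
    "best pasta in town",
    "three dogs on the beach",
    "sunset vibes",
]
print(count_rate(captions))  # 0.25
```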

Takeaway: If your application requires counting, spatial reasoning, or negation, expect failures regardless of model size. The fix is targeted data curation, not larger models.

Fine-Tuning Without Forgetting In-Context Learning

Fine-Tuning Without Forgetting ICL — The paper addresses a classic trade-off: fine-tuning improves zero-shot performance but degrades in-context (few-shot) learning ability.

The insight: attention can be decomposed into query/key projections (which control where to look) and value matrices (which control what is extracted). Fine-tuning all parameters corrupts the representations that enable in-context learning, but restricting updates to the value matrices preserves ICL while still delivering zero-shot improvements.
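
To make the "where to look" versus "what to extract" split concrete, here is a minimal single-query attention sketch in plain Python. The vectors are assumed to be already projected (the multiplication by W_Q, W_K and W_V has happened), and `values_b` is a hypothetical stand-in for the result of a value-only update. Within a single layer, changing V leaves the attention weights untouched and changes only what gets mixed; in a deep network, later layers still see the changed outputs, so this is an intuition for the paper's argument rather than a proof of it.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(q, keys, values):
    """Single-query scaled dot-product attention.

    The query and keys alone determine the weights (where to look);
    the values determine the content those weights mix (what to extract).
    """
    d = len(q)
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
    weights = softmax(scores)
    out = [sum(w * v[j] for w, v in zip(weights, values))
           for j in range(len(values[0]))]
    return weights, out

q = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0]]
values_a = [[1.0, 0.0], [0.0, 1.0]]
values_b = [[5.0, 5.0], [0.0, 1.0]]  # hypothetical values after a V-only update

w_a, out_a = attend(q, keys, values_a)
w_b, out_b = attend(q, keys, values_b)
print(w_a == w_b)      # True: attention pattern unchanged
print(out_a != out_b)  # True: extracted content changed
```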

Key findings:

- Value-matrix-only fine-tuning preserved 94% of original few-shot performance

- Full parameter fine-tuning reduced few-shot accuracy by 23-31%

- An auxiliary few-shot loss improved ICL on the fine-tuning task by 12% but degraded held-out tasks by 8-15%

Takeaway: Freeze the query and key projections and update only the value matrices. This is easy to implement in standard training frameworks and requires no architectural changes.
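
A minimal sketch of the selection step, assuming LLaMA-style Hugging Face parameter names (`q_proj`, `k_proj`, `v_proj`, `o_proj`). The naming pattern is an assumption that varies by architecture: GPT-2, for instance, fuses Q, K and V into a single `c_attn` weight, which would need gradient masking rather than a name filter. Everything that is not a value projection (output projection, MLP, embeddings) is frozen here, which is one reading of "update only value matrices"; the parameter names below are illustrative.

```python
import re

# Assumed naming convention (LLaMA-style Hugging Face models); verify against
# your own model's named_parameters() output before using it.
VALUE_PATTERN = re.compile(r"\.v_proj\.")

def is_trainable(param_name: str) -> bool:
    """Train only value-projection weights; freeze everything else."""
    return bool(VALUE_PATTERN.search(param_name))

# In PyTorch this would be applied roughly as:
#   for name, p in model.named_parameters():
#       p.requires_grad = is_trainable(name)

# Illustrative parameter names for one decoder layer.
names = [
    "model.embed_tokens.weight",
    "model.layers.0.self_attn.q_proj.weight",
    "model.layers.0.self_attn.k_proj.weight",
    "model.layers.0.self_attn.v_proj.weight",
    "model.layers.0.self_attn.o_proj.weight",
    "model.layers.0.mlp.up_proj.weight",
    "lm_head.weight",
]
print([n for n in names if is_trainable(n)])
# ['model.layers.0.self_attn.v_proj.weight']
```

A name filter keeps the change to a few lines of training code, which matches the point that no architectural changes are needed; the optimizer should then be built only from parameters with `requires_grad` set.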

MTRAG-UN: Multi-Turn RAG Conversations in the Wild

MTRAG-UN — A benchmark of 666 tasks and 2,800+ conversation turns across six domains, built to target real-world RAG failure modes.

The failure modes under test:

- Unanswerable questions (corpus lacks the answer)

- Underspecified questions (multiple valid interpretations)

- Non-standalone questions (require conversational context)

- Unclear responses (ambiguous or incomplete outputs)

Key findings:

- Even the best models correctly identified unanswerable questions only 38% of the time; in the remaining cases they hallucinated an answer

- Retrieval accuracy on non-standalone questions was 41%, vs. 73% on standalone questions: a 32-percentage-point drop

- Models asked for clarification on underspecified questions only 11% of the time (defaulting to one interpretation without acknowledging ambiguity in 89% of cases)

- By the 5th conversation turn, retrieval accuracy had dropped 18 percentage points from turn 1
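
Percentage points and percentages are easy to conflate. A quick sketch using the standalone (73%) and non-standalone (41%) retrieval figures reported for MTRAG-UN separates the absolute gap from the relative one:

```python
def pp_drop(before_pct: float, after_pct: float) -> float:
    """Absolute drop, in percentage points."""
    return before_pct - after_pct

def relative_drop(before_pct: float, after_pct: float) -> float:
    """Drop as a fraction of the starting value."""
    return (before_pct - after_pct) / before_pct

standalone, non_standalone = 73.0, 41.0  # retrieval accuracy (%)
print(pp_drop(standalone, non_standalone))                        # 32.0
print(round(100 * relative_drop(standalone, non_standalone), 1))  # 43.8
```

In relative terms, retrieval on non-standalone questions loses roughly 44% of its standalone accuracy. The turn-5 figure can only be stated in percentage points, since no turn-1 baseline is given here.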

Takeaway: Single-turn benchmark performance is deeply misleading for conversational RAG. Design explicitly for unanswerable detection, clarification requests, and context compression.
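
One way to act on this takeaway is an explicit per-turn decision before generation: abstain, ask for clarification, or answer. The sketch below is hypothetical: the `Retrieved` type, the per-passage `interpretation` labels (which would have to come from some upstream step, such as an LLM or clustering), and both thresholds are assumptions, not part of MTRAG-UN. It also does not handle non-standalone questions, which would need a query-rewriting step before retrieval.

```python
from dataclasses import dataclass

@dataclass
class Retrieved:
    passage_id: str
    score: float          # assumed retriever relevance score in [0, 1]
    interpretation: str   # hypothetical label: which reading of the question it supports

# Hypothetical thresholds; these would need tuning on held-out conversations.
MIN_SCORE = 0.5
AMBIGUITY_MARGIN = 0.05

def decide(hits: list[Retrieved]) -> str:
    """Return 'abstain', 'clarify', or 'answer' for one conversation turn."""
    relevant = [h for h in hits if h.score >= MIN_SCORE]
    if not relevant:
        # Nothing relevant retrieved: say the corpus lacks the answer instead of guessing.
        return "abstain"
    relevant.sort(key=lambda h: h.score, reverse=True)
    top = relevant[0]
    for h in relevant[1:]:
        # A different interpretation with a near-tied score suggests ambiguity.
        if h.interpretation != top.interpretation and top.score - h.score <= AMBIGUITY_MARGIN:
            return "clarify"
    return "answer"

print(decide([Retrieved("p1", 0.3, "a")]))  # abstain
print(decide([Retrieved("p1", 0.82, "bank=finance"),
              Retrieved("p2", 0.80, "bank=river")]))  # clarify
print(decide([Retrieved("p1", 0.9, "a"), Retrieved("p2", 0.6, "b")]))  # answer
```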

Additional Papers This Week

SC-Arena — A benchmark for single-cell biology reasoning. Models achieve 78% on cell type annotation but only 34% on perturbation prediction, confirming a pattern/mechanism split: models learn to recognize patterns, not to understand causal mechanisms.

PATRA — Pattern-aware alignment for time series QA. Argues that current text and image representations of time series miss the structural patterns experts actually rely on.

pMoE — Prompting diverse MoE experts together for better visual adaptation.

MM-NeuroOnco — Multimodal benchmark for MRI-based brain tumor diagnosis.

SPM-Bench — Benchmarking LLMs for scanning probe microscopy.

SOTAlign — Semi-supervised alignment of vision and language models via optimal transport.

RhythmBERT — Self-supervised language model on ECG waveforms for heart disease detection.

InnerQ — Hardware-aware, tuning-free KV cache quantization for LLMs.

Key Takeaways

- Scaling doesn't fix data bias: Reporting bias in training data is a structural problem; targeted curation beats more of the same biased data

- Fine-tune smarter: Freeze Q/K projections and update only the value matrices to preserve few-shot flexibility

- Conversational RAG is broken: Single-turn benchmarks hide catastrophic failure rates on realistic multi-turn interactions

- Pattern ≠ mechanism: Models recognize patterns well; causal/mechanistic reasoning remains deeply limited across domains (VLMs, biology, time series)

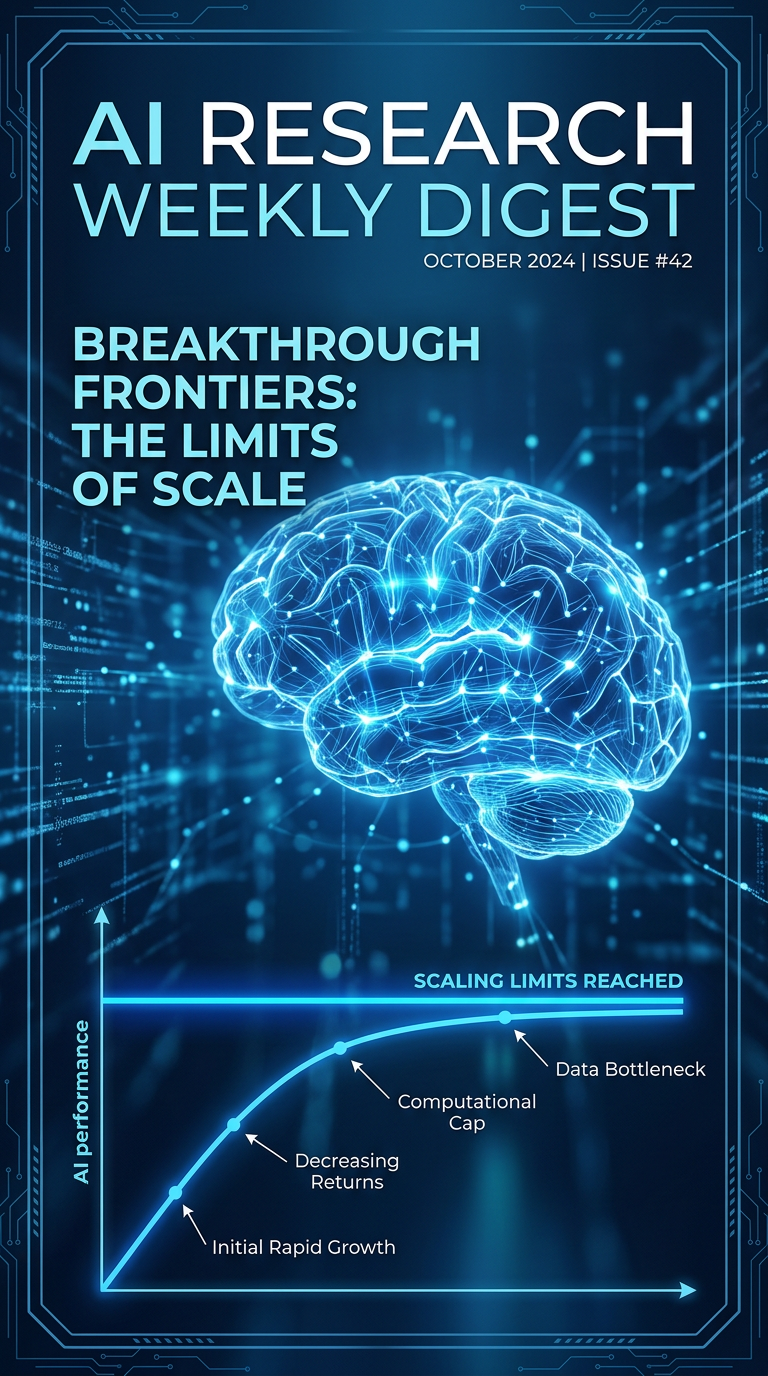

Infographics