Does Agents Actually Help Coding

Does AGENTS.md Actually Help Coding Agents? A New Study Has Answers

Summary of Elvis Saravia's AI Newsletter, Feb 26, 2026

Main Thesis

Developers widely assume that repository-level context files — CLAUDE.md, AGENTS.md, CONTRIBUTING.md — make coding agents meaningfully better. A new paper from ETH Zurich's SRI Lab puts that assumption to a rigorous empirical test, and the results are more nuanced than most practitioners expect.

Paper: Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?

Background: The Problem

- Context files have proliferated alongside coding agents, but adoption has outpaced evaluation — developers write them, agents read them, and everyone assumed the relationship was positive.

- Standard benchmarks like SWE-bench mostly cover popular repositories, which tend not to have context files, making them a poor testbed for this question.

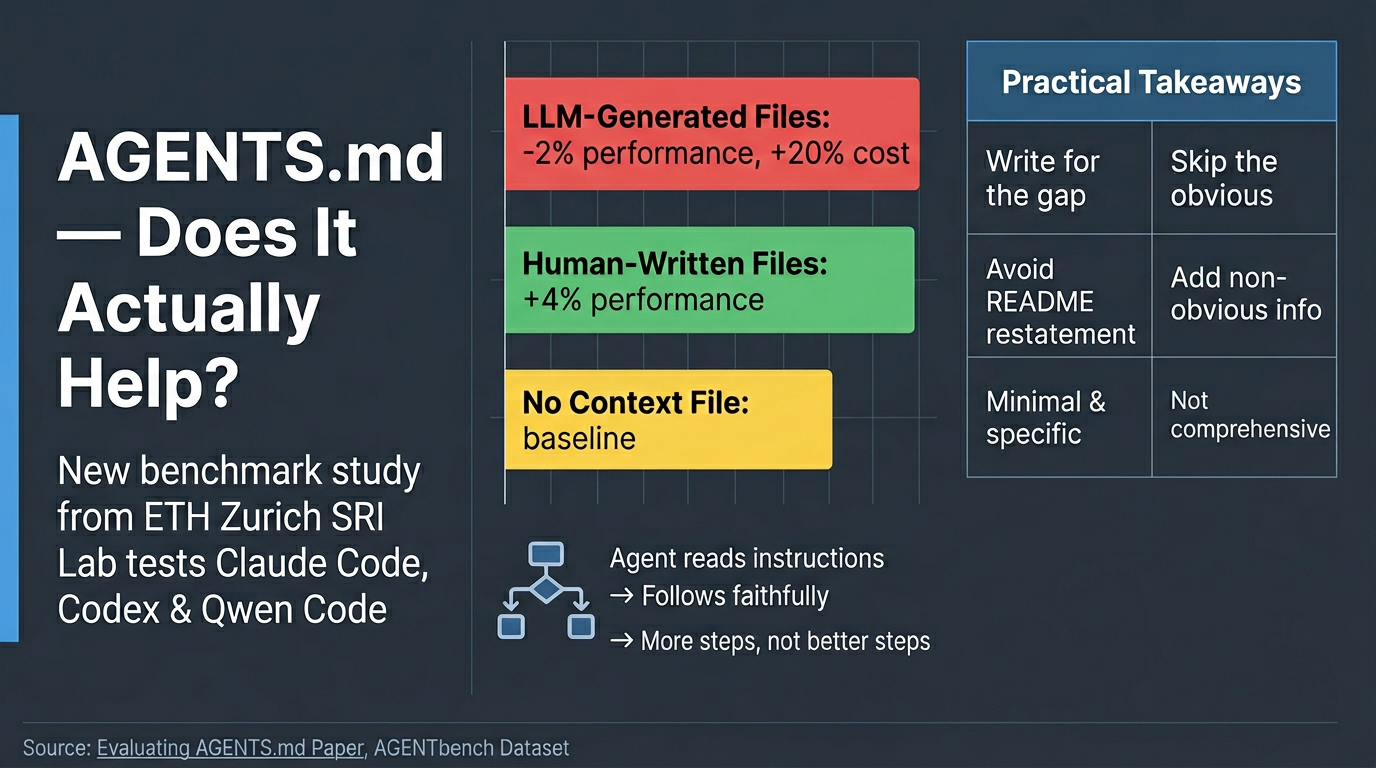

The New Benchmark: AGENTbench

- The paper introduces AGENTbench: 138 task instances from 12 less-popular Python repositories, all of which already have developer-written context files.

- Context files in AGENTbench average 641 words across 9.7 sections — detailed, real-world guidance, not trivial one-liners.

- Three agents were tested: Claude Code (Sonnet-4.5), Codex (GPT-5.2 / GPT-5.1 mini), and Qwen Code (Qwen3-30b-coder).

- Each agent ran tasks under three conditions: no context file, LLM-generated context file, and developer-written context file.

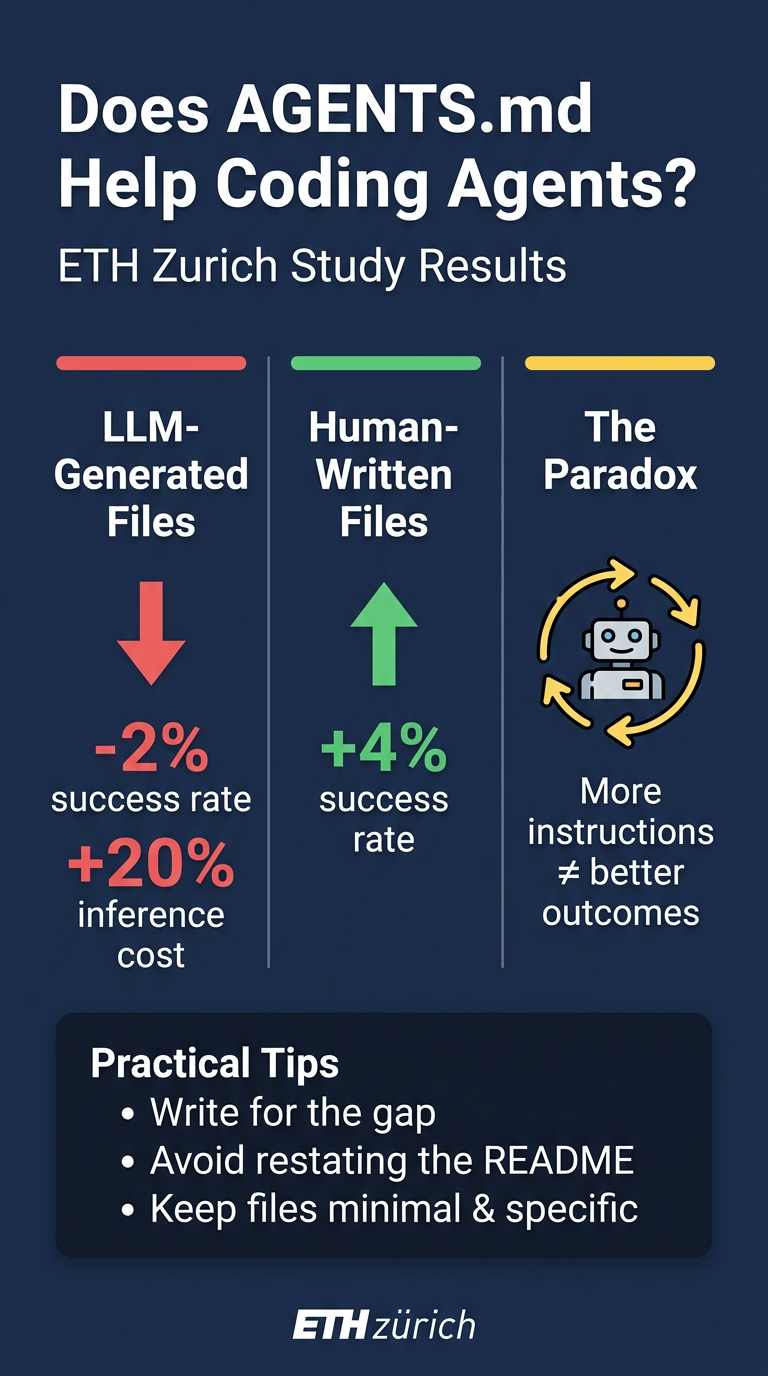

Key Findings

🔴 LLM-Generated Context Files Hurt Performance

- On SWE-bench Lite: LLM-generated files drop task success by ~0.5%.

- On AGENTbench: the drop is ~2%.

- Across all conditions, context files increase inference cost by 14–22% more reasoning tokens and 2–4 additional steps per task — regardless of whether they help.

🟢 Human-Written Context Files Help (On Their Own Turf)

- Human-written files produce a ~4% improvement over no context on average across both benchmarks.

- The gain is real, but it is benchmark- and file-quality-dependent.

⚡ The Instruction-Following Paradox

- Agents follow context file instructions faithfully: when

uvis mentioned, usage jumps to 1.6× per instance vs. fewer than 0.01× without it. - But more instruction-following ≠ better outcomes. Agents explore more, run more tests, traverse more files — without meaningfully reaching the right code faster.

- "A map of the whole city doesn't tell you which building to walk into."

🔍 Why Human Files Win: The Redundancy Problem

- LLM-generated files tend to restate information already in READMEs and docs — additive noise, not additive value.

- When existing documentation was removed before generating context files, LLM-generated files improved by 2.7% and actually outperformed human-written ones.

- Human-written files capture non-obvious, non-redundant information: quirky CI setups, non-default tooling choices, undocumented conventions.

Limitations

- Study limited to Python repositories — generalisability to TypeScript, Rust, multi-language codebases is unknown.

- Only measures issue resolution success, not security, consistency, or convention adherence.

- No longitudinal data on how context file quality or agent utilisation evolves over time.

Practical Takeaways

| Principle | Detail |

| Write for the gap | Only encode what the repo doesn't already explain — non-default tool choices, unusual test configs, hidden constraints. |

| Avoid restating the README | A CLAUDE.md that duplicates existing docs likely hurts more than it helps. |

| Respect the cost floor | Every context file adds ~20% to inference cost. High-volume pipelines should weigh this carefully. |

| Fix LLM-generated files | Auto-generators should be designed to explicitly avoid restating existing docs and focus on extracting non-obvious conventions. |

| Keep files minimal and specific | Less is more — specificity beats comprehensiveness. |

Bottom Line

Context files are not magic, but not useless. Human-written, specific, non-redundant files improve agent performance. Auto-generated files that recycle existing documentation actively reduce it. In both cases, the mechanism is the same: agents follow instructions, and outcome quality depends entirely on instruction quality. Getting this balance right is both a context file design problem and a model training problem.

Resources

- Paper: Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?

- AGENTbench Dataset