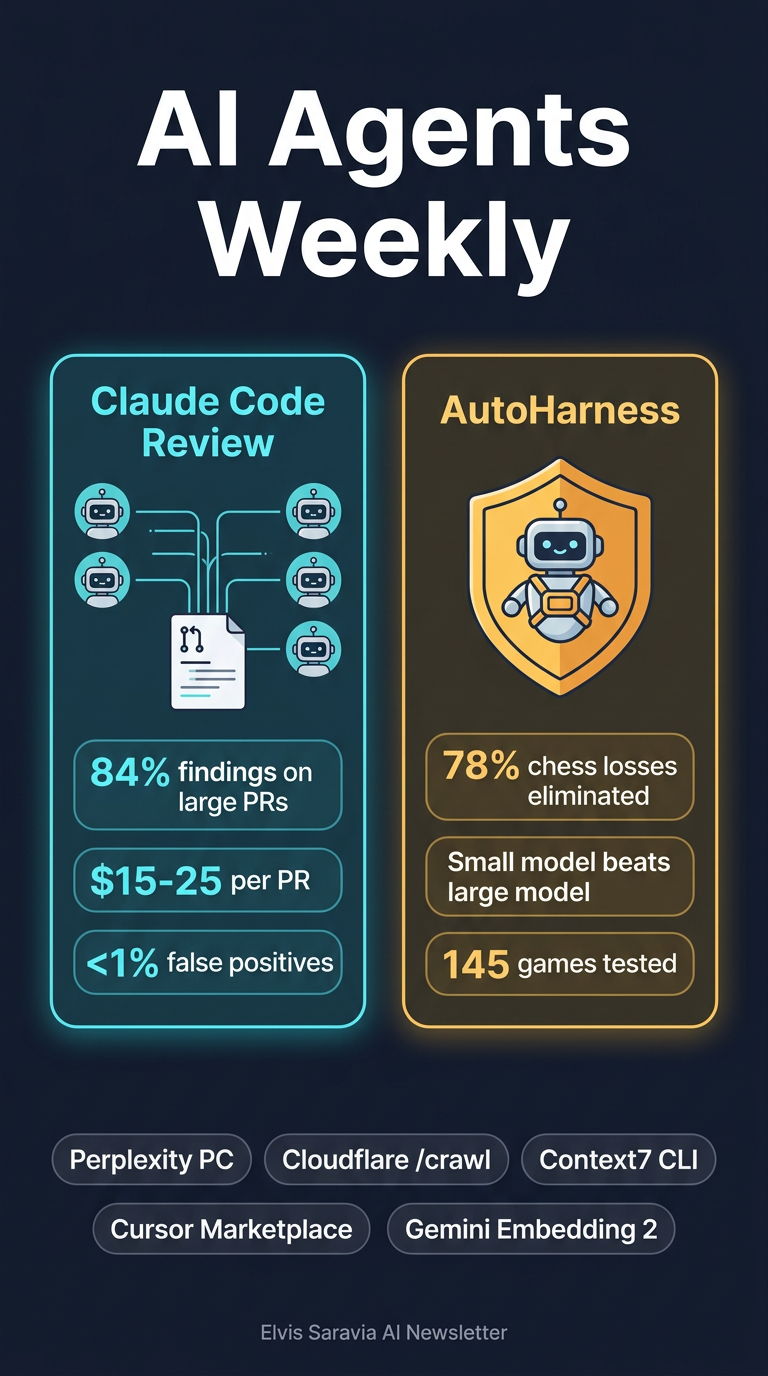

AI Agents Weekly: Claude Code Review, AutoHarness & More

From Elvis Saravia's AI Newsletter — March 14, 2026

Main Thesis

This issue highlights a wave of practical AI agent tooling shipping across the industry, with a particular focus on multi-agent code review and automated safety constraint synthesis — two patterns that point toward more reliable, production-ready AI agent deployments.

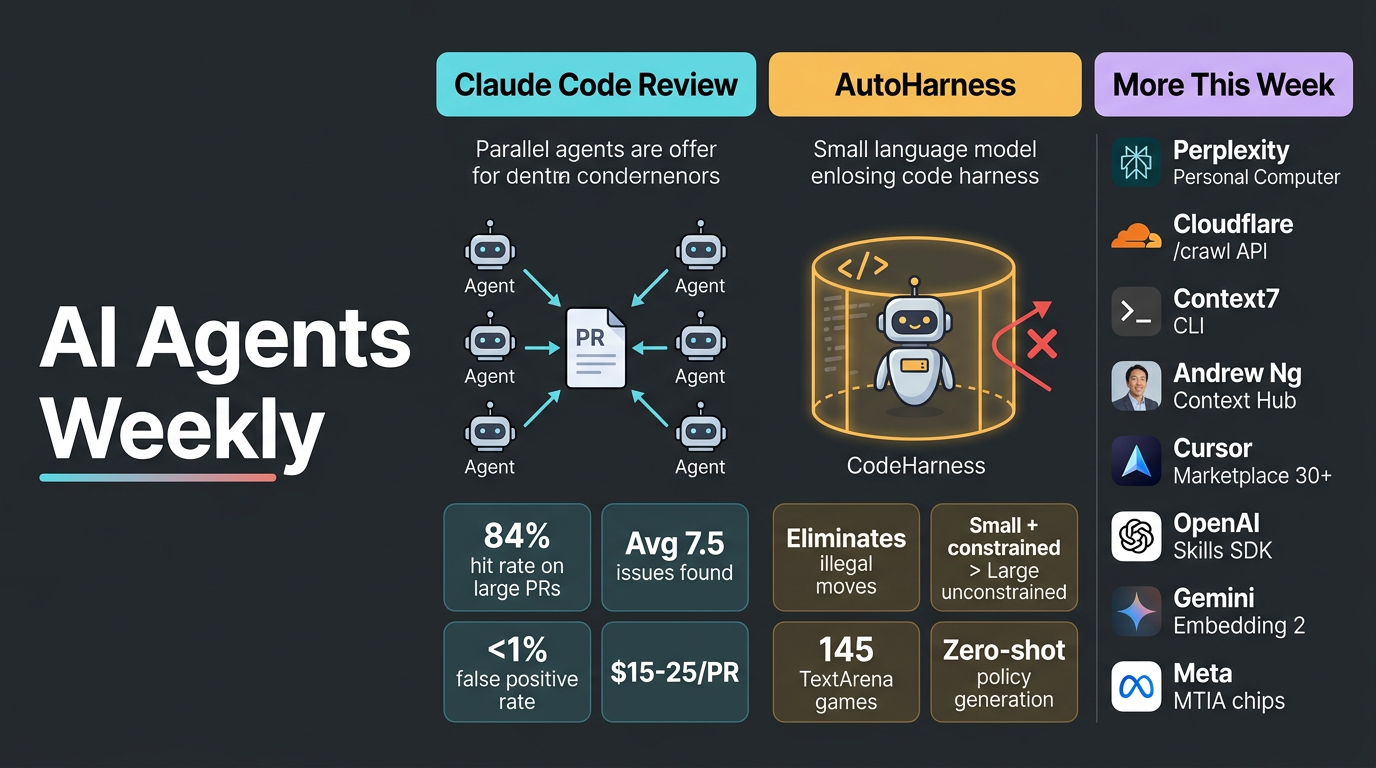

🔍 Top Story 1: Claude Code Review (Anthropic)

Anthropic launched Code Review for Claude Code, an automated multi-agent system that analyses every pull request using parallel AI agents.

How It Works

- Multiple agents run in parallel to scan, verify findings, and prioritise issues by severity

- Produces both a summary overview comment and targeted inline annotations

- Verification step actively eliminates false positives before surfacing results
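The three steps above (parallel scan, verification, severity ranking) can be sketched in a few lines. This is a minimal illustration of the pattern, not Anthropic's implementation: the `Finding` type, the two toy scanners, the `verify` rule, and the severity scale are all hypothetical stand-ins for what would be LLM-backed agents.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass

# Hypothetical severity scale: a lower number is reported first.
SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}

Diff = dict[str, list[str]]  # file path -> changed lines (toy representation)


@dataclass(frozen=True)
class Finding:
    file: str
    line: int       # 1-based line number within the file's changed lines
    severity: str
    message: str


def scan_for_secrets(diff: Diff) -> list[Finding]:
    """Stand-in for one reviewer agent: flags lines that look like hard-coded secrets."""
    return [
        Finding(path, i, "critical", "possible hard-coded secret")
        for path, lines in diff.items()
        for i, text in enumerate(lines, start=1)
        if "password =" in text
    ]


def scan_for_todos(diff: Diff) -> list[Finding]:
    """Stand-in for a second reviewer agent: flags leftover TODO markers."""
    return [
        Finding(path, i, "minor", "unresolved TODO")
        for path, lines in diff.items()
        for i, text in enumerate(lines, start=1)
        if "TODO" in text
    ]


def verify(finding: Finding, diff: Diff) -> bool:
    """Stand-in for the verification agent: rejects findings on pure comment lines."""
    text = diff[finding.file][finding.line - 1]
    return not text.lstrip().startswith("#")


def review(diff: Diff, scanners) -> list[Finding]:
    # 1. Scan: every agent looks at the same diff in parallel.
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda scan: scan(diff), scanners))
    candidates = [f for batch in batches for f in batch]
    # 2. Verify: drop candidates the verifier cannot confirm (false positives).
    verified = [f for f in candidates if verify(f, diff)]
    # 3. Rank: most severe first, then by location for stable output.
    return sorted(verified, key=lambda f: (SEVERITY_ORDER[f.severity], f.file, f.line))
```

Calling `review(diff, [scan_for_secrets, scan_for_todos])` returns only the findings that survive verification, ordered by severity. Keeping verification as a separate pass means a noisy scanner can be added without directly increasing the noise a reviewer sees.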

Key Findings

- Large PRs (1,000+ lines): the review surfaced findings on 84% of them, averaging 7.5 issues per PR

- Small PRs (<50 lines): the review surfaced findings on 31% of them

- <1% of flagged issues were marked incorrect by Anthropic engineers

- Has caught production-critical bugs in changes that looked routine in the diff

Practical Takeaways

- Available as a research preview for Team and Enterprise customers

- Costs $15–25 per PR, billed on token usage

- Configurable monthly caps and per-repo controls give teams budget guardrails

- The parallel verify-then-rank architecture is a reusable pattern for any high-stakes agent review task
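To see how the per-PR price interacts with a monthly cap, a rough back-of-the-envelope calculation helps. The sketch below assumes every review lands inside the quoted $15–25 range; the PR volume and cap values are made-up examples, and the function names are hypothetical rather than part of any Anthropic tooling.

```python
def monthly_cost_range(
    prs_per_month: int, low: float = 15.0, high: float = 25.0
) -> tuple[float, float]:
    """Return the (low, high) estimated monthly spend in dollars."""
    return prs_per_month * low, prs_per_month * high


def reviews_within_cap(monthly_cap: float, cost_per_pr: float = 25.0) -> int:
    """Number of reviews that fit under a cap, assuming the top-of-range price."""
    return int(monthly_cap // cost_per_pr)


print(monthly_cost_range(200))     # (3000.0, 5000.0)
print(reviews_within_cap(2000.0))  # 80
```

Because billing is token-based, actual spend will vary with PR size, so an estimate like this is better used to size a cap than to forecast an invoice.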

🛡️ Top Story 2: AutoHarness — Automated Agent Constraint Synthesis

Researchers introduced AutoHarness, a technique where LLMs automatically generate protective code harnesses around themselves to prevent illegal or invalid actions — without human-written constraints.

How It Works

- Uses iterative code refinement with environmental feedback to synthesise custom safeguards

- Harnesses make illegal states structurally unreachable, shifting safety from model behaviour to environment design

- Tested across 145 different TextArena games, generalising broadly
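The idea above can be illustrated with a toy board game. This is a sketch under heavy assumptions, not the paper's method: the three `draft_*` functions stand in for successive LLM-written harness candidates, `env_is_legal` stands in for feedback from the environment, and the fallback rule in `harnessed` is an arbitrary choice made for the example.

```python
from itertools import product
from typing import Callable, Optional

State = tuple[Optional[str], ...]        # toy board: one entry per cell, None = empty
Harness = Callable[[State, int], bool]   # True if the action may reach the environment


def env_is_legal(state: State, action: int) -> bool:
    """Ground truth, observed only as accept/reject feedback from the environment."""
    return 0 <= action < len(state) and state[action] is None


# Hypothetical stand-ins for harness code an LLM might write on successive attempts.
def draft_1(state: State, action: int) -> bool:
    return True                                      # no constraint at all


def draft_2(state: State, action: int) -> bool:
    return 0 <= action < len(state)                  # bounds check only


def draft_3(state: State, action: int) -> bool:
    return 0 <= action < len(state) and state[action] is None


# Exhaustive probes for a 3-cell board, including out-of-range actions.
PROBES = [(s, a) for s in product((None, "X", "O"), repeat=3) for a in range(-1, 4)]


def counterexamples(harness: Harness, probes) -> list:
    """Probes on which the harness disagrees with the environment."""
    return [(s, a) for s, a in probes if harness(s, a) != env_is_legal(s, a)]


def synthesise(drafts, probes) -> Harness:
    """Accept the first draft with no counterexamples.

    In a real loop the failures would be sent back to the LLM to produce the
    next draft; here the drafts are fixed in advance for illustration.
    """
    for draft in drafts:
        if not counterexamples(draft, probes):
            return draft
    raise RuntimeError("no draft matched the environment")


def harnessed(policy: Callable[[State], int], harness: Harness) -> Callable[[State], int]:
    """Wrap a policy so that only harness-approved actions are ever returned."""
    def act(state: State) -> int:
        action = policy(state)
        if harness(state, action):
            return action
        legal = [a for a in range(len(state)) if harness(state, a)]
        if not legal:
            raise ValueError("no legal action available")
        return legal[0]                              # assumed fallback: first legal action
    return act
```

With `harness = synthesise([draft_1, draft_2, draft_3], PROBES)`, wrapping even a careless policy such as `lambda state: 0` yields an agent that cannot emit an illegal move on this board: the illegal choice is replaced before it reaches the environment, which is the sense in which the illegal state becomes unreachable.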

Key Findings

- In a recent LLM chess competition, 78% of Gemini-2.5-Flash losses were due to illegal moves — AutoHarness eliminates this failure class entirely

- Gemini-2.5-Flash with AutoHarness outperformed an unconstrained Gemini-2.5-Pro at lower cost: in this setting, a smaller constrained model beat a larger unconstrained one

- Extends to zero-shot policy generation in code, removing runtime LLM decision-making entirely

- Achieves higher rewards than GPT-5.2-High on certain benchmarks

Practical Takeaways

- The core insight: auto-generate a verified harness rather than trusting a model to self-constrain

- Applies broadly to any agent deployment where invalid actions are a risk

- Significant cost efficiency gains available by pairing smaller models with strong harnesses

📰 Other Headlines (Paywalled — Titles Only)

| Item | What It Is |
| --- | --- |
| Perplexity Personal Computer | Always-on AI personal computer product launch |
| Cloudflare /crawl endpoint | Single-call web crawling API for agents |
| Context7 CLI | Brings up-to-date library docs to any agent |
| Andrew Ng: Context Hub | New tool/platform launch from Andrew Ng |
| Cursor Marketplace | 30+ new plugins added |
| OpenAI Skills for Agents SDK | New SDK for composable agent skills |
| Gemini Embedding 2 | Google's next-gen embedding model |
| Meta MTIA chips | Four AI chips shipped in two years |
| Codex agent taxes | Codex agent filed taxes and caught a $20K error |

🔗 Papers

- AutoHarness: Automated Agent Constraint Synthesis (paper)

Key Takeaways for AI Practitioners

- Multi-agent parallelism with verification is emerging as a go-to pattern for reliable AI review systems; Claude Code Review is a live example at scale

- Constraint-first agent design (AutoHarness) is a more cost-effective path to reliability than simply scaling up model size

- The industry is rapidly shipping agent-native infrastructure (Cloudflare /crawl, Context7, Cursor plugins) that lowers the barrier to building production agents

- Safety is increasingly being pushed into environment design rather than relying solely on model alignment