AI Agents of the Week: Papers You Should Know About (LLM Watch, Mar 08 2026)

Originally published on LLM Watch by Pascal Biese — March 8, 2026.

Summary

This week's LLM Watch covers significant advances in agent memory, planning, multi-agent collaboration, and evaluation — plus practical tools for agent developers.

Memory & Continual Learning Gains

Two complementary papers tackle how agents manage knowledge across extended interactions:

Memex(RL) introduces an indexed experience memory mechanism that addresses the fundamental context-window bottleneck in long-horizon tasks. Rather than relying on lossy summarization, it maintains compact indices while storing full-fidelity interactions in an external database, so agents can recover exact past evidence on demand.
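To make the "compact index plus external full-fidelity store" idea concrete, here is a minimal sketch. It is not Memex(RL)'s implementation. The class and method names (`IndexedMemory`, `record`, `index_view`, `recall`) are hypothetical, a plain dict stands in for the external database, and the RL training that teaches the agent *when* to recall is not modeled.

```python
from dataclasses import dataclass, field


@dataclass
class IndexedMemory:
    """Hypothetical sketch: compact index in context, full records outside it."""

    # Stand-in for an external database: index key -> full-fidelity interaction text.
    store: dict[str, str] = field(default_factory=dict)
    # Compact (key, short label) entries that would be kept in the agent's context.
    index: list[tuple[str, str]] = field(default_factory=list)

    def record(self, label: str, full_text: str) -> str:
        """Store the full interaction externally and add a short index entry."""
        key = f"mem-{len(self.index)}"
        self.store[key] = full_text
        self.index.append((key, label))
        return key

    def index_view(self) -> str:
        """Render only the compact index, i.e. what would occupy the context window."""
        return "\n".join(f"[{key}] {label}" for key, label in self.index)

    def recall(self, key: str) -> str:
        """Return the exact original interaction on demand (no summarization loss)."""
        return self.store[key]


memory = IndexedMemory()
k = memory.record("ran tests: 2 failures in parser", "FAILED test_parse_empty ... (full log)")
print(memory.index_view())  # [mem-0] ran tests: 2 failures in parser
print(memory.recall(k))     # FAILED test_parse_empty ... (full log)
```

The design point is that only `index_view()` grows the context, one short line per interaction, while `recall()` returns the stored text verbatim instead of a summary of it.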

SkillNet tackles the persistent problem of agents "reinventing the wheel" by providing infrastructure for creating, evaluating, and organizing over 200,000 reusable skills, improving average rewards by 40% and reducing execution steps by 30% across multiple benchmarks.

These complementary approaches — one preserving episodic memory, the other accumulating procedural knowledge — represent meaningful progress toward agents that learn cumulatively rather than forgetting everything between sessions.

Advances in Planning & Environment Interaction

HiMAP-Travel proposes a hierarchical multi-agent framework that splits planning into strategic coordination and parallel day-level execution. It achieves a 52.78% validation pass rate on TravelPlanner, 8.67 percentage points above sequential baselines, while cutting latency by 2.5x through parallelization.
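The latency gain comes from running the day-level planners concurrently once the strategic layer has split the trip. The sketch below illustrates only that coordination pattern, under stated assumptions: `coordinate`, `plan_day`, and `plan_trip` are hypothetical, the even budget split is an illustrative stand-in for HiMAP-Travel's real coordinator and constraint handling, and `asyncio.sleep` simulates an LLM or tool call.

```python
import asyncio
import time


def coordinate(days: int, budget: float) -> list[dict]:
    """Hypothetical strategic layer: split a trip into per-day sub-goals with a budget share."""
    return [{"day": d + 1, "budget": budget / days} for d in range(days)]


async def plan_day(goal: dict, delay: float) -> str:
    """Hypothetical day-level planner; asyncio.sleep stands in for an LLM/tool call."""
    await asyncio.sleep(delay)
    return f"Day {goal['day']}: itinerary within ${goal['budget']:.0f}"


async def plan_trip(days: int, budget: float, delay: float = 0.1) -> list[str]:
    goals = coordinate(days, budget)
    # Day planners run concurrently, so wall-clock time is roughly one call, not `days` calls.
    return await asyncio.gather(*(plan_day(g, delay) for g in goals))


start = time.perf_counter()
plans = asyncio.run(plan_trip(days=3, budget=900))
print("\n".join(plans))
print(f"elapsed {time.perf_counter() - start:.2f}s; sequential calls would take about 0.3s")
```

With three simulated 0.1 s calls, the concurrent version finishes in roughly the time of one call. A sequential planner would instead pay for each day in turn, which is the trade-off the paper's parallel day-level execution targets.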

T2S-Bench shows that explicitly structuring text with the authors' "Structure of Thought" prompting technique yields an average improvement of +5.7% across eight text-processing tasks, and fine-tuning raises the gain to +8.6%. This suggests that how agents organize information internally can matter as much as what information they access.

Multi-Agent Collaboration & Control

HACRL enables bidirectional mutual learning between heterogeneous agents by sharing verified rollouts during training. Its HACPO algorithm outperforms GSPO by an average of 3.3% while using only half the rollout cost, a significant efficiency gain for multi-agent systems.

Vivaldi presents a role-structured multi-agent system for interpreting physiological time series. Key finding: agentic pipelines improve explanation quality for non-thinking models (+6.9 and +9.7 points) but can degrade performance for thinking models (a 14-point drop in relevance). This context-dependent picture challenges the assumption that agentic reasoning uniformly improves outcomes.

Trust, Verification & Safety

AgentVista introduces a highly challenging benchmark spanning 25 sub-domains, on which even the best model (Gemini-3-Pro with tools) reaches only 27.3% overall accuracy; hard instances require more than 25 tool-calling turns. This sobering result highlights how far current agents remain from reliable real-world deployment.

Tools & Frameworks in Practice

DARE targets LLM agents' underuse of R's statistical ecosystem with distribution-aware retrieval, achieving 93.47% NDCG@10 and outperforming state-of-the-art embedding models by up to 17% while using substantially fewer parameters.
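For readers unfamiliar with the metric: NDCG@10 scores the top 10 retrieved items by relevance, discounts each by its rank, and divides by the score of the best possible ordering, so 93.47% means DARE's rankings come close to ideal. The sketch below uses the common linear-gain variant (rel_i / log2(i + 1)). The DARE paper may instead use the exponential-gain form (2^rel − 1), and the ranking and relevance judgments in the example are made up.

```python
import math


def dcg_at_k(rels: list[float], k: int) -> float:
    """Discounted cumulative gain, linear gains: sum of rel_i / log2(i + 1) for ranks i = 1..k."""
    # enumerate starts at 0, so the item at rank i = idx + 1 is discounted by log2(idx + 2).
    return sum(rel / math.log2(idx + 2) for idx, rel in enumerate(rels[:k]))


def ndcg_at_k(ranking: list[str], judgments: dict[str, float], k: int = 10) -> float:
    """NDCG@k: DCG of the system ranking divided by DCG of the ideal ranking."""
    gains = [judgments.get(doc, 0.0) for doc in ranking]
    # The ideal ordering uses every judged item sorted by relevance, not just the retrieved ones.
    ideal = sorted(judgments.values(), reverse=True)
    idcg = dcg_at_k(ideal, k)
    return dcg_at_k(gains, k) / idcg if idcg > 0 else 0.0


# Hypothetical query with two relevant R functions; the retriever ranks one of them third.
judgments = {"stats::t.test": 1, "stats::wilcox.test": 1}
ranking = ["stats::wilcox.test", "base::mean", "stats::t.test"]
print(round(ndcg_at_k(ranking, judgments), 4))  # 0.9197
```

Here DCG = 1 + 1/log2(4) = 1.5 and the ideal DCG = 1 + 1/log2(3) ≈ 1.631, so pushing one relevant item down a single rank costs about 8 points of NDCG.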

SkillNet also releases an interactive platform and a Python toolkit alongside its 200,000-skill repository, giving agent developers infrastructure they can use immediately.

Key Takeaways

- Memory architecture matters: indexed exact recall (Memex) and reusable skill libraries (SkillNet) together represent a major step toward cumulative agent learning

- Parallel hierarchical planning (HiMAP) delivers both better outcomes and lower latency than sequential approaches

- Agentic reasoning isn't universally beneficial — context determines whether adding agents helps or hurts (Vivaldi)

- Current agents are still far from reliable deployment: the best result on a challenging benchmark is only 27.3% accuracy (AgentVista)

- Domain-specific retrieval (DARE for R) significantly outperforms general-purpose embedding approaches

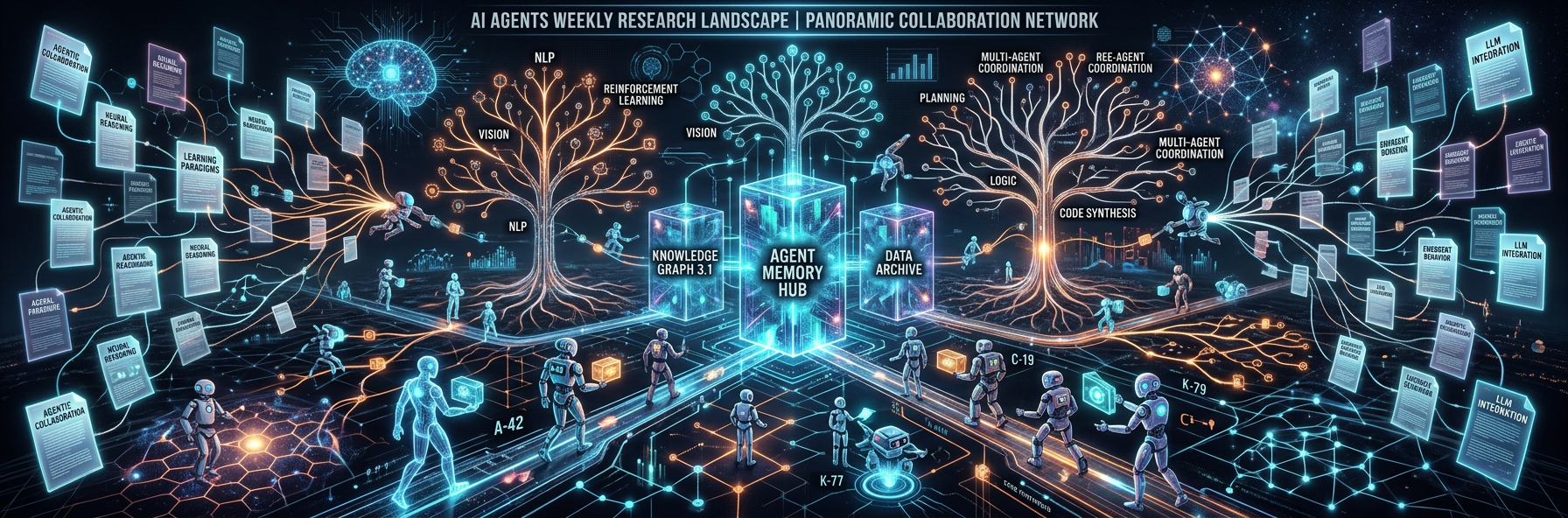

Infographics