80 out of every 200 employees exist to manage handoffs that agents are eliminating + the coordination tax audit to find yours

Original article: Nate's Substack

Processed: March 13, 2026

Summary

Main Thesis

Every AI workforce impact study makes the same fundamental mistake: it surveys roles, decomposes them into tasks, assesses which tasks AI can perform, and outputs a percentage. The methodology is clean, and the answer is dramatically wrong. The reason: the tasks knowledge workers perform today are not a natural set of things that need doing. They're artifacts of human coordination. When AI changes the execution substrate, the task distribution changes with it.

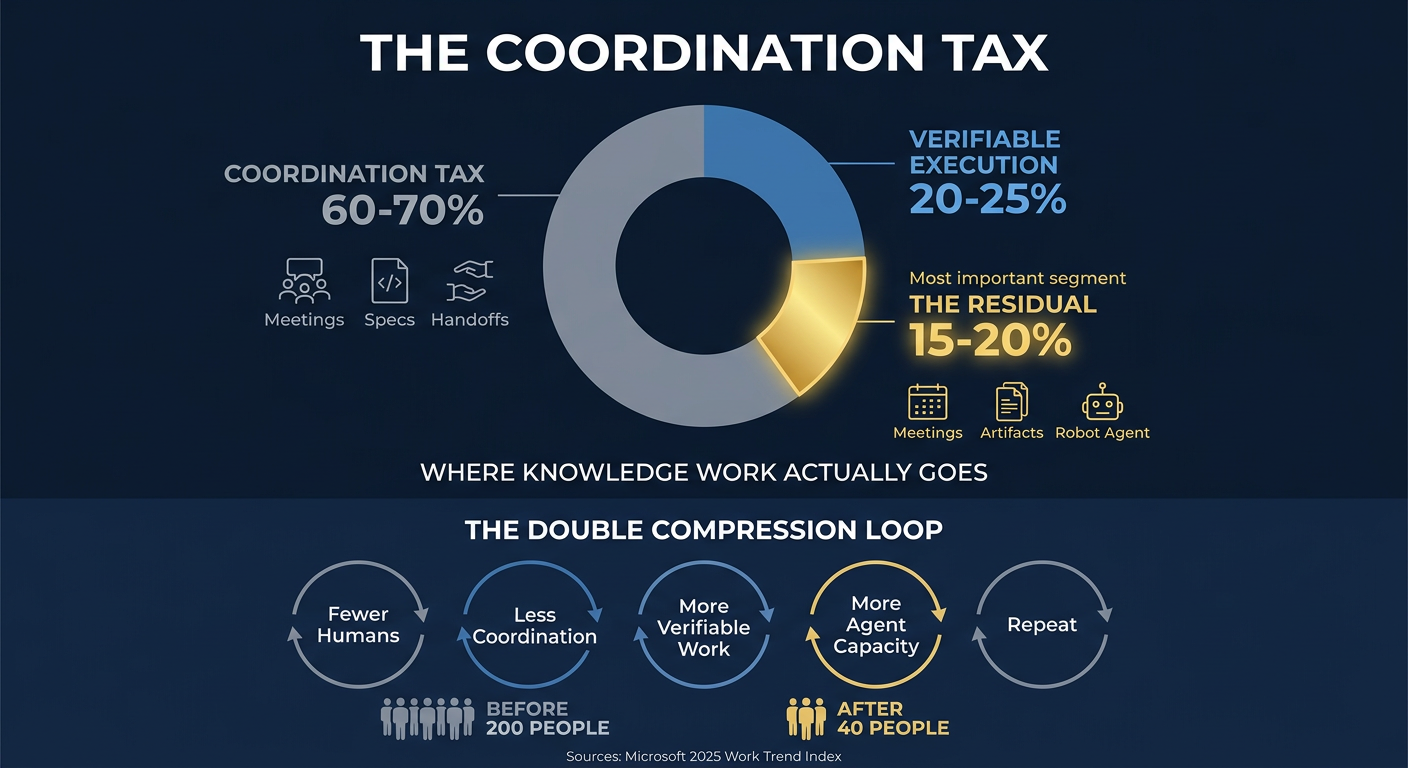

Nate's central argument is the Coordination Tax: in a typical 200-person tech company, 60–70% of all labour hours exist not to create value, but to manage the friction of humans working with other humans. Meetings, PRDs, specs, briefs, decks, status updates, cross-functional syncs — these artifacts exist because humans can't share a brain. Agents don't have that problem.

Key Data Points and Findings

The Coordination Tax by the numbers:

- Microsoft's 2025 Work Trend Index: average employee spends 57% of time communicating (meetings, email, chat) and only 43% creating

- Asana's Anatomy of Work study: 60% of time is "work about work"

- Average knowledge worker: 11.3 hours of meetings per week — nearly a third of the working week

- That meeting load has tripled since 2020

The workforce math for a 200-person company:

- 60–70% of labour hours = coordination → maps to 120–140 people primarily doing coordination

- Of the remaining 30–40%, roughly half is verifiable execution already within agent range

- Only 15–20% is genuine judgment residual (product vision, brand strategy, empathy, architecture)

- Net result: 40–60% of current headcount (80–120 people) exists to do work that's either already automatable or will be within 18 months
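The headcount figures above follow from simple percentage arithmetic, which a minimal sketch can reproduce. The helper `headcount_range` and the variable names are illustrative, not from the article. The sketch only converts the quoted percentages into people for a 200-person company; it does not derive the 40–60% net figure, which is taken as stated.

```python
def headcount_range(total: int, low_pct: int, high_pct: int) -> tuple[int, int]:
    """Convert a percentage range into a headcount range using integer arithmetic."""
    return total * low_pct // 100, total * high_pct // 100


COMPANY_SIZE = 200  # the article's example company

# Percentages as quoted in the list above.
coordination = headcount_range(COMPANY_SIZE, 60, 70)
remaining = headcount_range(COMPANY_SIZE, 30, 40)
# "Roughly half" of the remaining work is verifiable execution.
verifiable_execution = (remaining[0] // 2, remaining[1] // 2)
judgment_residual = headcount_range(COMPANY_SIZE, 15, 20)
# The article states 40-60% directly; this line only converts it to people.
net_exposed = headcount_range(COMPANY_SIZE, 40, 60)

for label, (low, high) in {
    "coordination": coordination,
    "verifiable execution": verifiable_execution,
    "judgment residual": judgment_residual,
    "net exposed headcount": net_exposed,
}.items():
    print(f"{label}: {low}-{high} people")
```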

Real-world evidence:

- Salesforce cut customer support from 9,000 → ~5,000 after AI agents handled ~half of all customer interactions in under a year

- Block (Jack Dorsey) cut from ~10,000 → ~6,000, with the market rewarding the AI framing (+20% stock)

- Cursor's February 2026 update: developers can spin up 8 parallel cloud VM agents simultaneously, each delivering video demos and opening PRs when done

- Claude Code at $2.5B annualised revenue; Codex at 1.6 million weekly active users

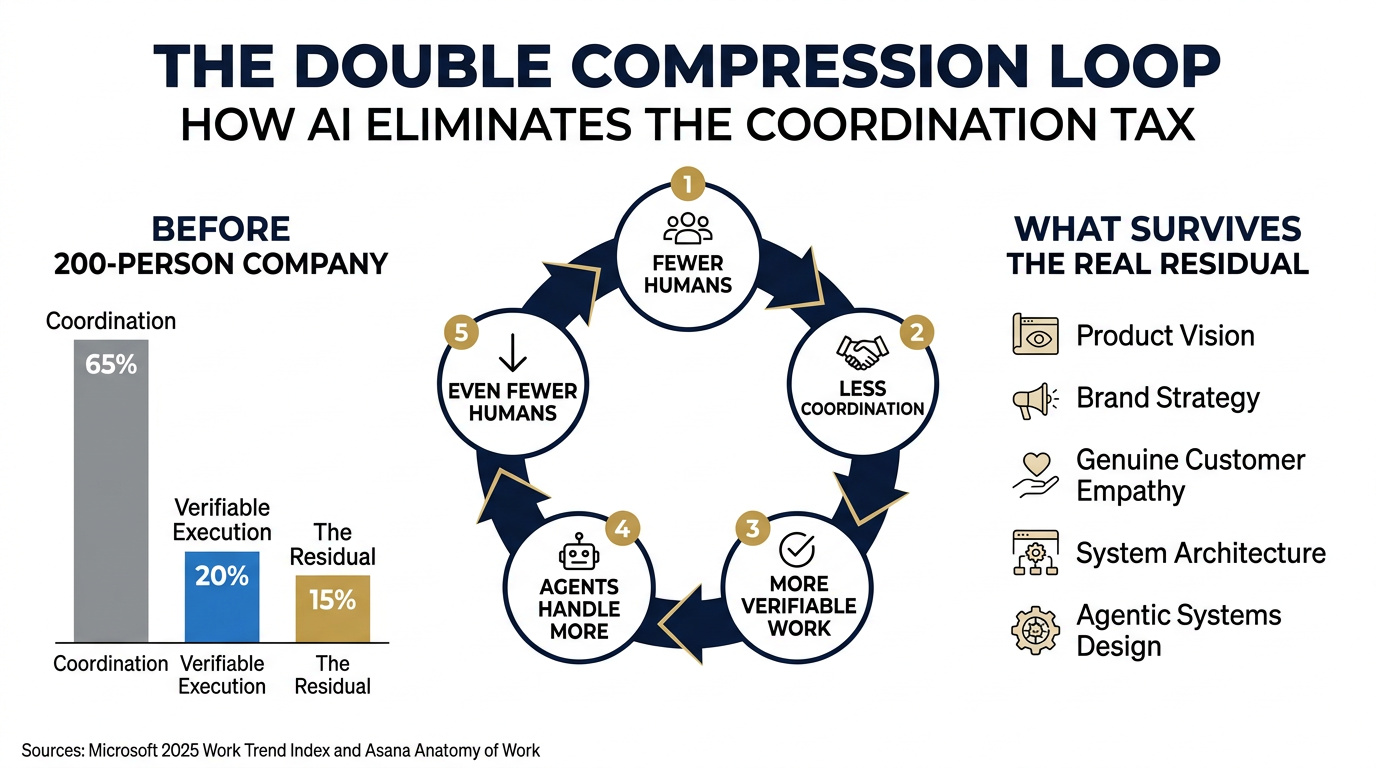

The Double Compression Loop

This is the structural argument for why standard forecasts are too conservative by a factor of 2–3:

1. Fewer humans in execution → less coordination needed

2. Less coordination → fewer coordination roles

3. Remaining work becomes more verifiable (expressed as code or code-like artifacts)

4. More verifiable work → agents can handle more

5. Agents handle more → even fewer humans needed → back to step 1

Each turn of this loop accelerates the next. The current task distribution is an artifact of the current org structure — change the structure and the tasks change with it.
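A toy simulation can make the compounding in this loop concrete. Everything in the sketch below is an assumption made for illustration: the `OrgState` class, the linear link between coordination roles and execution headcount, the 20% starting verifiable share, and the 10-point gain per round are all hypothetical and do not come from the article. Only the 200-person company with 60% of its people in coordination echoes the article's figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrgState:
    execution_humans: float
    coordination_humans: float
    verifiable_share: float  # share of remaining execution work agents absorb next round

    @property
    def total(self) -> float:
        return self.execution_humans + self.coordination_humans


def step(state: OrgState, coord_per_executor: float, verifiability_gain: float) -> OrgState:
    # Agents absorb the verifiable share, so fewer humans remain in execution.
    execution = state.execution_humans * (1 - state.verifiable_share)
    # Assumption: coordination roles scale linearly with the humans left to coordinate.
    coordination = execution * coord_per_executor
    # Assumption: each round makes more of the remaining work verifiable, capped at 90%.
    verifiable = min(0.9, state.verifiable_share + verifiability_gain)
    return OrgState(execution, coordination, verifiable)


def simulate(
    initial: OrgState,
    rounds: int,
    coord_per_executor: float = 1.5,
    verifiability_gain: float = 0.1,
) -> list[OrgState]:
    history = [initial]
    for _ in range(rounds):
        history.append(step(history[-1], coord_per_executor, verifiability_gain))
    return history


# 200 people split 120/80 between coordination and execution (the low end of the
# article's 60-70% range). The 0.2 starting share and 0.1 gain are made-up inputs.
history = simulate(OrgState(80.0, 120.0, 0.2), rounds=3)
for round_number, state in enumerate(history):
    print(f"round {round_number}: {state.total:.1f} people")
```

Under these assumptions, headcount shrinks by a larger proportion in each successive round. A static task-by-task forecast would correspond to applying only the first round's share once, which is the gap the article points to.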

What "Looks Like Judgment" But Isn't

Nate identifies a crucial conflation: we bundle genuine judgment under uncertainty with coordination overhead that feels like judgment because it's complex.

- "Strategic vision" decomposes into probabilistic bets with researchable evidence. It felt non-verifiable because the synthesis process was opaque, not because of the nature of the decisions themselves.

- "Brand positioning" feels creative and subjective — but we measure it with NPS, sentiment, conversion. Make the feedback loops faster and it starts resembling A/B testing.

- "Reading the room" in sales: how much is truly interpersonal versus showing up with the right information, proposal structure, BATNA analysis, and customer political dynamics? If agents handle 90% of preparation, the human's judgment is applied in a narrower, higher-leverage context.

The pattern everywhere: what appears to be a single "judgment-heavy" role is almost always verifiable preparation work + a thin layer of genuine non-verifiable judgment on top. Agent harnesses unbundle them.

The Real Residual: What Actually Survives

Five categories of genuinely human work that agents can't yet replace:

- Product Vision — Not the PRD or feature spec, but the upstream conviction about what the product is and who it's for. The willingness to kill a working feature because it conflicts with the 2-year direction.

- Brand as Thought Work — Not the brand guidelines doc (that's a spec; agents execute it). The deep strategic thinking about what a company means. What makes Patagonia "environmental activism that sells jackets" rather than "outdoor apparel."

- Genuine Care in Customer Relationships — Not ticket resolution (automated). The judgment that says: this customer is telling us one thing but needs another. Empathy isn't a metric.

- Engineering Architecture — The structural bet that says: given where this system needs to be in 3 years, this is the right foundation. A commitment that constrains everything downstream, made with incomplete information, whose quality only becomes apparent years later.

- Agentic Systems Design — An entirely new discipline: defining task decomposition strategies, designing verification criteria, choosing what gets delegated vs. retained, debugging multi-agent failure modes over long time horizons. Nate argues this will be the most important operational competency in technology within two years.

The Human Dimension

Nate is explicit that the coordination tax wasn't just inefficient — it was dehumanising. It buried smart, creative, ambitious people in synchronisation overhead until they forgot what drew them to their field.

The two qualities separating people who are thriving in this transition from those who aren't:

- Agency: The posture that says this is a skill issue and I can learn it. Not panic, not denial — stubborn confidence that the gap is closeable.

- Ramp: The ability to learn quickly. Curiosity-driven. Diving into something unfamiliar and building competence before you have permission or a roadmap.

Prompt Kit

Source: Grab the prompts

This kit turns the article's "coordination tax" framework into a personal career audit. Run these four prompts in sequence — each builds on clarity from the one before.

Prompt 1: The Coordination Tax Audit

Job: Decomposes your actual work week into coordination overhead, verifiable execution, and genuine judgment work — with exact hour counts and percentages.

When to use: When you want an honest picture of how you actually spend your time, not how your job description says you should.

What you'll get: A categorised time breakdown of your typical week, a coordination tax percentage, and a clear-eyed comparison between your role as described and your role as lived.

What the AI will ask you: Your job title and function, a walkthrough of your typical week's activities (meetings, artifacts you produce, actual creation work), and what your role's "point" is — the value it ultimately exists to deliver.
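The shape of the audit's output can be sketched in a few lines. This is not the prompt itself, only an illustration of the three-way breakdown it describes. The `WEEK` entries are a hypothetical 40-hour week, not data from the article, and the function name `audit` is made up for the example.

```python
from collections import defaultdict

# Hypothetical week, not data from the article: (activity, category, hours).
WEEK = [
    ("meetings and syncs", "coordination", 12.0),
    ("status updates and email", "coordination", 8.0),
    ("writing specs and decks", "coordination", 6.0),
    ("implementation work", "execution", 10.0),
    ("product direction decisions", "judgment", 4.0),
]


def audit(entries: list[tuple[str, str, float]]) -> dict[str, tuple[float, float]]:
    """Return {category: (hours, percent of total hours)} for a week of activities."""
    hours: dict[str, float] = defaultdict(float)
    for _activity, category, spent in entries:
        hours[category] += spent
    total = sum(hours.values())
    if total == 0:
        raise ValueError("no hours recorded")
    return {cat: (h, round(100 * h / total, 1)) for cat, h in hours.items()}


result = audit(WEEK)
for category, (hours_spent, percent) in result.items():
    print(f"{category}: {hours_spent:.1f} h ({percent}%)")
```

The percentage for the `coordination` category is the coordination tax. The sample week was built so that it lands inside the article's 60–70% range.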

Prompt 2: Role Vulnerability Assessment

Job: Maps your role's structural exposure — not just which tasks AI can do, but which tasks will stop existing when the coordination layer collapses.

When to use: After completing the Coordination Tax Audit (Prompt 1), or when you want to understand how durable your current role is against organisational restructuring, not just task automation.

What you'll get: A three-layer vulnerability map of your role, a structural exposure score, and an honest assessment of what happens to your function when coordination overhead drops by 80%.

What the AI will ask you: Your Coordination Tax Audit results (or your role details if starting fresh), what your company builds, how your team is structured, and how directly you touch the product or the customer.

Prompt 3: The Residual Skills Inventory

Job: Identifies and stress-tests the "real residual" capabilities you already have — the skills that survive the coordination collapse — and exposes the gaps you need to close.

When to use: After completing the Vulnerability Assessment (Prompt 2), or when you want to take stock of which high-durability skills you actually possess versus which ones are aspirational.

What you'll get: A personal inventory mapped against the five durable skill categories from the article, with honest ratings of depth in each, evidence from your own experience, and a gap analysis showing where you're strong, underdeveloped, or have no foothold at all.

What the AI will ask you: Your career history and proudest work, examples of decisions you've made under genuine uncertainty, how you relate to product/customer/craft, and your current relationship with AI tools.

Prompt 4: The 90-Day Repositioning Plan

Job: Converts your audit results, vulnerability assessment, and skills inventory into a concrete 90-day action plan to shift your career toward the durable residual.

When to use: After completing any or all of the first three prompts, or when you're ready to stop analysing and start moving.

What you'll get: A week-by-week plan with specific actions to shed coordination overhead, deepen residual skills, build agentic fluency, and position yourself for the compressed organisation, plus a decision framework for whether to reposition within your current role, elsewhere in your company, or somewhere new.

What the AI will ask you: Your results from the prior prompts (or your situation if starting fresh), your current constraints, your risk tolerance, and what you actually want your work to feel like.

Infographics