55% of employers regret AI-driven layoffs. The agents are good at tasks and terrible at jobs. Here's what that means for your team and the 3 prompts that close the gap.

Original article: Nate's Substack

Date processed: 2026-03-23

Summary

Main Thesis

AI agents are increasingly capable at discrete tasks, but they remain fundamentally bad at jobs: the continuous, context-rich, institutionally aware work that keeps organisations running. A new wave of research confirms this isn't a prompting problem; it's a structural one. The gap between an agent's two-hour memory and an expert employee's multi-year institutional knowledge causes agent failures that are not random; they are predictable and preventable.

Key Data Points & Findings

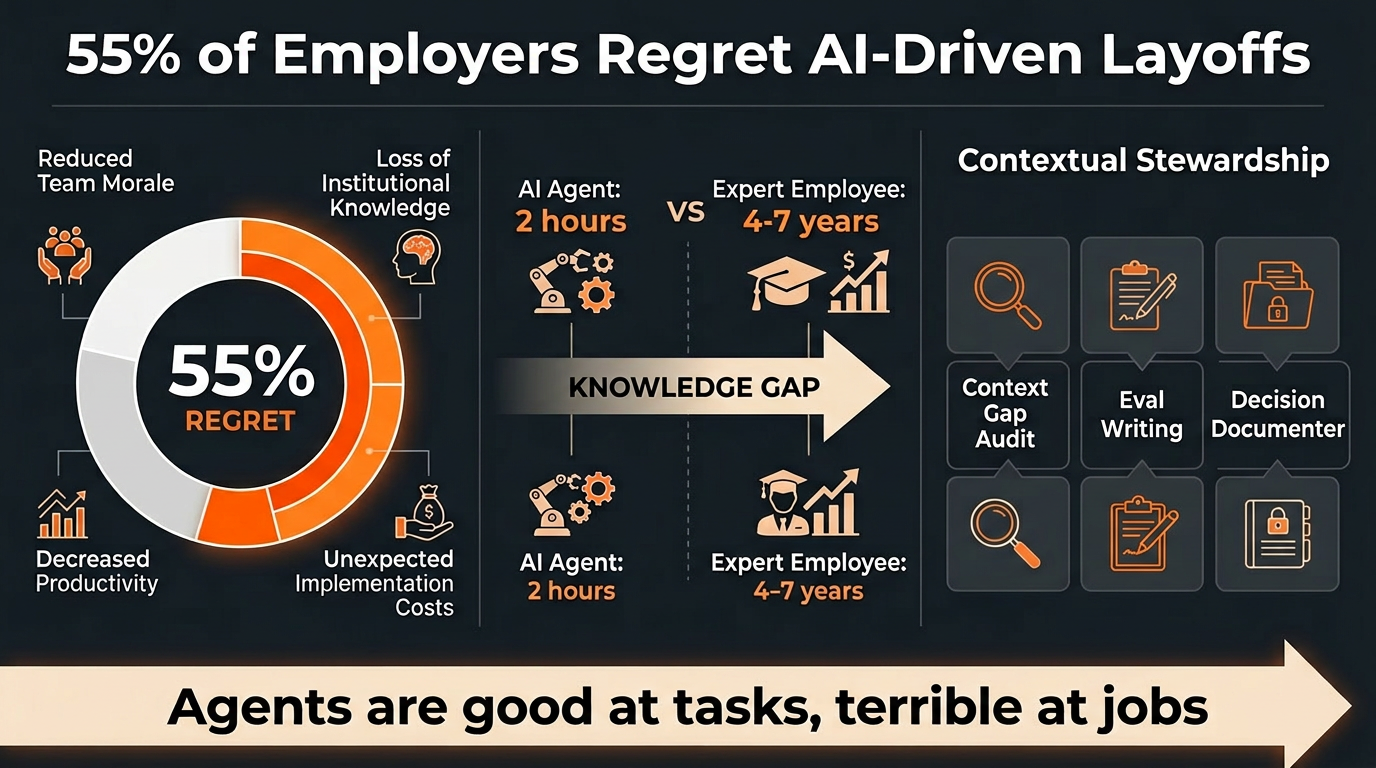

- 55% of employers who made AI-driven layoffs report regretting the decision.

- The average software job in America lasts 18 months to 2 years; the average AI agent run lasts about 2 hours.

- Expert employees who hold institutional context often stay 4–7+ years.

- Alexey Grigorev's AI coding agent wiped 1.9 million rows of production data — every action was locally correct, but the agent had no idea it was operating on live infrastructure.

- Three new studies confirm this is a repeating pattern, not a one-off.

- Two benchmarks measuring the same AI models produced wildly different results, and the reason exposes a core confusion in the agent discourse: task performance versus job performance.

- Harvard studied 62 million workers; the data doesn't say what the AI-optimist headlines claim.

Key Arguments

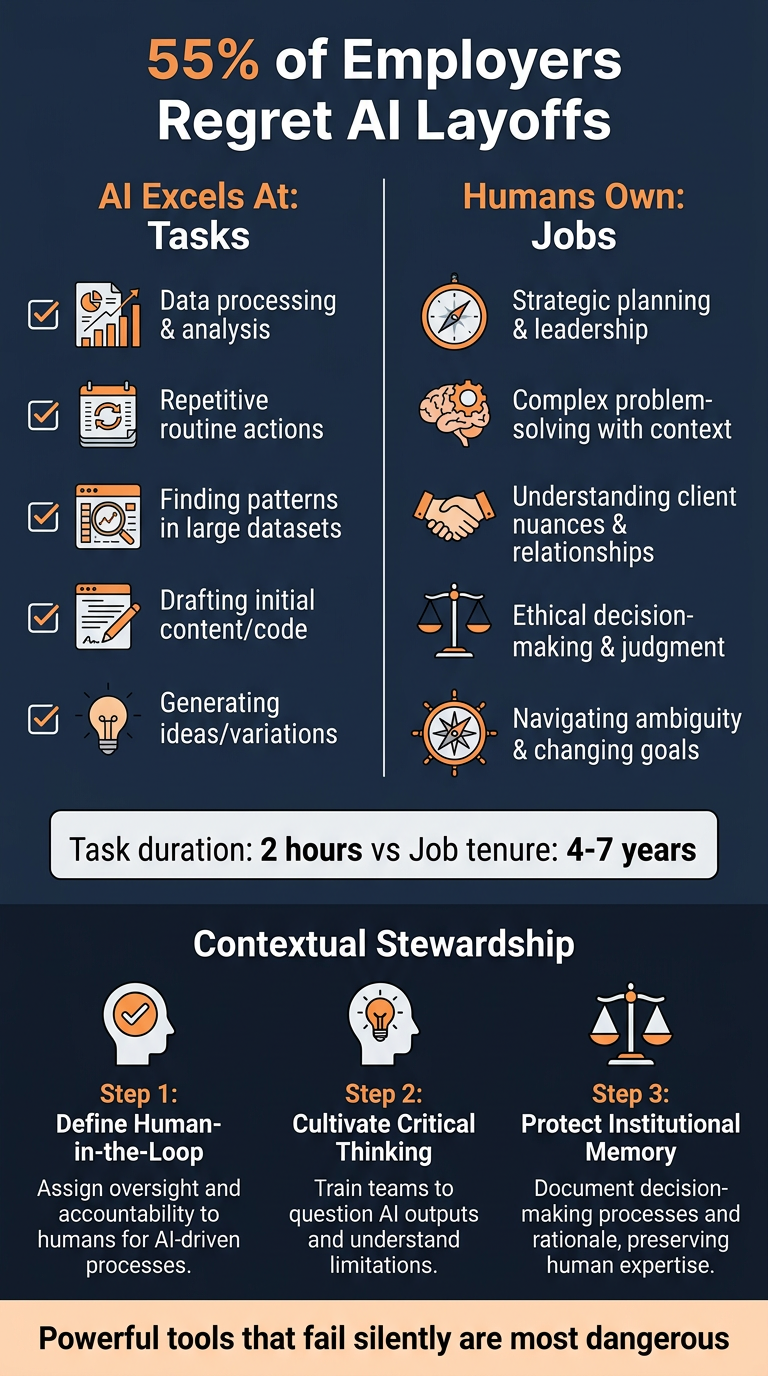

- The task-vs-job gap is the real problem. Agents excel at bounded, well-specified tasks. Jobs require judgment about context that exists only in human heads.

- More powerful tools fail more dangerously. A mediocre tool that fails obviously is annoying. A powerful tool that fails silently is dangerous — and agents are now powerful enough to cause catastrophic silent failures.

- Cursor's "AI built Excel from scratch" story has a buried detail that most coverage ignores: the part that made it work was human contextual oversight, not the AI's raw capability.

- The invisible skill the labour market is already paying for is contextual stewardship — and most organisations are getting the investment exactly backwards by assigning eval-writing to junior staff.

Practical Takeaways

- Contextual stewardship is the emerging human role: understanding what the agent doesn't know it doesn't know, and building infrastructure to catch those blind spots.

- Your best, most experienced people should be writing evals — not your most junior.

- The highest-leverage investment is not better prompts or bigger context windows; it's human judgment about what matters, what's fragile, and what the AI doesn't know.
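As one illustration of "infrastructure to catch those blind spots", here is a minimal sketch of a guardrail that encodes a single piece of context an agent may lack: that an environment is live. It is not from the article. The source does not say how the production data in the Grigorev example was deleted, so SQL is an assumption made for illustration, and every name here (`ProposedAction`, `requires_human_review`, the pattern list) is hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for statements that destroy data. A real team would
# maintain its own list, ideally written by the people who know what is fragile.
DESTRUCTIVE_PATTERNS = [
    re.compile(r"^\s*DROP\s+(TABLE|DATABASE)\b", re.IGNORECASE),
    re.compile(r"^\s*TRUNCATE\b", re.IGNORECASE),
    # A DELETE with no WHERE clause removes every row in the table.
    re.compile(r"^\s*DELETE\s+FROM\s+\S+\s*;?\s*$", re.IGNORECASE),
]


@dataclass(frozen=True)
class ProposedAction:
    """One step an agent wants to run (hypothetical shape)."""
    statement: str
    environment: str  # assumed labels: "dev", "staging", "production"


def is_destructive(statement: str) -> bool:
    """Return True if the statement matches any known destructive pattern."""
    return any(p.search(statement) for p in DESTRUCTIVE_PATTERNS)


def requires_human_review(action: ProposedAction) -> bool:
    """Flag destructive statements aimed at production for a human to check."""
    return action.environment == "production" and is_destructive(action.statement)


if __name__ == "__main__":
    action = ProposedAction("DELETE FROM orders;", "production")
    if requires_human_review(action):
        print("Blocked pending human review:", action.statement)
```

The check itself is trivial; the value lies in who decides what belongs in the pattern list and which environments count as live, which is the kind of judgment the takeaways above assign to experienced staff.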

Prompts Included

- Context Gap Audit — surfaces the institutional knowledge your agents don't have access to

- Eval Writer for Non-Engineers — generates evaluation criteria based on real work patterns

- Decision Documenter — captures reasoning and context that agents will never know on their own

Infographics